Biostatistics — MCQs

A clinical trial is stopped early after interim analysis shows the new treatment is superior to standard care (p = 0.001). However, only 60% of planned enrollment was completed. Analyze the implications of early trial termination.

A meta-analysis of 20 studies shows heterogeneity (I² = 85%) in results examining the effectiveness of a new treatment. The overall effect size is statistically significant (p < 0.01). Analyze the implications of this heterogeneity for clinical practice.

A randomized controlled trial of a new antidepressant shows statistically significant improvement (p = 0.02) compared to placebo. However, the effect size is small (Cohen's d = 0.2), and the confidence interval barely excludes the null. Analyze the clinical implications of these findings.

A research study on a new diabetes medication reports the following: Control group event rate = 20%, Treatment group event rate = 15%, Relative risk = 0.75, Number needed to treat = 20. Analyze these results and determine what they indicate about the treatment's clinical significance.
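The figures quoted in this question can be checked against one another with a few lines of arithmetic. The sketch below is only an illustration: the function name `risk_summary` and its return keys are hypothetical, and the event rates are the ones stated in the question.

```python
def risk_summary(control_rate, treatment_rate):
    """Derive standard effect measures from two event rates (as proportions)."""
    relative_risk = treatment_rate / control_rate        # RR
    absolute_risk_reduction = control_rate - treatment_rate  # ARR
    relative_risk_reduction = 1 - relative_risk          # RRR
    number_needed_to_treat = 1 / absolute_risk_reduction  # NNT
    return {
        "rr": relative_risk,
        "arr": absolute_risk_reduction,
        "rrr": relative_risk_reduction,
        "nnt": number_needed_to_treat,
    }


# Event rates taken from the question: 20% control, 15% treatment.
summary = risk_summary(0.20, 0.15)
for name, value in summary.items():
    print(f"{name}: {value:.2f}")
```

With these inputs the absolute risk reduction is 5 percentage points, which is where the stated NNT of 20 comes from, while the relative risk of 0.75 corresponds to a 25% relative risk reduction.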

A clinical trial comparing two medications reports a relative risk of 0.75 (95% CI: 0.60-0.90) for the primary outcome. How should this result be interpreted?

A researcher conducts a study comparing two treatments and finds a p-value of 0.08. The study had 80% power to detect a clinically meaningful difference. How should this result be interpreted?

A diagnostic test has a likelihood ratio positive (LR+) of 10 and likelihood ratio negative (LR-) of 0.1. A patient has a pre-test probability of 20% for the disease. If the test is positive, what is the approximate post-test probability?
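One common way to work this kind of problem is to convert the pre-test probability to odds, multiply by the likelihood ratio, and convert back. The sketch below assumes that approach; the function name `post_test_probability` is hypothetical, and the inputs are the values given in the question.

```python
def post_test_probability(pre_test_probability, likelihood_ratio):
    """Update a probability with a likelihood ratio via the odds form of Bayes' theorem."""
    pre_test_odds = pre_test_probability / (1 - pre_test_probability)
    post_test_odds = pre_test_odds * likelihood_ratio
    return post_test_odds / (1 + post_test_odds)


# Values from the question: pre-test probability 20%, LR+ = 10, LR- = 0.1.
after_positive = post_test_probability(0.20, 10)
after_negative = post_test_probability(0.20, 0.1)
print(f"Post-test probability after a positive test: {after_positive:.1%}")
print(f"Post-test probability after a negative test: {after_negative:.1%}")
```

Pre-test odds of 0.25 multiplied by an LR+ of 10 give post-test odds of 2.5, that is, a post-test probability of roughly 71%.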

A research study found a statistically significant result with p = 0.03. The confidence interval for the effect size is 0.5 to 2.1. How should this result be interpreted?

A new screening test for disease X has a sensitivity of 90% and specificity of 80%. In a population where the prevalence of disease X is 5%, what is the positive predictive value of this test?
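The positive predictive value follows from Bayes' theorem once sensitivity, specificity, and prevalence are known. The sketch below is a minimal illustration using the numbers in the question; the function name `positive_predictive_value` is hypothetical.

```python
def positive_predictive_value(sensitivity, specificity, prevalence):
    """PPV = true positives / (true positives + false positives), per unit of population."""
    true_positives = sensitivity * prevalence
    false_positives = (1 - specificity) * (1 - prevalence)
    return true_positives / (true_positives + false_positives)


# Values from the question: sensitivity 90%, specificity 80%, prevalence 5%.
ppv = positive_predictive_value(0.90, 0.80, 0.05)
print(f"Positive predictive value: {ppv:.1%}")
```

At 5% prevalence, false positives (19% of the population) far outnumber true positives (4.5%), so the PPV comes out at roughly 19% despite the high sensitivity.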

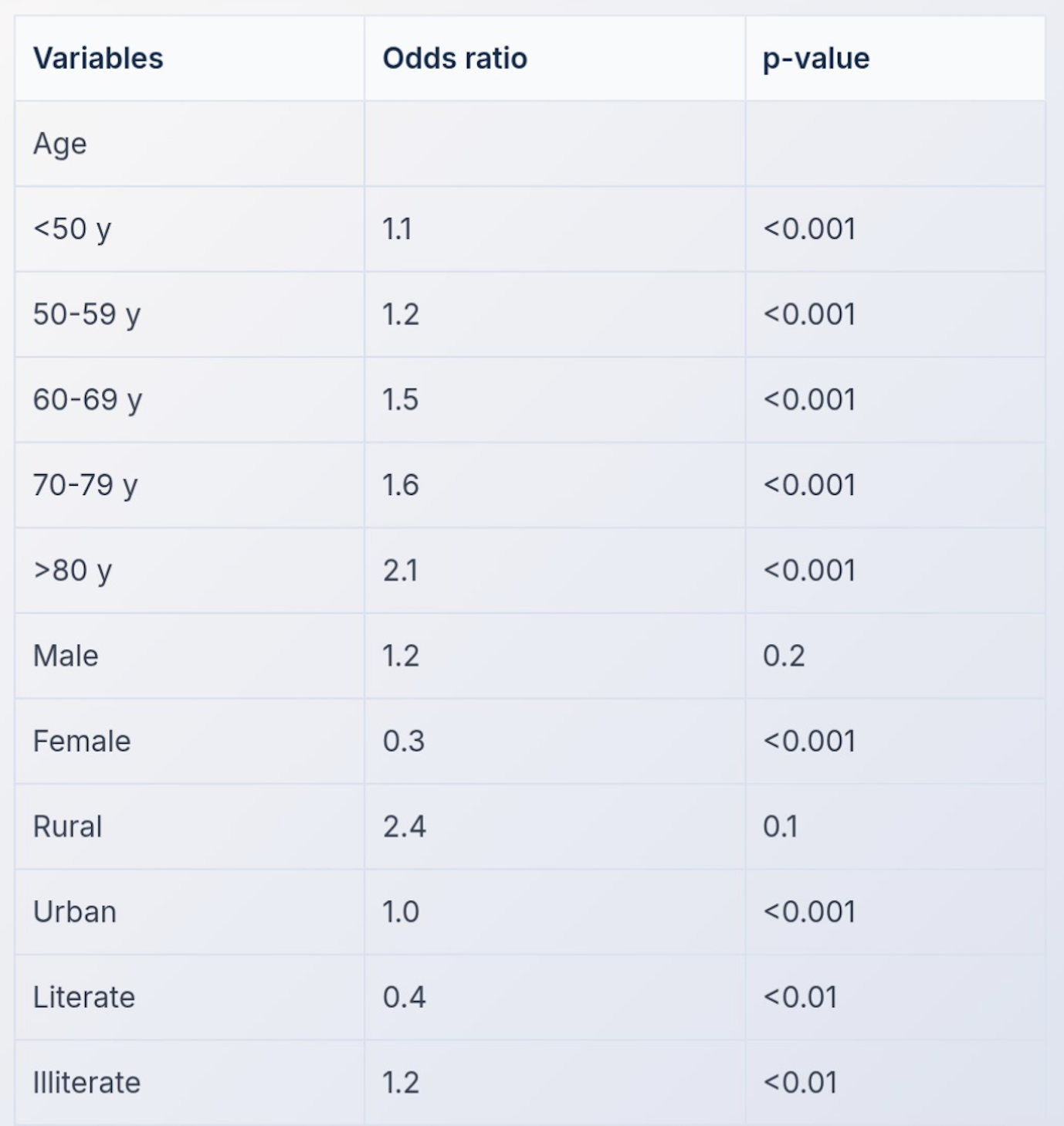

A multivariate analysis was conducted to examine the relationship between risk of developing blindness and age. The results are shown in the table below. Which of the following is true?

Practice by Chapter

Collection and Presentation of Data

Practice Questions

Measures of Central Tendency

Practice Questions

Measures of Dispersion

Practice Questions

Normal Distribution

Practice Questions

Sampling Methods

Practice Questions

Sample Size Calculation

Practice Questions

Hypothesis Testing

Practice Questions

Tests of Significance

Practice Questions

Correlation and Regression

Practice Questions

Survival Analysis

Practice Questions

Multivariate Analysis

Practice Questions

Statistical Software in Research

Practice Questions
