Biostatistics — MCQs

On this page

In the estimation of statistical probability, Z score is applicable to:

The two important values necessary for describing the variation in a series of observations are:

Which one of the following tests should be applied to compare mean haemoglobin level of two groups of antenatal mothers?

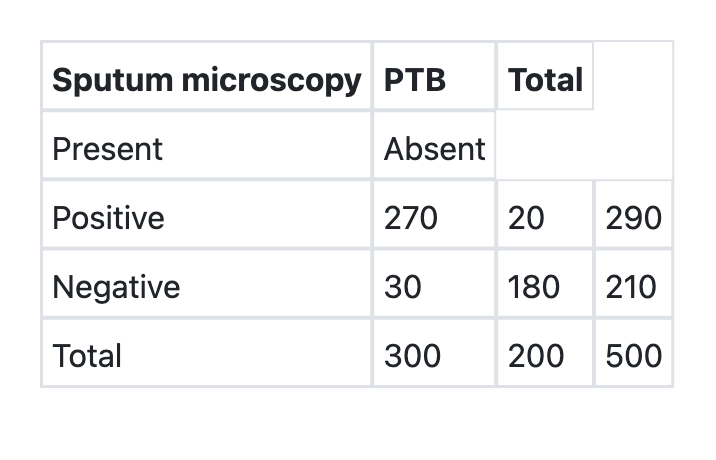

What is the specificity of sputum microscopy in detection of Pulmonary Tuberculosis (PTB) as per the information given below?

A research study comparing two groups shows a statistically significant difference (p=0.04) but the confidence interval is very wide (0.5 to 4.2). How should this result be interpreted?

A randomized controlled trial of a new diabetes medication is stopped early when interim analysis shows significant benefit. The trial enrolled only 60% of the planned participants. What is the most important consideration about this early termination?

A meta-analysis of 10 studies shows significant benefit from a new treatment (p=0.001), but there is high heterogeneity between studies (I² = 78%). Individual studies show conflicting results. How should this evidence be interpreted?

A clinical trial shows that a new cardiac medication reduces mortality from 20% to 15%. The relative risk is 0.75 and the number needed to treat is 20. How should the clinical significance be interpreted?

A researcher wants to study a new antidepressant in patients with severe depression. Standard antidepressants are available and effective. The pharmaceutical company insists on a placebo-controlled design, arguing it provides the clearest evidence of efficacy. What is the most appropriate study design?

A surgeon notices that their complication rates are higher than expected. Hospital administration suggests this might be due to taking more complex cases. Analyze the statistical concept that explains this phenomenon.
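The figures quoted in the cardiac medication question above (mortality falling from 20% to 15%, relative risk 0.75, number needed to treat 20) can be checked with a few lines of arithmetic. The sketch below is illustrative only; the function name `risk_summary` and its dictionary keys are assumptions made for this example and are not part of the question bank.

```python
def risk_summary(control_risk: float, treated_risk: float) -> dict:
    """Return common effect measures for two event risks given as proportions."""
    if not (0 < control_risk <= 1 and 0 <= treated_risk <= 1):
        raise ValueError("risks must be proportions, with control_risk > 0")

    arr = control_risk - treated_risk   # absolute risk reduction
    rr = treated_risk / control_risk    # relative risk
    rrr = 1 - rr                        # relative risk reduction
    # NNT is the reciprocal of ARR; it is undefined when there is no benefit.
    nnt = 1 / arr if arr > 0 else float("inf")
    return {"arr": arr, "rr": rr, "rrr": rrr, "nnt": nnt}


# Values taken from the question: 20% mortality without the drug, 15% with it.
summary = risk_summary(0.20, 0.15)
print(f"ARR = {summary['arr']:.1%}")
print(f"RR  = {summary['rr']:.2f}")
print(f"RRR = {summary['rrr']:.1%}")
print(f"NNT = {summary['nnt']:.0f}")
```

The absolute risk reduction is 5 percentage points, so the number needed to treat is 1 / 0.05 = 20, which matches the relative risk of 0.75 stated in the question. Reporting the absolute and relative measures side by side is what allows clinical significance to be judged, since a relative measure alone does not show how many patients benefit.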

Practice by Chapter

Collection and Presentation of Data

Measures of Central Tendency

Measures of Dispersion

Normal Distribution

Sampling Methods

Sample Size Calculation

Hypothesis Testing

Tests of Significance

Correlation and Regression

Survival Analysis

Multivariate Analysis

Statistical Software in Research
