Repeated Measures & Power - The Dynamic Duo

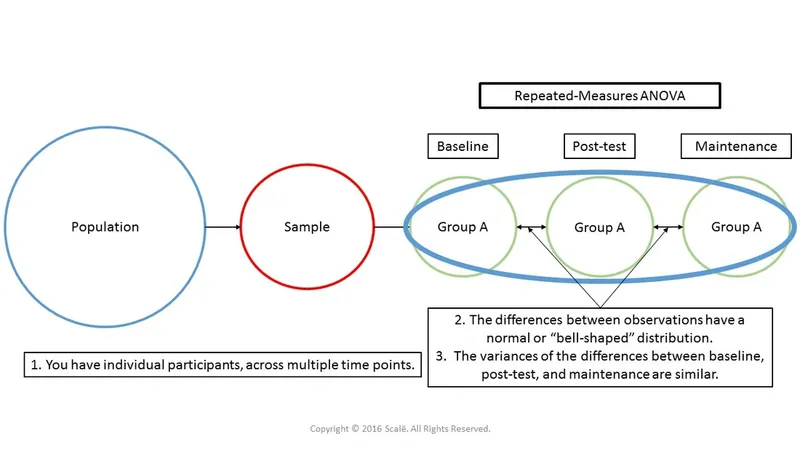

- Each subject serves as their own control, measured multiple times (e.g., before, during, and after an intervention).

- This design removes stable inter-individual (between-subject) variability from the error term, which substantially ↑ statistical power.

- Consequently, it requires a smaller sample size to detect a specific effect size compared to between-subject designs.

- For within-subject comparisons, power increases with the correlation ($ρ$) between repeated measurements: the higher the correlation, the greater the power.
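The correlation lever can be illustrated with a minimal sketch of a two-condition (e.g., pre/post) comparison analysed as a paired test. It assumes equal, known SDs at both time points and uses a normal approximation, which slightly overstates power relative to an exact paired t-test in small samples. `paired_power` and the parameter values are illustrative, not taken from any particular package.

```python
from math import sqrt
from statistics import NormalDist


def paired_power(n, delta, sigma, rho, alpha=0.05):
    """Approximate power of a two-sided paired comparison (normal approximation).

    Assumes two measurements per subject with equal SD `sigma` and correlation `rho`,
    so the SD of a within-subject difference is sigma * sqrt(2 * (1 - rho)).
    """
    if not -1 <= rho < 1:
        raise ValueError("rho must satisfy -1 <= rho < 1")
    z = NormalDist()
    sd_diff = sigma * sqrt(2 * (1 - rho))
    z_crit = z.inv_cdf(1 - alpha / 2)
    # How many standard errors the true mean difference sits away from zero
    shift = delta / (sd_diff / sqrt(n))
    # Probability of landing in either rejection region
    return (1 - z.cdf(z_crit - shift)) + z.cdf(-z_crit - shift)


# Same 20 subjects, same effect (delta = 5, sigma = 10); only rho changes
for rho in (0.0, 0.3, 0.6, 0.9):
    print(f"rho={rho:.1f}  power={paired_power(n=20, delta=5, sigma=10, rho=rho):.3f}")
```

With everything else fixed, power rises steadily as `rho` grows, because the SD of the within-subject difference shrinks by the factor $\sqrt{1-ρ}$.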

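⭐ In clinical trials, a crossover design is a type of repeated measures study: every subject receives all treatments, in randomly assigned sequences. Because each subject is their own control, the required sample size is reduced, and randomizing the sequence guards against order bias.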

Power Factors - What's the Driver?

Power in repeated measures designs hinges on factors that control variability and effect magnitude. Understanding these levers is key to efficient study design.

- Effect Size ($δ$): As the magnitude of the treatment effect or difference increases, power ↑.

- Sample Size ($N$): The most common lever. ↑ $N$ → ↑ power.

- Number of Measurements ($k$): More observations per subject (↑ $k$) → ↑ power, mainly by averaging out within-subject measurement error; the gain shrinks when $ρ$ is already high.

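- Correlation ($ρ$): Higher correlation between repeated measures → ↑ power for within-subject effects (time, treatment). This is unique to repeated measures designs: high $ρ$ means subjects are consistent across measurements, so less variance is left in the error term.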
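One simple way to see the $k$ lever and its diminishing returns, assuming compound symmetry (equal variance $σ^2$ and equal pairwise correlation $ρ$ at every time point): the variance of a subject's average over $k$ measurements is $σ^2[1 + (k-1)ρ]/k$. It falls as $k$ grows but levels off at $σ^2ρ$, so extra measurements help most when $ρ$ is low. `var_subject_mean` is an illustrative name.

```python
def var_subject_mean(sigma2, rho, k):
    """Variance of one subject's average over k measurements under compound symmetry.

    Each measurement has variance sigma2 and every pair has correlation rho:
    Var(mean) = (k * sigma2 + k * (k - 1) * rho * sigma2) / k**2
              = sigma2 * (1 + (k - 1) * rho) / k
    """
    return sigma2 * (1 + (k - 1) * rho) / k


for rho in (0.2, 0.8):
    row = " ".join(f"{var_subject_mean(1.0, rho, k):.3f}" for k in (1, 2, 4, 8))
    print(f"rho={rho}: {row}")
# rho=0.2: 1.000 0.600 0.400 0.300
# rho=0.8: 1.000 0.900 0.850 0.825
```

At $ρ = 0.2$, going from 1 to 8 measurements cuts the variance by 70%; at $ρ = 0.8$ the same extra effort buys less than 20%.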

⭐ In repeated measures ANOVA, the error variance is reduced by the covariance between measurements, because stable subject-to-subject differences are partitioned out of the error term. High positive correlation ($ρ$) between time points therefore shrinks the denominator of the F-statistic, boosting power without needing more subjects.
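A short derivation shows where the gain comes from, assuming the additive model with a random subject effect (compound symmetry): $\pi_i$ is subject $i$'s stable offset, $\tau_j$ the effect of time point $j$, $\pi_i$ and $\varepsilon_{ij}$ are independent, and $\sigma^2$ is the total variance of one measurement.

$$
\begin{aligned}
Y_{ij} &= \mu + \pi_i + \tau_j + \varepsilon_{ij}, \qquad \operatorname{Var}(\pi_i) = \sigma_\pi^2, \quad \operatorname{Var}(\varepsilon_{ij}) = \sigma_\varepsilon^2 \\
\sigma^2 &= \sigma_\pi^2 + \sigma_\varepsilon^2, \qquad \rho = \frac{\sigma_\pi^2}{\sigma^2}, \qquad \operatorname{Cov}(Y_{ij}, Y_{ij'}) = \sigma_\pi^2 = \rho\sigma^2 \\
\operatorname{Var}(Y_{ij} - Y_{ij'}) &= 2\sigma^2 - 2\rho\sigma^2 = 2\sigma^2(1-\rho) \\
E[\mathrm{MS}_{\text{error}}] &= \sigma_\varepsilon^2 = \sigma^2(1-\rho)
\end{aligned}
$$

In a between-subjects design the error mean square estimates $\sigma^2$ instead, so with $\rho = 0.6$ the expected F denominator is 60% smaller.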

Sample Size Quest - Sizing Up Your Study

- Fewer subjects are needed than in between-subjects designs, because each participant serves as their own control, reducing error variance.

- Key inputs for the calculation: α-level (e.g., 0.05), power (1 − β, conventionally ≥ 0.80), expected effect size, and number of repeated measurements.

- Crucially, the correlation (ρ) between repeated measures is a required input. A higher correlation significantly reduces the needed sample size.

- Always inflate the calculated sample size for anticipated dropouts (attrition), e.g., by dividing it by (1 − expected dropout rate).
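The inputs above can be combined in a minimal sample-size sketch for the simplest case of two repeated measurements (e.g., pre/post), assuming equal SDs at both time points and a normal approximation; an exact paired t-test calculation typically needs one or two more subjects. `paired_sample_size` is an illustrative name, and the dropout inflation uses the divide-by-(1 − rate) rule.

```python
from math import ceil
from statistics import NormalDist


def paired_sample_size(delta, sigma, rho, alpha=0.05, power=0.80, dropout=0.0):
    """Subjects needed for a two-sided paired comparison (normal approximation).

    Returns (completers needed, subjects to enrol after inflating for dropout).
    """
    if not (-1 <= rho < 1 and 0 <= dropout < 1):
        raise ValueError("need -1 <= rho < 1 and 0 <= dropout < 1")
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)  # two-sided critical value
    z_beta = z.inv_cdf(power)           # quantile for the target power
    var_diff = 2 * sigma**2 * (1 - rho)  # variance of a within-subject difference
    n_completers = ceil((z_alpha + z_beta) ** 2 * var_diff / delta**2)
    n_enrolled = ceil(n_completers / (1 - dropout))
    return n_completers, n_enrolled


print(paired_sample_size(delta=5, sigma=10, rho=0.5, dropout=0.15))  # (32, 38)
```

Raising `rho` from 0.5 to 0.8 in this example cuts the completers needed from 32 to 13, which is the "higher correlation reduces sample size" effect in numbers.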

⭐ In repeated measures designs, the correlation (ρ) between time points is paramount. Higher correlation means measurements are more consistent within a person, drastically reducing the sample size needed to detect an effect.

Design Pitfalls - Navigating the Maze

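- Carryover Effects: A prior treatment's effect lingers and influences the response to the next treatment.

  - Solution: Implement an adequate washout period.

- Order Effects: The sequence of treatments affects outcomes (e.g., practice, fatigue).

  - Solution: Counterbalance or randomize treatment order.

- Sphericity Violation: The variances of the differences between all pairs of repeated measures are unequal (only possible with ≥ 3 measurements); the uncorrected F-test then inflates Type I error.

  - Checked via Mauchly's test.

  - Correction: Use Greenhouse-Geisser or Huynh-Feldt adjustments, which scale the F-test's degrees of freedom by an estimate of ε (ε = 1 when sphericity holds).

- Missing Data: Attrition reduces power and can introduce bias.

  - ⚠️ Avoid biased methods like Last Observation Carried Forward (LOCF); mixed-effects models or multiple imputation use all available data and are valid when data are missing at random.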
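Counterbalancing can be made concrete with a Williams design, one standard way (not the only one) to build treatment orders: every treatment appears once in each period, and each treatment immediately follows every other treatment equally often, so first-order carryover is balanced across sequences instead of being confounded with treatment. The sketch assumes treatments are coded 0..k−1; `williams_sequences` and `assign_sequences` are hypothetical helper names. Balancing spreads carryover evenly; it does not remove it, so a washout period is still needed.

```python
import random


def williams_sequences(k):
    """Treatment orders (0..k-1) forming a Williams design."""
    # First row interleaves low and high codes: 0, 1, k-1, 2, k-2, ...
    first, lo, hi = [0], 1, k - 1
    take_low = True
    while len(first) < k:
        if take_low:
            first.append(lo)
            lo += 1
        else:
            first.append(hi)
            hi -= 1
        take_low = not take_low
    # Cyclic shifts of the first row give a Latin square
    square = [[(t + shift) % k for t in first] for shift in range(k)]
    # For odd k one square cannot balance carryover; add its mirror image
    if k % 2 == 1:
        square += [row[::-1] for row in square]
    return square


def assign_sequences(subject_ids, k, seed=None):
    """Deal subjects to sequences in shuffled blocks so group sizes stay balanced."""
    rng = random.Random(seed)
    sequences = williams_sequences(k)
    assignment, block = {}, []
    for sid in subject_ids:
        if not block:  # start a new block containing every sequence once
            block = sequences[:]
            rng.shuffle(block)
        assignment[sid] = tuple(block.pop())
    return assignment


# Two treatments reduce to the classic AB/BA crossover
for sid, seq in assign_sequences(["S01", "S02", "S03", "S04"], k=2, seed=1).items():
    print(sid, "".join("AB"[t] for t in seq))
```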

⭐ Violating sphericity makes the uncorrected F-test in repeated measures ANOVA too liberal, increasing the risk of a false positive (Type I error). Always check Mauchly's test, keeping in mind that it has low power in small samples.
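The Greenhouse-Geisser correction multiplies both degrees of freedom of the within-subject F-test by an estimate $\hat{ε}$ computed from the sample covariance matrix of the repeated measures. Below is a minimal pure-Python sketch, assuming a one-group design and a symmetric $k \times k$ covariance matrix as input; the function names are illustrative. It uses the double-centred covariance matrix $S_c$ and $\hat{ε} = (\operatorname{tr} S_c)^2 / [(k-1)\operatorname{tr}(S_c^2)]$, which equals 1 under sphericity and cannot fall below $1/(k-1)$. The Huynh-Feldt variant is not shown.

```python
def greenhouse_geisser_epsilon(cov):
    """Greenhouse-Geisser epsilon from a symmetric k x k covariance matrix."""
    k = len(cov)
    if k < 2:
        raise ValueError("need at least two repeated measures")
    row_means = [sum(row) / k for row in cov]  # equal to column means (symmetric input)
    grand_mean = sum(row_means) / k
    # Double-centring removes row and column means, leaving the contrast space
    centred = [[cov[i][j] - row_means[i] - row_means[j] + grand_mean
                for j in range(k)] for i in range(k)]
    trace = sum(centred[i][i] for i in range(k))
    # For a symmetric matrix, tr(A @ A) equals the sum of its squared entries
    trace_sq = sum(x * x for row in centred for x in row)
    return trace ** 2 / ((k - 1) * trace_sq)


def corrected_df(eps, k, n):
    """Within-subject F-test degrees of freedom after the GG adjustment (n subjects)."""
    return eps * (k - 1), eps * (k - 1) * (n - 1)


compound_symmetric = [[2, 1, 1], [1, 2, 1], [1, 1, 2]]  # sphericity holds
unequal = [[1, 0, 0], [0, 2, 0], [0, 0, 3]]             # sphericity violated
print(round(greenhouse_geisser_epsilon(compound_symmetric), 3))  # 1.0
print(round(greenhouse_geisser_epsilon(unequal), 3))             # 0.923
```

With $\hat{ε} \approx 0.923$, $k = 3$ and 20 subjects, the test would use about 1.85 and 35.1 degrees of freedom instead of 2 and 38.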

High‑Yield Points - ⚡ Biggest Takeaways

- Repeated measures designs boost statistical power by removing between-subject variability from the error term.

- Each participant acts as their own control, which separates the intervention's effect from stable individual differences.

- This design typically requires fewer subjects than independent group studies for equivalent power.

- Power is strongly influenced by the correlation between measurements; for within-subject effects, higher correlation increases power.

- The number of repeated measurements also impacts power; more measurements can increase it.

- Violations of sphericity can inflate Type I error rates, requiring statistical corrections.
