The P-Value Problem - Heresy or Hype?

Core Issues with P-values:

- The p < 0.05 threshold is arbitrary, and chasing it encourages "p-hacking": trying multiple analyses, outcomes, subgroups, or stopping points until one reaches significance.

- P-values don't measure effect size or clinical importance; with a large enough sample, even a tiny, trivial effect can be statistically significant.

- A non-significant result (e.g., p = 0.06) doesn't prove the null hypothesis is true.
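
To make the sample-size point concrete, here is a minimal Python sketch. It assumes a two-sample z-test with a known, common standard deviation (a simplification; real analyses would usually use a t-test), and the function name `two_sample_z_p` is hypothetical. The same tiny difference of 0.02 SD is tested at two sample sizes.

```python
import math

def two_sample_z_p(mean_diff: float, sd: float, n_per_group: int) -> float:
    """Two-sided p-value for a difference in means (z-test, known common SD assumed)."""
    se = sd * math.sqrt(2.0 / n_per_group)  # standard error of the difference in means
    z = mean_diff / se
    # P(|Z| >= |z|) for a standard normal Z equals erfc(|z| / sqrt(2))
    return math.erfc(abs(z) / math.sqrt(2.0))

# The same trivial effect (0.02 SD, i.e. Cohen's d = 0.02) at two sample sizes
for n in (100, 100_000):
    p = two_sample_z_p(mean_diff=0.02, sd=1.0, n_per_group=n)
    print(f"n per group = {n:>7}: p = {p:.2g}")
```

The effect is identical in both runs; only the sample size changes, taking p from roughly 0.89 to well below 0.001.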

Key Alternatives & Complements:

- Confidence Intervals (CIs): Provide a range of plausible values for the true effect size, giving a better sense of clinical meaning.

- Effect Sizes (e.g., Cohen's d, odds ratio): Directly quantify the magnitude of an observed effect.

- Bayesian Statistics: Update the probability of a hypothesis as new evidence becomes available.

⭐ The American Statistical Association (ASA), in its 2016 statement on p-values, advises against basing scientific conclusions or policy decisions solely on whether a p-value crosses a specific threshold. Context and effect size are critical.

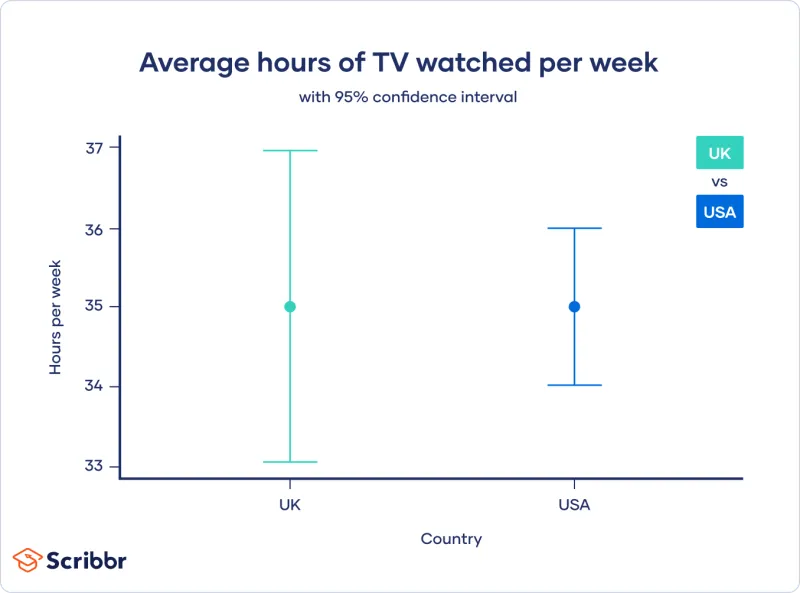

Confidence Intervals - The Better Story

CIs provide a more complete picture than a p-value by estimating a range of plausible values for the true population effect.

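What It Is: A range of values, computed from the sample, that is likely to contain the true population parameter. Strictly, "95%" describes the method rather than any single interval: if the study were repeated many times, about 95% of the intervals built this way would contain the true value.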
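
The repeated-sampling meaning of "95%" can be checked by simulation. This Python sketch assumes normally distributed data with a known SD and a z-based interval; the function names and parameter values (true mean 50, SD 10, n = 30) are hypothetical choices for illustration.

```python
import math
import random

def interval_covers(true_mean: float, sd: float, n: int, rng: random.Random, z: float = 1.96) -> bool:
    """Draw one sample, build a z-based 95% CI (SD assumed known), and check whether it contains the true mean."""
    sample = [rng.gauss(true_mean, sd) for _ in range(n)]
    mean = sum(sample) / n
    half_width = z * sd / math.sqrt(n)
    return mean - half_width <= true_mean <= mean + half_width

def coverage(true_mean: float = 50.0, sd: float = 10.0, n: int = 30, reps: int = 2000, seed: int = 1) -> float:
    """Fraction of repeated-sample intervals that contain the true mean."""
    rng = random.Random(seed)
    hits = sum(interval_covers(true_mean, sd, n, rng) for _ in range(reps))
    return hits / reps

print(f"Share of 95% CIs containing the true mean: {coverage():.3f}")
```

Each individual interval either contains the true mean or does not; the 95% only emerges across many repetitions.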

Precision & Effect Size:

- Narrow CI → High precision (more certain estimate).

- Wide CI → Low precision (less certain, often from small samples).

- The CI's position (relative to the null value and to a clinically meaningful difference) and its width help assess both statistical and clinical significance.
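
The link between sample size and precision is simple arithmetic for a mean. This sketch assumes a z-based 95% CI with a known SD of 10; the function name `ci_half_width` is hypothetical.

```python
import math

def ci_half_width(sd: float, n: int, z: float = 1.96) -> float:
    """Half-width of a z-based 95% CI for a mean, assuming a known SD."""
    return z * sd / math.sqrt(n)

# Same spread (SD = 10), different sample sizes
for n in (25, 100, 400):
    print(f"n = {n:>3}: 95% CI = sample mean +/- {ci_half_width(10.0, n):.2f}")
```

Because the width scales with 1/sqrt(n), quadrupling the sample size halves the interval (here from ±3.92 to ±1.96 to ±0.98).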

⭐ If a 95% CI for a mean difference includes 0, or a 95% CI for a ratio (e.g., RR, OR) includes 1, the result is not statistically significant at the two-sided p < 0.05 level; if it excludes that null value, the result is significant.
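
The CI/p-value correspondence for a ratio can be seen with an odds ratio. This Python sketch uses the Wald (Woolf) interval on the log-odds scale and a z-test with the same standard error, so the two agree by construction; other methods (e.g., exact tests) can occasionally disagree near the boundary. The function name and the 2x2 counts are hypothetical.

```python
import math
from statistics import NormalDist

Z_975 = NormalDist().inv_cdf(0.975)  # about 1.96

def odds_ratio_ci(a: int, b: int, c: int, d: int):
    """Wald (Woolf) 95% CI and two-sided p-value for an odds ratio.

    Table layout (assumed): a = exposed with event, b = exposed without,
                            c = unexposed with event, d = unexposed without.
    """
    log_or = math.log((a * d) / (b * c))
    se = math.sqrt(1 / a + 1 / b + 1 / c + 1 / d)  # SE of log(OR)
    lower = math.exp(log_or - Z_975 * se)
    upper = math.exp(log_or + Z_975 * se)
    z = log_or / se
    p = 2 * (1 - NormalDist().cdf(abs(z)))
    return math.exp(log_or), lower, upper, p

# Same OR (2.25) at two sample sizes
for table in [(20, 80, 10, 90), (40, 160, 20, 180)]:
    or_, lo, hi, p = odds_ratio_ci(*table)
    print(f"OR = {or_:.2f}, 95% CI {lo:.2f} to {hi:.2f}, p = {p:.3f}")
```

For the smaller table the interval just includes 1 and p is just above 0.05; doubling every count leaves the OR at 2.25 but narrows the interval so that it excludes 1, and p drops below 0.05.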

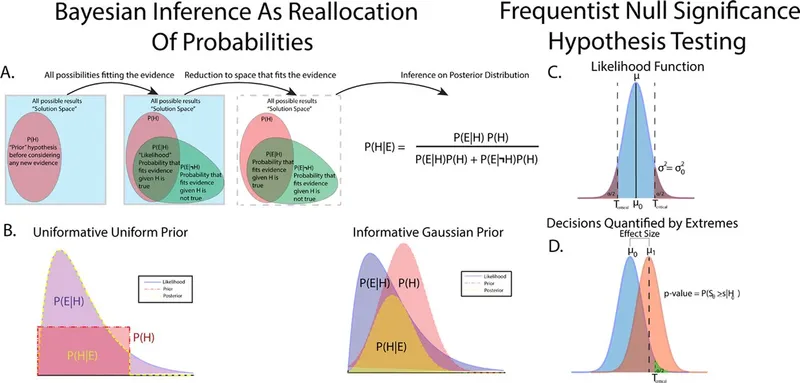

Bayesian Alternatives - A Different Logic

- Shifts focus from the question a p-value answers, $P(\text{data at least as extreme} \mid H_0)$, to the more intuitive question: $P(\text{hypothesis} \mid \text{data})$.

- Combines prior beliefs ($P(H)$) with the study data (the likelihood, $P(D|H)$) to produce an updated posterior probability, $P(H|D)$.

- Bayes' Theorem: $P(H|D) = \frac{P(D|H) \times P(H)}{P(D)}$

- Interpretation: Avoids the binary "significant/non-significant" threshold.

- Results are often given as a Bayes Factor (BF), which quantifies the strength of evidence for one hypothesis over another.

- A Bayes factor of 5 for the alternative over the null ($BF_{10} = 5$) means the observed data are 5 times more likely under the alternative hypothesis than under the null.
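
The two formulas above can be worked through numerically. This Python sketch applies Bayes' theorem to a hypothesis and its complement, and uses the standard identity posterior odds = BF × prior odds; the function names and all probabilities are hypothetical illustration values.

```python
def posterior(prior_h: float, p_d_given_h: float, p_d_given_not_h: float) -> float:
    """P(H | D) by Bayes' theorem for a hypothesis H and its complement."""
    # Denominator P(D) via the law of total probability
    p_d = p_d_given_h * prior_h + p_d_given_not_h * (1 - prior_h)
    return p_d_given_h * prior_h / p_d

def posterior_from_bf(prior_h: float, bf10: float) -> float:
    """Posterior probability of H1 from its prior probability and a Bayes factor BF10."""
    prior_odds = prior_h / (1 - prior_h)
    posterior_odds = bf10 * prior_odds  # posterior odds = BF x prior odds
    return posterior_odds / (1 + posterior_odds)

print(posterior(0.10, 0.80, 0.05))   # prior 10%, P(D|H) = 0.80, P(D|not H) = 0.05
print(posterior_from_bf(0.50, 5.0))  # even prior odds
print(posterior_from_bf(0.10, 5.0))  # sceptical 10% prior
```

With the hypothetical likelihoods above, a 10% prior becomes a posterior of 0.64. The same BF of 5 yields a posterior of about 0.83 from even prior odds but only about 0.36 from a 10% prior, which is why Bayesian reports usually state the prior they used.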

⭐ A key advantage is the ability to quantify evidence for the null hypothesis (e.g., supporting no effect), which a p-value can never do; a p-value can only fail to reject the null.
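
A small example of evidence for the null: testing whether a coin-like outcome has probability 0.5. This Python sketch assumes H0: θ = 0.5 versus H1: θ ~ Uniform(0, 1); the uniform prior is an assumption, and the Bayes factor depends on that choice. The function name `bf01_binomial` is hypothetical.

```python
import math

def bf01_binomial(k: int, n: int) -> float:
    """BF01 for H0: theta = 0.5 versus H1: theta ~ Uniform(0, 1), given k successes in n trials."""
    like_h0 = math.comb(n, k) * 0.5 ** n  # P(data | H0)
    # P(data | H1): the binomial likelihood averaged over a uniform prior integrates to 1 / (n + 1)
    like_h1 = 1.0 / (n + 1)
    return like_h0 / like_h1

for k in (50, 70):
    print(f"{k}/100 successes: BF01 = {bf01_binomial(k, 100):.3g}")
```

With 50 successes in 100 trials, BF01 is about 8: the data are roughly 8 times more likely under the null, which is positive evidence for "no effect" rather than a mere failure to reject. With 70 successes, BF01 falls far below 1, favoring the alternative.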

Effect Sizes & Pre-Registration - Practical Steps

Effect Size: Measures the magnitude of an effect, moving beyond the p-value's binary significant/non-significant verdict. It is the starting point for judging clinical importance, which also depends on clinical context.

- Examples: Cohen's d, odds ratio (OR), relative risk (RR).

- A small p-value with a small effect size may be statistically significant but clinically meaningless.
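
The three effect sizes listed above can be computed directly. This Python sketch uses Cohen's d with a pooled SD, and the RR and OR from a 2x2 table; the function names, the table layout, and all numbers are hypothetical.

```python
import math

def cohens_d(mean1: float, sd1: float, n1: int, mean2: float, sd2: float, n2: int) -> float:
    """Standardized mean difference using the pooled standard deviation."""
    pooled_var = ((n1 - 1) * sd1**2 + (n2 - 1) * sd2**2) / (n1 + n2 - 2)
    return (mean1 - mean2) / math.sqrt(pooled_var)

def risk_ratio(a: int, b: int, c: int, d: int) -> float:
    """RR; a/b = exposed with/without event, c/d = unexposed with/without event."""
    return (a / (a + b)) / (c / (c + d))

def odds_ratio(a: int, b: int, c: int, d: int) -> float:
    """OR for the same 2x2 layout: (a*d) / (b*c)."""
    return (a * d) / (b * c)

print(cohens_d(10.0, 2.0, 50, 9.0, 2.0, 50))
print(risk_ratio(20, 80, 10, 90))
print(odds_ratio(20, 80, 10, 90))
```

Here d = 0.5, RR = 2.0 and OR = 2.25. The OR and RR diverge because the outcome is fairly common (10 to 20%); they are close only when the outcome is rare.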

Pre-registration: Publicly registering your study's hypothesis and analysis plan before data collection.

- Goal: Increase transparency and combat publication bias and p-hacking.

⭐ Pre-registering a study protocol on platforms like ClinicalTrials.gov is often a prerequisite for publication in major medical journals, enhancing research credibility.
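
Why undisclosed analytic flexibility matters can be shown with simple arithmetic. This Python sketch assumes k independent tests, each at α = 0.05, with every null hypothesis true; it computes the chance of at least one false positive, 1 - (1 - α)^k, and checks it by simulation. The function names are hypothetical.

```python
import random

def familywise_error(alpha: float, k: int) -> float:
    """P(at least one false positive) across k independent tests when every null is true."""
    return 1 - (1 - alpha) ** k

def simulate_fwer(alpha: float = 0.05, k: int = 20, reps: int = 10_000, seed: int = 0) -> float:
    """Monte Carlo check: under a true null, a valid p-value is Uniform(0, 1)."""
    rng = random.Random(seed)
    hits = sum(any(rng.random() < alpha for _ in range(k)) for _ in range(reps))
    return hits / reps

for k in (1, 5, 20):
    print(f"k = {k:>2}: P(at least one p < 0.05) = {familywise_error(0.05, k):.3f}")
print(f"simulated, k = 20: {simulate_fwer():.3f}")
```

With 20 outcomes, the chance of at least one spurious "significant" finding is about 64%, which is the inflation that a pre-specified primary outcome is meant to prevent.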

High-Yield Points - ⚡ Biggest Takeaways

- P-values are often misinterpreted as the probability that the null hypothesis is true; they are actually calculated assuming the null is true.

- They don't measure the effect size or clinical importance.

- A non-significant p-value isn't proof of the null hypothesis; it could simply reflect low power.

- P-hacking (data dredging) leads to spurious findings and an inflated Type I error rate.

- Confidence intervals are preferred; they convey both the effect size and its precision.

- Bayesian methods offer an alternative by directly estimating the probability of a hypothesis given the data.
