P-Value Problems - The Frequentist's Flaws

- Arbitrary Cutoff: Relies on a fixed threshold (e.g., p < 0.05), a convention, not a constant of nature.

- Ignores Effect Size: A tiny, clinically irrelevant effect can be statistically significant with a large sample size.

- Prone to "P-hacking": Manipulating data or statistical tests to achieve a desired significant p-value.

⭐ A p-value is the probability of observing the current data (or more extreme) if the null hypothesis were true. It is NOT the probability of the null hypothesis being true.
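The effect-size problem can be made concrete with a small sketch. It assumes a two-sample z-test with a known standard deviation, and the blood-pressure numbers (a 0.5 mmHg difference, SD of 10 mmHg) are hypothetical values chosen only for illustration. The function name `two_sided_z_p_value` is likewise made up for this example.

```python
import math

def two_sided_z_p_value(mean_diff, sd, n_per_group):
    """Two-sided p-value for a two-sample z-test (equal group sizes, known SD)."""
    standard_error = sd * math.sqrt(2.0 / n_per_group)
    z = mean_diff / standard_error
    # P(|Z| >= |z|) under the null, via the complementary error function.
    return math.erfc(abs(z) / math.sqrt(2.0))

# Hypothetical scenario: the same tiny 0.5 mmHg difference, two sample sizes.
small_trial = two_sided_z_p_value(mean_diff=0.5, sd=10.0, n_per_group=100)
huge_trial = two_sided_z_p_value(mean_diff=0.5, sd=10.0, n_per_group=100_000)

print(f"n = 100 per group:     p = {small_trial:.3f}")
print(f"n = 100,000 per group: p = {huge_trial:.2e}")
```

The effect is identical in both runs; only the sample size changes. The small trial is far from significant, while the huge trial falls well below 0.05, even though a 0.5 mmHg difference would rarely matter clinically.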

Bayesian Basics - Updating Your Beliefs

- Core Idea: Updates prior beliefs (prior probability) with new evidence (likelihood) to form an updated belief (posterior probability). It asks: "How likely is my hypothesis, given the data?"

- Bayes' Theorem: $P(\text{Hypothesis}|\text{Data}) = \frac{P(\text{Data}|\text{Hypothesis}) \times P(\text{Hypothesis})}{P(\text{Data})}$

- Prior: Your belief before seeing the data.

- Likelihood: The evidence from your study, i.e., how probable the observed data are if the hypothesis is true.

- Posterior: Your updated belief.

- Contrasts with frequentist methods, which don't incorporate prior beliefs.

⭐ High-Yield: The choice of the prior can significantly influence the posterior probability. A strong prior requires more compelling evidence to shift the belief.
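A minimal sketch of how the prior drives the posterior, using a diagnostic test as the example. The 90% sensitivity and specificity and the three prior (pre-test) probabilities are assumed values for illustration, and `posterior_probability` is a hypothetical helper, not part of any library.

```python
def posterior_probability(prior, sensitivity, specificity):
    """P(disease | positive test) via Bayes' theorem."""
    p_pos_given_disease = sensitivity          # likelihood under the hypothesis
    p_pos_given_healthy = 1.0 - specificity    # false-positive rate
    # P(Data): total probability of a positive test.
    p_pos = p_pos_given_disease * prior + p_pos_given_healthy * (1.0 - prior)
    return p_pos_given_disease * prior / p_pos

# Same test result, three different priors (assumed pre-test probabilities).
for prior in (0.01, 0.10, 0.50):
    post = posterior_probability(prior, sensitivity=0.90, specificity=0.90)
    print(f"prior = {prior:.2f} -> posterior = {post:.3f}")
```

With the same positive result, a 1% prior leaves the posterior under 10%, whereas a 50% prior pushes it to 90%. The evidence is identical; the starting belief is what differs.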

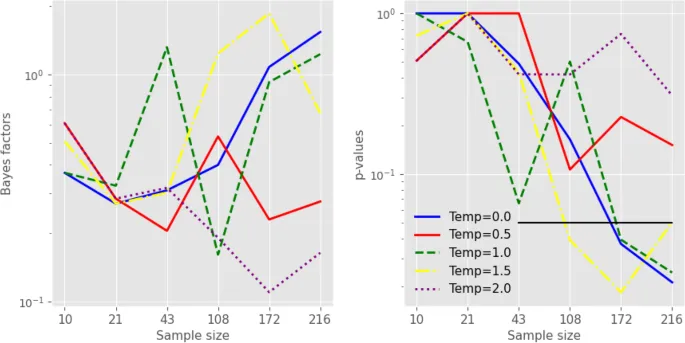

Bayes Factor - The Evidence Scale

- The Bayes Factor (BF) quantifies how much more likely the data are under one hypothesis compared to another. It directly compares the evidence for the alternative hypothesis (H₁) versus the null hypothesis (H₀).

- Formula: $BF_{10} = \frac{P(\text{Data}|H_1)}{P(\text{Data}|H_0)}$

| Bayes Factor (BF₁₀) | Strength of Evidence |
| :--- | :--- |
| > 100 | Extreme evidence for H₁ |
| 30 - 100 | Very Strong evidence for H₁ |
| 10 - 30 | Strong evidence for H₁ |
| 3 - 10 | Moderate evidence for H₁ |
| 1 - 3 | Anecdotal evidence for H₁ |

⭐ Unlike p-values, a BF < 1 (e.g., 1/10) provides evidence for the null hypothesis, suggesting the data are more probable under H₀.
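As a rough illustration of the formula, the sketch below computes BF₁₀ for binomial data (e.g., responders out of a number of patients). It assumes H₀ fixes the success probability at 0.5 and H₁ places a uniform prior on it; under that assumed prior, the marginal likelihood of any count is 1 / (trials + 1). The function names and example counts are hypothetical, and the labels follow the scale in the table above.

```python
import math

def bayes_factor_10(successes, trials, null_p=0.5):
    """BF10 for H1: p ~ Uniform(0, 1) versus H0: p = null_p, with binomial data."""
    # Under a uniform prior, every count 0..trials is equally likely a priori.
    marginal_h1 = 1.0 / (trials + 1)
    likelihood_h0 = (math.comb(trials, successes)
                     * null_p ** successes
                     * (1.0 - null_p) ** (trials - successes))
    return marginal_h1 / likelihood_h0

def evidence_label(bf10):
    """Map BF10 onto the evidence scale from the table."""
    if bf10 > 100:
        return "Extreme evidence for H1"
    if bf10 > 30:
        return "Very strong evidence for H1"
    if bf10 > 10:
        return "Strong evidence for H1"
    if bf10 > 3:
        return "Moderate evidence for H1"
    if bf10 > 1:
        return "Anecdotal evidence for H1"
    return "Data do not favour H1 (BF10 <= 1)"

# Hypothetical counts out of 100 trials.
for successes in (50, 70):
    bf = bayes_factor_10(successes, trials=100)
    print(f"{successes}/100 -> BF10 = {bf:.3g}: {evidence_label(bf)}")
```

A 50/100 split gives a BF₁₀ below 1, so the data are more probable under H₀, while 70/100 gives a BF₁₀ well above 100. A different prior under H₁ would give different numbers, which is why the prior should always be reported alongside the Bayes factor.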

Credible Intervals - A More Believable Range

- A Bayesian alternative to the frequentist confidence interval (CI).

- Gives a range of values within which the true parameter lies with a stated probability, given the data and prior beliefs.

- Derived from the posterior probability distribution (prior beliefs + data likelihood).

- For a 95% credible interval, one can state there is a 95% probability that the true value of the parameter is within this interval.

⭐ The key advantage is its direct, intuitive interpretation: it means what many people mistakenly believe a frequentist confidence interval means.
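One way to see where a credible interval comes from is to build the posterior on a grid and read off its quantiles. The sketch below assumes a uniform prior on a proportion and binomial data; the counts (14 responders out of 20) are hypothetical, and `credible_interval` is an illustrative helper rather than a library function.

```python
def credible_interval(successes, trials, mass=0.95, grid_size=10_001):
    """Equal-tailed credible interval for a proportion (uniform prior, grid approximation)."""
    grid = [i / (grid_size - 1) for i in range(grid_size)]
    # Unnormalised posterior: uniform prior times binomial likelihood.
    weights = [p ** successes * (1.0 - p) ** (trials - successes) for p in grid]
    total = sum(weights)
    lower_tail = (1.0 - mass) / 2.0
    upper_tail = 1.0 - lower_tail
    cumulative = 0.0
    lower = upper = None
    for p, w in zip(grid, weights):
        cumulative += w / total
        if lower is None and cumulative >= lower_tail:
            lower = p   # first grid point past the lower tail
        if cumulative >= upper_tail:
            upper = p   # first grid point past the upper tail
            break
    return lower, upper

# Hypothetical data: 14 responders out of 20 patients.
low, high = credible_interval(successes=14, trials=20)
print(f"95% credible interval for the response rate: {low:.2f} to {high:.2f}")
```

Given this prior and these data, the statement "there is a 95% probability that the response rate lies in this range" is a direct reading of the posterior. An informative prior would shift or narrow the interval.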

Frequentist vs. Bayesian - The Final Showdown

| Feature | Frequentist Approach | Bayesian Approach |
|---|---|---|
| Core Idea | Probability as long-run frequency. Parameters are fixed constants. | Probability as a degree of belief. Parameters are random variables. |
| Key Metric | P-value: Pr(data at least as extreme as observed, given H₀ true). If p < 0.05, reject H₀. | Bayes Factor (BF): Compares evidence for H₁ vs. H₀. |
| Interval | 95% Confidence Interval: Intervals built this way capture the true parameter in 95% of repeated samples. | 95% Credible Interval: 95% probability the true parameter lies in this range. |
| Input | Data only. | Data + Prior belief. |
| Output | P-values & CIs. | Posterior probability distributions. |

High‑Yield Points - ⚡ Biggest Takeaways

- Bayesian inference updates prior probability with new data to create a posterior probability.

- The Bayes factor (BF) is the ratio of the likelihoods of the data under two competing hypotheses; it quantifies evidence.

- A BF₁₀ > 1 indicates evidence for the alternative hypothesis; a BF₁₀ < 1 supports the null hypothesis.

- Unlike p-values, Bayes factors can provide direct evidence for the null hypothesis, not just a failure to reject it.

- This avoids the common fallacy of treating non-significance as proof of no effect.
