Minimally Important Difference - Clinically Cool

- Definition: The smallest change in a treatment outcome that an individual patient would identify as important or beneficial, justifying a change in management.

- Purpose: Bridges the gap between statistical significance (p-value) and clinical relevance. A statistically significant finding might not be clinically meaningful if it fails to meet the MID.

- Key Contrast:

- Statistical Significance: Is the observed effect unlikely to be due to chance alone? (p-value)

- Clinical Importance (MID): Is the effect meaningful to patients?

- Determination Methods:

- Anchor-based: Preferred method. Correlates changes in an outcome measure (e.g., pain score) with a patient's own global rating of improvement.

- Distribution-based: Uses statistical properties, e.g., defining the MID as 0.5 times the baseline standard deviation of the outcome score.

⭐ A trial might find a new drug significantly lowers cholesterol (p < 0.05), but if the average drop is only 2 mg/dL, it likely falls below the MID and is clinically trivial.
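As a rough illustration of the distribution-based method described above, the sketch below computes an MID as 0.5 times the baseline standard deviation. The function name `distribution_based_mid` and the baseline pain scores are hypothetical and chosen only for illustration; using the sample (n - 1) standard deviation is also an assumption.

```python
from statistics import stdev


def distribution_based_mid(baseline_scores, fraction=0.5):
    """Return fraction * sample SD of the baseline scores.

    Hypothetical helper: the 0.5 multiplier follows the rule of thumb
    quoted in the notes; the sample (n - 1) SD is an assumption.
    """
    return fraction * stdev(baseline_scores)


# Made-up baseline pain scores on a 0-10 scale, for illustration only.
baseline = [2, 4, 4, 4, 5, 5, 7, 9]
mid = distribution_based_mid(baseline)

observed_change = 0.6  # hypothetical mean improvement seen in a trial
print(f"MID = {mid:.2f}")  # roughly 1.07
print("Meets MID" if observed_change >= mid else "Below MID")
```

With these made-up numbers, an average improvement of 0.6 points would fall below the MID even if its p-value were small.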

Sample Size Calculation - Power Trip

Calculating the necessary sample size is a critical first step in study design to ensure a trial is appropriately powered to detect a meaningful effect. The goal is to have a large enough sample to find a true difference if one exists, but not so large as to be wasteful of resources.

- Key Determinants of Sample Size (n):

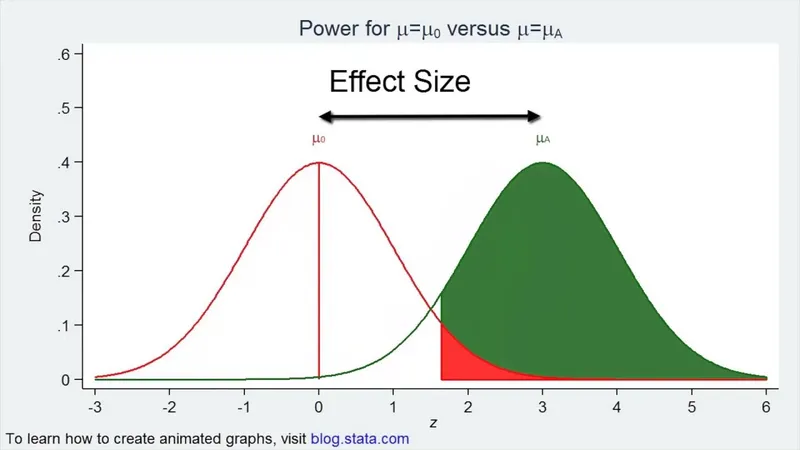

- Power (1-β): The probability of detecting a true effect. Higher desired power (e.g., 90% vs. 80%) requires a ↑ sample size.

- Significance Level (α): The probability of a Type I error. A stricter (lower) α (e.g., 0.01 vs. 0.05) requires a ↑ sample size.

- Standard Deviation (σ): Higher data variability requires a ↑ sample size to detect a true signal.

- Minimal Clinically Important Difference (δ): The smallest effect size of interest. Detecting a smaller, more subtle difference requires a ↑ sample size.

⭐ To detect an effect size (δ) that is half as large, with α, power, and σ held constant, you must quadruple (4x) the required sample size. This is because sample size is inversely proportional to the square of the effect size ($n \propto 1/\delta^2$).
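The four determinants can be tied together in a small sketch. It assumes one common textbook setting that the notes do not specify: a two-arm trial with equal allocation, a continuous outcome, a two-sided test, and a normal approximation, where the per-group size is $n = 2(z_{1-\alpha/2} + z_{1-\beta})^2 \sigma^2 / \delta^2$. The function name `n_per_group` and the example values are hypothetical.

```python
from math import ceil
from statistics import NormalDist


def n_per_group(delta, sigma, alpha=0.05, power=0.80):
    """Approximate per-group sample size for comparing two means.

    Assumes equal group sizes, a two-sided test, a common SD (sigma),
    and the normal approximation; other designs need other formulas.
    Returns the unrounded value; round up before use.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for alpha
    z_beta = NormalDist().inv_cdf(power)           # critical value for power
    return 2 * ((z_alpha + z_beta) * sigma / delta) ** 2


# Hypothetical inputs: SD of 20 units, difference of interest 10 vs. 5 units.
n_full = n_per_group(delta=10, sigma=20)
n_half = n_per_group(delta=5, sigma=20)

print(ceil(n_full))     # 63 per group
print(ceil(n_half))     # 252 per group
print(n_half / n_full)  # ratio of 4 (up to floating-point rounding)
```

Raising `power` to 0.90 or tightening `alpha` to 0.01 in the same function also increases the result, matching the bullets above.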

📌 Mnemonic: For more POWER, you need a bigger sample size.

Determining MID - Anchor Management

- Anchor-based methods are a primary way to define a clinically meaningful Minimally Important Difference (MID).

- This technique correlates changes in a Patient-Reported Outcome (PRO) score with an external, easily interpretable "anchor" question.

- The Anchor: A global rating of change.

- Example: "Overall, how has your condition changed?"

- Responses are categorical (e.g., "Slightly better," "No change").

- Process: The mean change in the PRO score for the group that reports a "minimally important" improvement on the anchor is defined as the MID.
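The anchor-based process can be sketched in a few lines. The data, the function name `anchor_based_mid`, and the choice of "Slightly better" as the minimally important category are all hypothetical; a real analysis would use the study's own anchor wording and far more patients.

```python
from statistics import mean


def anchor_based_mid(records, minimal_category="Slightly better"):
    """Mean PRO change among patients in the minimal-improvement category.

    records: iterable of (anchor_response, pro_change) pairs.
    Hypothetical helper; the category label is an assumption.
    """
    changes = [change for anchor, change in records if anchor == minimal_category]
    if not changes:
        raise ValueError("no patients reported the minimal-improvement category")
    return mean(changes)


# Made-up (anchor response, change in PRO score) pairs, for illustration only.
records = [
    ("No change", 0.5),
    ("Slightly better", 2.0),
    ("Slightly better", 3.0),
    ("Much better", 6.0),
    ("No change", -0.5),
    ("Slightly better", 2.5),
]

mid = anchor_based_mid(records)
print(mid)  # 2.5
```

Only the three "Slightly better" patients contribute, so the MID here is the mean of 2.0, 3.0, and 2.5.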

⭐ High-Yield: The anchor must correlate well with the PRO measure but be conceptually distinct. A poor anchor might ask about a specific symptom already included in the PRO, leading to circular logic.

High‑Yield Points - ⚡ Biggest Takeaways

- The Minimal Clinically Important Difference (MCID), also called the Minimally Important Difference (MID), is the smallest effect that a patient perceives as beneficial, guiding interpretation of clinical relevance.

- It bridges the gap between statistical significance (p-value) and practical importance to patients.

- A result is clearly clinically meaningful when the confidence interval for the effect lies entirely above the MCID; if the interval straddles the MCID, clinical importance remains uncertain.

- Used prospectively in sample size calculation; a smaller MCID demands a larger sample size.

- It is an anchor-based or distribution-based value, not an arbitrary statistical cutoff.
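One way to picture the confidence-interval rule above is a small classifier. It is only a sketch: it assumes the effect is coded so that larger positive values mean more benefit and that the MCID is positive. The function name `interpret_effect`, the wording of the categories other than "entirely above the MCID", and the numbers are hypothetical.

```python
def interpret_effect(ci_low, ci_high, mcid):
    """Classify a confidence interval for a treatment effect against the MCID.

    Assumes positive values indicate benefit and mcid > 0.
    The labels for the non-meaningful cases are illustrative assumptions.
    """
    if ci_low > mcid:
        return "clinically meaningful"
    if ci_low > 0 and ci_high < mcid:
        return "statistically significant but below the MCID"
    if ci_low > 0:
        return "statistically significant, clinical importance uncertain"
    return "not statistically significant"


# Hypothetical cholesterol example: mean drop of 2 mg/dL, 95% CI 0.5 to 3.5,
# judged against an assumed MCID of 10 mg/dL.
print(interpret_effect(0.5, 3.5, mcid=10))
# statistically significant but below the MCID
```

An interval such as 12 to 20 against the same assumed MCID would be labeled "clinically meaningful", while 4 to 15 would be flagged as uncertain.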
