Inferential Statistics - The Hypothesis Game

- Hypothesis Testing: Aims to determine if there's enough evidence to reject a null hypothesis ($H_0$).

- Null Hypothesis ($H_0$): States no association or difference (e.g., new drug = placebo).

- Alternative Hypothesis ($H_a$): States an association or difference exists.

- p-value: Probability of obtaining results at least as extreme as those observed, assuming $H_0$ is true. If p ≤ $\alpha$ (usually 0.05), results are statistically significant.

- Confidence Interval (CI): Range of plausible values for a population parameter, estimated from the sample. A 95% CI that excludes the null value (0 for a mean difference, 1 for RR/OR) is statistically significant at $\alpha$ = 0.05.

⭐ A p-value < 0.05 is significant, but a CI provides more info: the effect size's range and precision.
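To see how a p-value and a CI relate, here is a minimal sketch that computes both for a risk ratio (RR). The trial counts are hypothetical, the function name `risk_ratio_summary` is made up for illustration, and the CI uses the common log-RR normal approximation, which is only one of several possible methods.

```python
from math import exp, log, sqrt
from statistics import NormalDist


def risk_ratio_summary(events_treated, n_treated, events_control, n_control, alpha=0.05):
    """Return (RR, CI low, CI high, two-sided p-value) via the log-RR normal approximation."""
    rr = (events_treated / n_treated) / (events_control / n_control)
    # Standard error of ln(RR) for two independent groups
    se_log_rr = sqrt(1 / events_treated - 1 / n_treated
                     + 1 / events_control - 1 / n_control)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 when alpha = 0.05
    ci_low = exp(log(rr) - z_crit * se_log_rr)
    ci_high = exp(log(rr) + z_crit * se_log_rr)
    # Under H0 the RR is 1, so ln(RR) is 0
    z = log(rr) / se_log_rr
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return rr, ci_low, ci_high, p_value


# Hypothetical trial: 15/100 events on the new drug, 30/100 on placebo
rr, ci_low, ci_high, p_value = risk_ratio_summary(15, 100, 30, 100)
print(f"RR = {rr:.2f}, 95% CI {ci_low:.2f} to {ci_high:.2f}, p = {p_value:.3f}")
```

With these made-up counts the 95% CI lies entirely below 1 and the p-value is below 0.05, so both approaches agree on significance; the CI additionally shows how large or small the effect could plausibly be.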

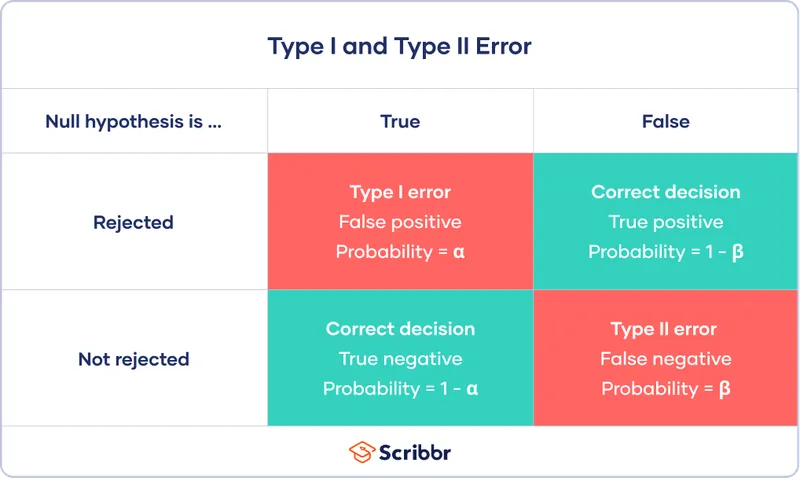

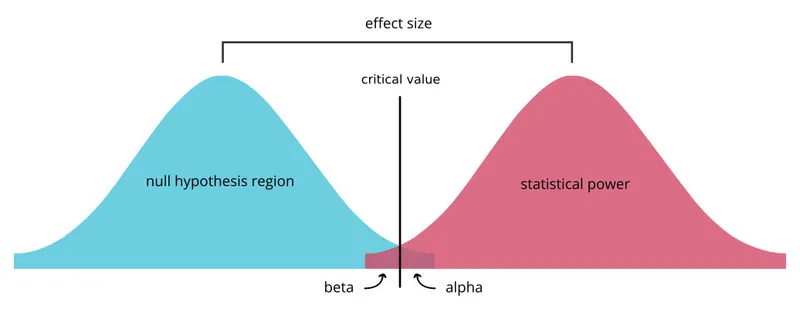

- Errors & Power:

- Type I Error ($\alpha$): False positive. Rejecting a true $H_0$. 📌 Stating a difference exists when it doesn't.

- Type II Error ($\beta$): False negative. Failing to reject a false $H_0$. 📌 Missing a real difference.

- Power ($1-\beta$): Probability of detecting a true effect. Increased by ↑ sample size.

Errors & Power - Dodging Statistical Traps

- Type I Error (α): False positive. Rejecting a true null hypothesis (H₀). Its probability is α, the significance level chosen before the study, not the p-value.

- α is the risk of a Type I error you're willing to accept (e.g., 0.05).

- Type II Error (β): False negative. Failing to reject a false H₀.

- Power: Probability of detecting a true effect. Power = $1 - β$. A power of 0.80 is the conventional minimum target.

⭐ Increasing sample size (n) is the most common method to increase a study's power.
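The link between sample size and power can be sketched numerically. The example below assumes a two-sided z-test comparing two group means with a known, equal standard deviation; the function name `approx_power` and the input values are illustrative assumptions, and a real study would usually use a t-test-based calculation instead.

```python
from math import sqrt
from statistics import NormalDist


def approx_power(mean_difference, sd, n_per_group, alpha=0.05):
    """Approximate power of a two-sided, two-sample z-test with equal group sizes."""
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha / 2)
    # True difference expressed in standard-error units
    shift = abs(mean_difference) / (sd * sqrt(2 / n_per_group))
    # Probability that the test statistic falls in either rejection region
    return std_normal.cdf(shift - z_crit) + std_normal.cdf(-shift - z_crit)


# Hypothetical effect: a true difference of half a standard deviation
for n in (20, 64, 100):
    print(n, round(approx_power(0.5, 1.0, n), 3))
```

Under these assumptions, power climbs as n per group increases and passes the conventional 0.80 target at about 64 per group. Setting `mean_difference` to 0 returns α, because with no true effect every rejection is a Type I error.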

Statistical Tests - The Right Tool for the Job

- t-test: Compares means of 2 groups.

- ANOVA: Compares means of ≥3 groups.

- Chi-square ($χ^2$): Tests for an association between 2 categorical variables (e.g., compares proportions across ≥2 groups).

- Pearson Correlation (r): Measures linear association between 2 continuous variables.

⭐ ANOVA is preferred over multiple t-tests for comparing >2 groups because it reduces the cumulative risk of a Type I (α) error.
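The cumulative Type I risk from running every pairwise t-test can be estimated with a short calculation. This sketch treats the comparisons as independent, which pairwise tests on shared groups are not, so the result is an approximation; the function name `familywise_error_rate` is an illustrative choice.

```python
from math import comb


def familywise_error_rate(n_groups, alpha=0.05):
    """Approximate chance of at least one false positive across all pairwise tests."""
    n_comparisons = comb(n_groups, 2)  # number of distinct pairs of groups
    return 1 - (1 - alpha) ** n_comparisons


for k in (3, 4, 5):
    print(k, comb(k, 2), round(familywise_error_rate(k), 3))
```

With 3 groups there are 3 pairwise comparisons and the approximate risk of at least one false positive is about 14%, well above the nominal 5%. A single ANOVA F-test keeps the overall α at the chosen level.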

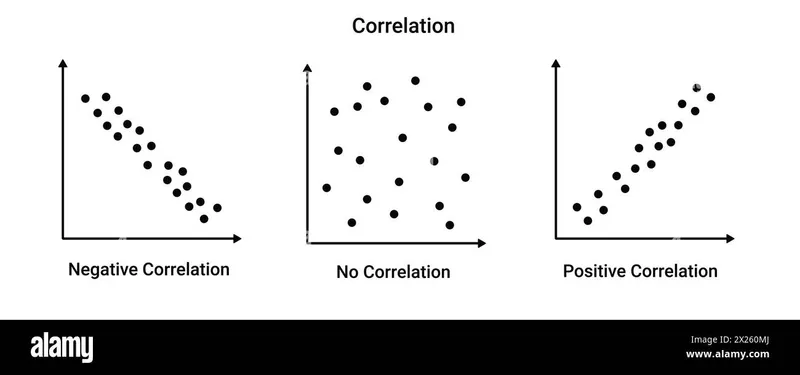

Correlation & Regression - Just How Related?

- Correlation: Measures the strength and direction of a linear relationship between two continuous variables.

- Pearson Coefficient ($r$): Value is between -1 and +1.

- +1: Perfect positive correlation.

- -1: Perfect negative correlation.

- 0: No linear correlation.

- Coefficient of Determination ($r^2$): Proportion of variance in one variable explained by the other. An $r$ of 0.7 means $r^2 = 0.49$, so 49% of the variance is shared.

- Linear Regression: Predicts a dependent variable's value based on an independent variable.

⭐ Correlation does not imply causation. A significant p-value (< 0.05) suggests the observed relationship is unlikely to be due to chance alone, but it doesn't prove that one variable causes the other.
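As a worked illustration of $r$, $r^2$, and a simple regression line, the sketch below computes all three from first principles. The data points and the helper name `correlation_and_regression` are hypothetical, chosen only to keep the arithmetic easy to follow.

```python
from math import sqrt


def correlation_and_regression(xs, ys):
    """Return (r, r_squared, slope, intercept) for paired continuous data."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sums of squares and cross-products around the means
    s_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    s_xx = sum((x - mean_x) ** 2 for x in xs)
    s_yy = sum((y - mean_y) ** 2 for y in ys)
    r = s_xy / sqrt(s_xx * s_yy)
    slope = s_xy / s_xx              # least-squares slope of y on x
    intercept = mean_y - slope * mean_x
    return r, r ** 2, slope, intercept


# Hypothetical paired measurements
xs = [1, 2, 3, 4, 5]
ys = [2, 4, 5, 4, 6]
r, r_squared, slope, intercept = correlation_and_regression(xs, ys)
print(f"r = {r:.3f}, r^2 = {r_squared:.3f}, y = {intercept:.2f} + {slope:.2f}x")
```

Here $r$ is about 0.85 and $r^2$ about 0.73, so roughly 73% of the variance in y is accounted for by its linear relationship with x. Neither number says anything about which variable, if either, drives the other.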

High‑Yield Points - ⚡ Biggest Takeaways

- p-value < 0.05 means results are statistically significant, so the null hypothesis is rejected.

- Confidence intervals are significant if they exclude 0 for mean differences or 1 for ratios (OR/RR).

- Type I error (α) is a false positive: rejecting a true null hypothesis.

- Type II error (β) is a false negative: failing to reject a false null hypothesis.

- Power (1-β) is the probability of detecting a true difference; it increases with sample size.

- T-tests compare means of 2 groups; ANOVA for ≥3 groups; chi-square for categorical data.
