Core Concepts - The Power Players

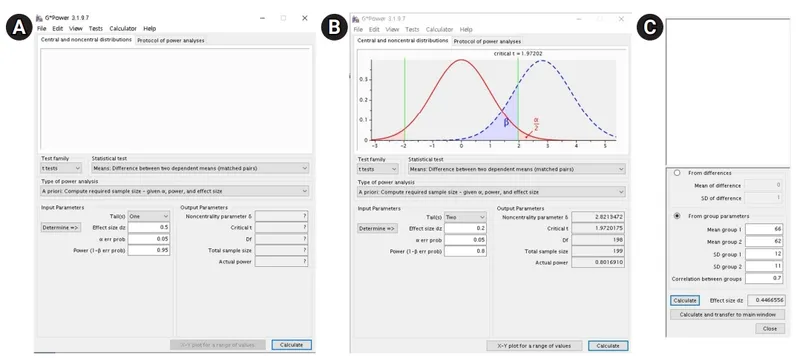

- Sample Size (n) is a function of four key variables:

- α (Alpha/Type I Error): Probability of a false positive. Conventionally set at 0.05.

- β (Beta/Type II Error): Probability of a false negative. Conventionally set at 0.20.

- Power (1-β): Probability of detecting a true effect when one exists; the complement of β. Desired level is typically ≥80%.

- Effect Size (d): Magnitude of the difference to be detected. Smaller effects need larger samples.

⭐ Halving the effect size you want to detect requires quadrupling the sample size. The relationship is an inverse square: $n \propto 1/d^2$.
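The trade-off between these variables can be checked numerically. The sketch below is a minimal illustration, not a validated calculator: it assumes a two-sided, two-sample z-test with equal group sizes, treats d as a standardized effect size (difference in standard deviation units), and ignores the negligible opposite tail. The function name `approx_power` is hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def approx_power(d, n_per_group, alpha=0.05):
    """Approximate power of a two-sided, two-sample z-test.

    Assumption: d is a standardized effect size (difference / SD)
    and both groups have n_per_group subjects.
    """
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    # Expected test statistic under the alternative, minus the critical value
    return NormalDist().cdf(d * sqrt(n_per_group / 2) - z_crit)


baseline = approx_power(0.5, 64)    # medium effect, 64 per group
halved = approx_power(0.25, 64)     # half the effect, same n: power drops
restored = approx_power(0.25, 256)  # half the effect, 4x the n: power restored

print(f"d=0.50, n=64:  power = {baseline:.2f}")
print(f"d=0.25, n=64:  power = {halved:.2f}")
print(f"d=0.25, n=256: power = {restored:.2f}")
```

Under these assumptions, the first and third scenarios give the same power because $d\sqrt{n/2}$ is unchanged when d is halved and n is quadrupled.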

Continuous Outcomes - Mean Feats

- Objective: To find the sample size needed to detect a specific difference between two means.

- Core Formula: For two independent sample means:

$$ n \approx \frac{2\sigma^2(Z_{\alpha/2} + Z_\beta)^2}{d^2} $$

- n: Sample size per group.

- d: Smallest difference in means you want to detect.

- σ: Population standard deviation (estimated from prior studies).

- $Z_{\alpha/2}$: Z-score for desired significance level (e.g., 1.96 for α = 0.05).

- $Z_\beta$: Z-score for desired power (e.g., 0.84 for 80% power).

⭐ To detect a difference half as large (d/2), you must quadruple (4x) the sample size.
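As a worked example, the two-means formula can be evaluated directly. This is a minimal sketch under the formula's own assumptions (normal approximation, equal group sizes, known σ). The function name `n_per_group_means` and the example values (σ = 10, d = 5) are hypothetical, chosen only for illustration.

```python
from math import ceil
from statistics import NormalDist


def n_per_group_means(sigma, d, alpha=0.05, power=0.80):
    """Per-group sample size for comparing two independent means.

    Implements n = 2 * sigma^2 * (Z_{alpha/2} + Z_beta)^2 / d^2,
    rounded up to the next whole subject.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    return ceil(2 * sigma**2 * (z_alpha + z_beta) ** 2 / d**2)


# Hypothetical inputs: SD of 10 units, smallest difference of interest 5 units
print(n_per_group_means(sigma=10, d=5))    # 63 per group
print(n_per_group_means(sigma=10, d=2.5))  # 252 per group (about 4x)
```

The second result is exactly four times the first before rounding up, which is why the rounded values differ by a factor of four.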

Categorical Outcomes - Proportion Power

- Objective: Calculate sample size (n) per group to detect a specified difference between two proportions ($p_1$, $p_2$) with desired power.

- Core Components:

- α (alpha): Significance level (e.g., 0.05).

- Power (1-β): Probability of detecting a true effect (e.g., 0.80).

- $p_1$, $p_2$: Estimated proportions in the two groups.

- Effect Size: The clinically significant difference ($|p_1 - p_2|$).

- Sample Size Formula (per group):

$$ n = \frac{2\bar{p}(1-\bar{p})(Z_{\alpha/2} + Z_{\beta})^2}{(p_1 - p_2)^2} $$

- Where $\bar{p} = (p_1+p_2)/2$ is the average proportion.

- Key Relationships:

- Sample size (n) ↑ as power ↑, significance level ↓ (α ↓), or effect size ↓.

⭐ High-Yield Pearl: The required sample size is maximal for a given difference when the average proportion ($\bar{p}$) is 0.5 (50%), as this value represents maximum variance. Detecting small differences requires much larger samples.
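The proportion formula and the pearl about $\bar{p} = 0.5$ can be illustrated with a short calculation. This is a sketch of the pooled-variance formula given above, not a substitute for dedicated software. The function name `n_per_group_proportions` and the example proportions are hypothetical.

```python
from math import ceil
from statistics import NormalDist


def n_per_group_proportions(p1, p2, alpha=0.05, power=0.80):
    """Per-group sample size for comparing two proportions.

    Implements n = 2 * p_bar * (1 - p_bar) * (Z_{alpha/2} + Z_beta)^2 / (p1 - p2)^2
    with p_bar = (p1 + p2) / 2, rounded up to the next whole subject.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p1 + p2) / 2
    return ceil(2 * p_bar * (1 - p_bar) * (z_alpha + z_beta) ** 2 / (p1 - p2) ** 2)


# Same absolute difference (0.10), different average proportion
print(n_per_group_proportions(0.30, 0.20))  # p_bar = 0.25 -> 295 per group
print(n_per_group_proportions(0.55, 0.45))  # p_bar = 0.50 -> 393 per group
```

Both scenarios target a 10-percentage-point difference, but the one centered on 50% needs more subjects because $\bar{p}(1-\bar{p})$ peaks at 0.25 when $\bar{p} = 0.5$.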

Observational Studies - Cohort & Case Calculations

- Goal: Determine the minimum sample size to detect a statistically significant association (e.g., a specific Relative Risk or Odds Ratio).

- Core Inputs for Calculation:

- α (alpha): Probability of Type I error (false positive). Conventionally set at 0.05.

- β (beta): Probability of Type II error (false negative). Set at 0.20 or 0.10.

- Power (1-β): Probability of detecting a true effect. Usually 80% or 90%.

- Effect Size: The smallest association (e.g., RR, OR) you want to detect.

- Prevalence/Incidence: Expected frequency of the outcome (cohort) or exposure (case-control) in the comparison group.
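One way to turn these inputs into a number is to convert the target RR or OR, together with the baseline frequency, into two proportions and then apply the two-proportion formula from the previous section. The sketch below takes that route as a simplifying assumption. It uses equal group sizes and no continuity correction, and all function names and example values are hypothetical.

```python
from math import ceil
from statistics import NormalDist


def n_two_proportions(p1, p2, alpha=0.05, power=0.80):
    """Per-group n from the pooled two-proportion formula (normal approximation)."""
    z = NormalDist().inv_cdf(1 - alpha / 2) + NormalDist().inv_cdf(power)
    p_bar = (p1 + p2) / 2
    return ceil(2 * p_bar * (1 - p_bar) * z**2 / (p1 - p2) ** 2)


def cohort_n(incidence_unexposed, rr, **kw):
    """Cohort study: outcome incidence in the exposed is RR x baseline incidence."""
    p_exposed = rr * incidence_unexposed
    return n_two_proportions(p_exposed, incidence_unexposed, **kw)


def case_control_n(exposure_in_controls, odds_ratio, **kw):
    """Case-control study: exposure prevalence in cases implied by the OR."""
    p0 = exposure_in_controls
    p_cases = odds_ratio * p0 / (1 + p0 * (odds_ratio - 1))
    return n_two_proportions(p_cases, p0, **kw)


# Hypothetical inputs: 10% baseline incidence, RR = 2
print(cohort_n(0.10, 2.0))        # 201 per group
# Hypothetical inputs: 20% exposure among controls, OR = 2
print(case_control_n(0.20, 2.0))  # 173 per group
```

Note which frequency drives each design: the outcome incidence in the unexposed for the cohort calculation, and the exposure prevalence among controls for the case-control calculation.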

⭐ In case-control studies, increasing the number of controls per case (e.g., 2:1, 3:1) boosts study power. This benefit significantly diminishes beyond a 4:1 ratio.
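The diminishing return can be seen with a commonly used approximation: if an equal-allocation design needs n cases, a design with k controls per case needs roughly n(k+1)/(2k) cases for the same power. The sketch below assumes that approximation. The function name `cases_needed` and the starting value of 100 cases are hypothetical.

```python
def cases_needed(n_equal, k):
    """Approximate number of cases needed with k controls per case.

    Assumption: n_equal is the per-group size for a 1:1 design, and the
    usual unequal-allocation adjustment n_equal * (k + 1) / (2 * k) applies.
    """
    return n_equal * (k + 1) / (2 * k)


n_equal = 100  # hypothetical: cases required in a 1:1 design
for k in (1, 2, 3, 4, 5, 10):
    print(f"{k}:1 controls per case -> about {cases_needed(n_equal, k):.1f} cases")
```

Going from 1:1 to 2:1 saves about 25 cases in this example, while going from 4:1 to 5:1 saves only 2.5. The number of cases can never fall below half of the 1:1 requirement, however many controls are added.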

High‑Yield Points - ⚡ Biggest Takeaways

- Sample size increases with the desired power (1-β) and is directly proportional to the population variance (σ²).

- It is inversely proportional to the square of the effect size and increases as the significance level (α) is lowered.

- To ↑ power or detect a smaller effect size, you must ↑ sample size.

- Halving the effect size requires quadrupling the sample size to maintain the same power.

- Case-control sample size is influenced by the prevalence of exposure.

- Cohort/RCT sample size is driven by the expected incidence of the outcome.
