Factors Affecting Power - The Core Quartet

- Statistical Power: The probability of correctly rejecting a false null hypothesis ($H_0$), i.e., detecting a true effect. It is the complement of the Type II error rate (Power = $1 - \beta$).

| Factor | Effect of ↑ on Power | Rationale |
|---|---|---|
| Significance Level ($\alpha$) | ↑ Power | ↑ $\alpha$ (e.g., from 0.01 to 0.05) creates a larger rejection region, making it easier to find a significant result. |
| Sample Size ($n$) | ↑ Power | A larger sample provides a more precise estimate of the population parameter, reducing standard error. |
| Effect Size ($\Delta$) | ↑ Power | A larger difference or stronger relationship is inherently easier to detect. |
| Variability ($\sigma$) | ↓ Power | ↑ standard deviation leads to more overlap between distributions, obscuring the true effect. |
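The four rows of the table can be checked numerically. The sketch below is illustrative only: it assumes a two-sided, two-sample z-test with known $\sigma$ and equal group sizes, and the function name `z_test_power` and all input values are made up for the example rather than taken from the lesson.

```python
from math import sqrt
from statistics import NormalDist

_STD_NORMAL = NormalDist()  # standard normal distribution

def z_test_power(delta, sigma, n_per_group, alpha=0.05):
    """Approximate power of a two-sided, two-sample z-test (assumed design).

    delta: true difference in means, sigma: common standard deviation,
    n_per_group: subjects in each of the two groups.
    """
    se = sigma * sqrt(2.0 / n_per_group)         # standard error of the difference
    z_crit = _STD_NORMAL.inv_cdf(1 - alpha / 2)  # two-sided critical value
    shift = abs(delta) / se                      # true effect in standard-error units
    # Probability that the test statistic lands in either rejection tail.
    return _STD_NORMAL.cdf(shift - z_crit) + _STD_NORMAL.cdf(-shift - z_crit)

# Hypothetical baseline scenario, then one factor changed at a time.
baseline = z_test_power(delta=5, sigma=10, n_per_group=50)
higher_alpha = z_test_power(delta=5, sigma=10, n_per_group=50, alpha=0.10)
larger_n = z_test_power(delta=5, sigma=10, n_per_group=100)
larger_effect = z_test_power(delta=8, sigma=10, n_per_group=50)
more_noise = z_test_power(delta=5, sigma=15, n_per_group=50)

for label, value in [
    ("baseline", baseline),
    ("alpha 0.05 -> 0.10", higher_alpha),
    ("n 50 -> 100", larger_n),
    ("delta 5 -> 8", larger_effect),
    ("sigma 10 -> 15", more_noise),
]:
    print(f"{label:<20} power = {value:.3f}")
```

Under these assumptions, the first three changes raise power above the baseline and the increase in $\sigma$ lowers it, matching the direction of each row.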

Power & Error Rates - A Balancing Act

-

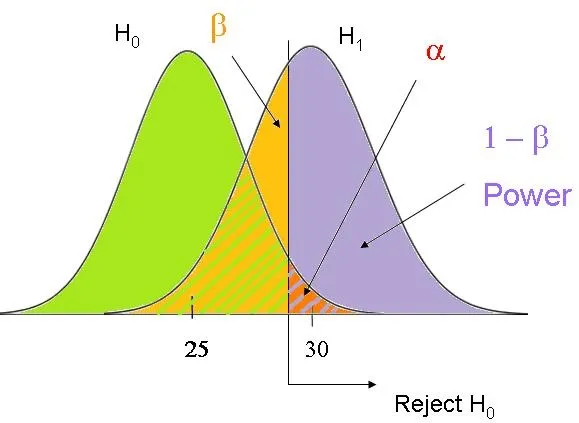

Power is the probability of correctly rejecting a false null hypothesis (detecting a true effect).

-

It's directly related to the Type II error rate ($\beta$): $Power = 1 - \beta$.

- $\beta$ is the probability of a false negative (failing to detect a true effect).

- A lower $\beta$ leads to a higher power.

-

The Trade-Off: Power is inversely affected by the Type I error rate ($\alpha$).

- $\alpha$ is the probability of a false positive (rejecting a true null hypothesis), usually set at 0.05.

- To be more confident in our result (↓ $\alpha$), we must accept a higher chance of missing a true effect (↑ $\beta$), which in turn ↓ Power.

- ↓ $\alpha$ → ↑ $\beta$ → ↓ Power.

⭐ By convention, an acceptable $\beta$ is often set at 0.2, which corresponds to a power of 0.8 (or 80%). In other words, researchers accept a 20% chance of missing a real effect of the size the study was designed to detect.
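The $\alpha$ versus $\beta$ trade-off can be made concrete with a short calculation. This is a minimal sketch assuming a two-sided z-test; `type_ii_error` and the standardized effect of 2.5 (true difference divided by its standard error) are hypothetical choices for illustration.

```python
from statistics import NormalDist

std_normal = NormalDist()  # standard normal distribution

def type_ii_error(alpha, standardized_effect):
    """Beta for a two-sided z-test (assumed design).

    standardized_effect is |true difference| / standard error.
    """
    z_crit = std_normal.inv_cdf(1 - alpha / 2)
    power = (std_normal.cdf(standardized_effect - z_crit)
             + std_normal.cdf(-standardized_effect - z_crit))
    return 1 - power

# Same study, progressively stricter significance levels.
for alpha in (0.10, 0.05, 0.01):
    beta = type_ii_error(alpha, standardized_effect=2.5)
    print(f"alpha = {alpha:.2f}  beta = {beta:.3f}  power = {1 - beta:.3f}")
```

With everything else held fixed, each stricter $\alpha$ yields a larger $\beta$ and therefore a lower power.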

Sample Size Formula - Power by the Numbers

A simplified formula shows how different factors influence the required sample size ($n$):

$n \propto \frac{(\sigma^2)(Z_\alpha + Z_\beta)^2}{(\mu_1 - \mu_2)^2}$

- $n$ (Sample Size): Number of subjects needed.

- $\sigma$ (Standard Deviation): Data variability. As $\sigma$ ↑, $n$ ↑.

- $Z_\alpha$ (Significance): Z-score for the chosen $\alpha$ level (e.g., 0.05). As $\alpha$ ↓, $n$ ↑.

- $Z_\beta$ (Power): Z-score for the desired power ($1 - \beta$). As power ↑, $n$ ↑.

- $\mu_1 - \mu_2$ (Effect Size): Magnitude of the difference to be detected. As effect size ↓, $n$ ↑.

⭐ Because $n$ scales with $1/(\mu_1 - \mu_2)^2$, detecting an effect half as large requires four times the sample size.
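The proportionality above can be turned into a rough calculator. The sketch below assumes one common concrete form, a two-sided comparison of two means with equal group sizes, where $n$ per group $= 2\sigma^2 (Z_\alpha + Z_\beta)^2 / (\mu_1 - \mu_2)^2$. The constant 2, the two-sided $Z_\alpha$, the function name `n_per_group`, and the input values are assumptions for illustration, not part of the simplified formula.

```python
from math import ceil
from statistics import NormalDist

def n_per_group(delta, sigma, alpha=0.05, power=0.80):
    """Approximate subjects per group for a two-sided, two-sample z-test.

    Assumed form: n = 2 * sigma^2 * (z_alpha + z_beta)^2 / delta^2.
    Returns the unrounded value; round up before recruiting.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # z-score for the significance level
    z_beta = NormalDist().inv_cdf(power)           # z-score for the desired power
    return 2 * sigma**2 * (z_alpha + z_beta) ** 2 / delta**2

# Hypothetical study: sigma = 20, difference to detect = 10 units.
n_full = n_per_group(delta=10, sigma=20)
n_half = n_per_group(delta=5, sigma=20)  # effect size half as large

print(f"delta = 10: about {ceil(n_full)} per group")
print(f"delta = 5:  about {ceil(n_half)} per group")
print(f"ratio: {n_half / n_full:.1f}")
```

Halving `delta` multiplies the required sample size by four, and a stricter `alpha`, a higher `power`, or a larger `sigma` each increase it, in line with the bullets above.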

High‑Yield Points - ⚡ Biggest Takeaways

- Power is the probability of detecting a true effect, i.e., of avoiding a Type II error (Power = 1 - β).

- ↑ Sample size (n) is the most common way to increase power.

- ↑ Effect size (the magnitude of the difference) makes it easier to find a statistically significant result.

- ↑ Significance level (α) increases power, but also increases the risk of a Type I error.

- ↓ Variability (standard deviation) in the data increases power.
