The Problem - More Tests, More Lies

- Conducting multiple hypothesis tests on the same data set dramatically inflates the Type I error rate.

- Each test has a pre-set alpha (e.g., $α = \textbf{0.05}$), representing a 5% chance of a false positive when the null hypothesis is actually true.

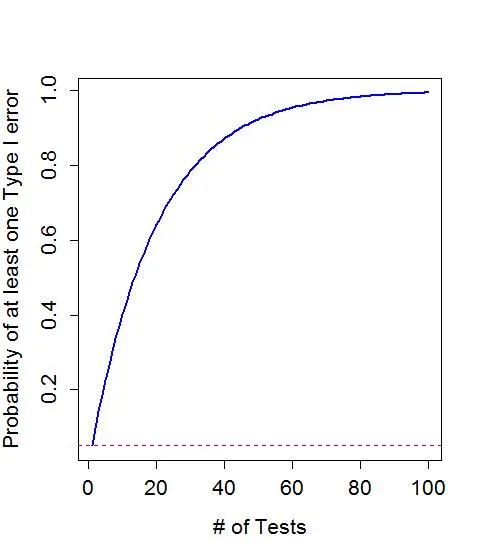

- As the number of comparisons ($k$) increases, the overall probability of making at least one Type I error (the Family-Wise Error Rate or FWER) rises quickly toward 100%.

- Formula (assuming the $k$ tests are independent): FWER = $1 - (1 - α)^k$

- With 1 test: $1 - (1 - 0.05)^1 = \textbf{0.05}$ (5%)

- With 10 tests: $1 - (1 - 0.05)^{10} ≈ \textbf{0.40}$ (40%)

- With 20 tests: $1 - (1 - 0.05)^{20} ≈ \textbf{0.64}$ (64%)
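The arithmetic above can be reproduced with a few lines of Python. This is a minimal sketch that assumes the tests are independent; the function name `family_wise_error_rate` is illustrative and not taken from any library.

```python
def family_wise_error_rate(alpha: float, k: int) -> float:
    """Probability of at least one false positive across k independent tests."""
    return 1 - (1 - alpha) ** k


# Reproduce the three worked examples from the list above.
for k in (1, 10, 20):
    print(f"k = {k:2d}: FWER = {family_wise_error_rate(0.05, k):.2f}")
# k =  1: FWER = 0.05
# k = 10: FWER = 0.40
# k = 20: FWER = 0.64
```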

⭐ This inflation is what makes "p-hacking" or "data dredging" so misleading: researchers run numerous tests until one reaches statistical significance, and that result is often just a chance finding. The outcome is non-reproducible research.

The Fix - Bonferroni's Shield

- Core Idea: A simple, common method to counteract the multiple comparison problem. It adjusts the p-value threshold for significance to prevent an inflated Type I error rate.

- The Adjustment:

  - Divide the desired significance level (α, usually 0.05) by the number of comparisons (n).

  - New significance threshold (α') = $α / n$.

  - Alternatively, multiply each individual p-value by n (capping the result at 1) and compare it with the original α; both approaches give the same decisions.

- Decision Rule: A result is only statistically significant if its p-value is less than the adjusted α'.

- Trade-off:

  - ↓ Reduces the chance of Type I errors (false positives).

  - ↑ Increases the chance of Type II errors (false negatives) because it's a highly conservative method. You might miss a real effect.

⭐ High-Yield Pearl: The Bonferroni correction is often criticized for being overly conservative, especially with a large number of comparisons. This conservatism directly increases the risk of making a Type II error, failing to detect a true difference when one exists.
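The adjustment and decision rule described in this section can be sketched in Python as follows. The function name `bonferroni` and the five p-values are hypothetical, chosen only to illustrate the rule; they do not come from a real study.

```python
def bonferroni(p_values, alpha=0.05):
    """Apply the Bonferroni correction to a list of p-values.

    Returns the adjusted threshold (alpha / n), the adjusted p-values
    (p * n, capped at 1), and a significance flag for each test.
    """
    n = len(p_values)
    threshold = alpha / n
    adjusted = [min(1.0, p * n) for p in p_values]
    # Decision rule: significant only if the raw p-value is below alpha / n.
    significant = [p < threshold for p in p_values]
    return threshold, adjusted, significant


# Hypothetical p-values from five comparisons in one study.
p_values = [0.001, 0.012, 0.030, 0.045, 0.200]
threshold, adjusted, significant = bonferroni(p_values)

for p, p_adj, sig in zip(p_values, adjusted, significant):
    print(f"p = {p:.3f}  adjusted p = {p_adj:.3f}  significant: {sig}")
```

With these illustrative numbers, four of the five results fall below the uncorrected 0.05 cutoff, but only the first (p = 0.001) survives the adjusted threshold of 0.01. That is the trade-off in miniature: fewer false positives, at the cost of possibly discarding real effects.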

Red Flags - When to Use It

The multiple comparison problem arises when a study tests multiple hypotheses simultaneously, inflating the Type I error rate. Suspect it when:

- Multiple Endpoints: Assessing several outcomes (e.g., mortality, hospital stay, pain score) from a single intervention.

- Multiple Groups vs. Control: Comparing several treatment arms (Drug A, B, C) against one control group.

- Subgroup Analyses: Post-hoc searching for effects within specific strata (e.g., age, sex) without pre-planning; a form of "p-hacking."

The family-wise error rate (FWER), the probability of at least one false positive, is $FWER = 1 - (1 - \alpha)^n$, where n is the number of comparisons.

⭐ The Bonferroni correction (dividing $\alpha$ by the number of tests, n) is the simplest fix but is often overly conservative, increasing the risk of Type II errors (false negatives).
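To see both the inflation and the fix at work, here is a small simulation sketch using only Python's standard library. It relies on the fact that a p-value is uniformly distributed between 0 and 1 when the null hypothesis is true, and it assumes independent tests. The function name `simulate_fwer`, the trial count, and the seed are arbitrary illustrative choices.

```python
import random


def simulate_fwer(n_tests=20, alpha=0.05, n_trials=20_000, seed=0):
    """Estimate the FWER when every null hypothesis is true.

    Each simulated study runs n_tests independent tests whose p-values are
    drawn from Uniform(0, 1). Returns the fraction of studies with at least
    one false positive, without and with the Bonferroni correction.
    """
    rng = random.Random(seed)
    uncorrected_hits = 0
    bonferroni_hits = 0
    for _ in range(n_trials):
        smallest_p = min(rng.random() for _ in range(n_tests))
        if smallest_p < alpha:            # at least one p < alpha
            uncorrected_hits += 1
        if smallest_p < alpha / n_tests:  # at least one p < alpha / n
            bonferroni_hits += 1
    return uncorrected_hits / n_trials, bonferroni_hits / n_trials


uncorrected, corrected = simulate_fwer()
print(f"Uncorrected FWER: {uncorrected:.3f}")
print(f"Bonferroni FWER:  {corrected:.3f}")
```

With 20 tests, the uncorrected estimate should land near the 64% given by the formula, while the Bonferroni-corrected estimate should stay at or just below 5%.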

High‑Yield Points - ⚡ Biggest Takeaways

- The multiple comparison problem occurs when conducting multiple hypothesis tests simultaneously, which inflates the overall Type I error rate.

- With each test, there's a risk of a false positive; more tests substantially increase the family-wise error rate (FWER).

- The Bonferroni correction is a simple, common solution: divide the desired alpha level (e.g., 0.05) by the number of comparisons.

- This method creates a much stricter p-value threshold for statistical significance.

- While it effectively controls for Type I errors, Bonferroni is conservative and can increase the Type II error rate (i.e., missing a true difference).
