Effect Size - Beyond P-Hacking

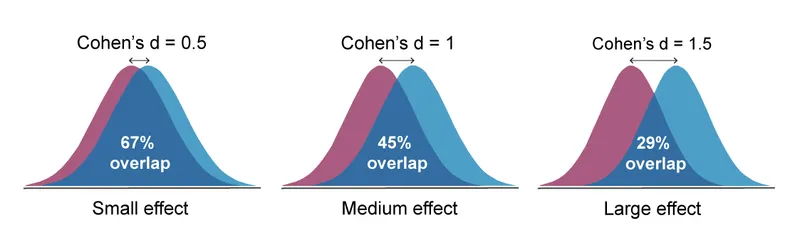

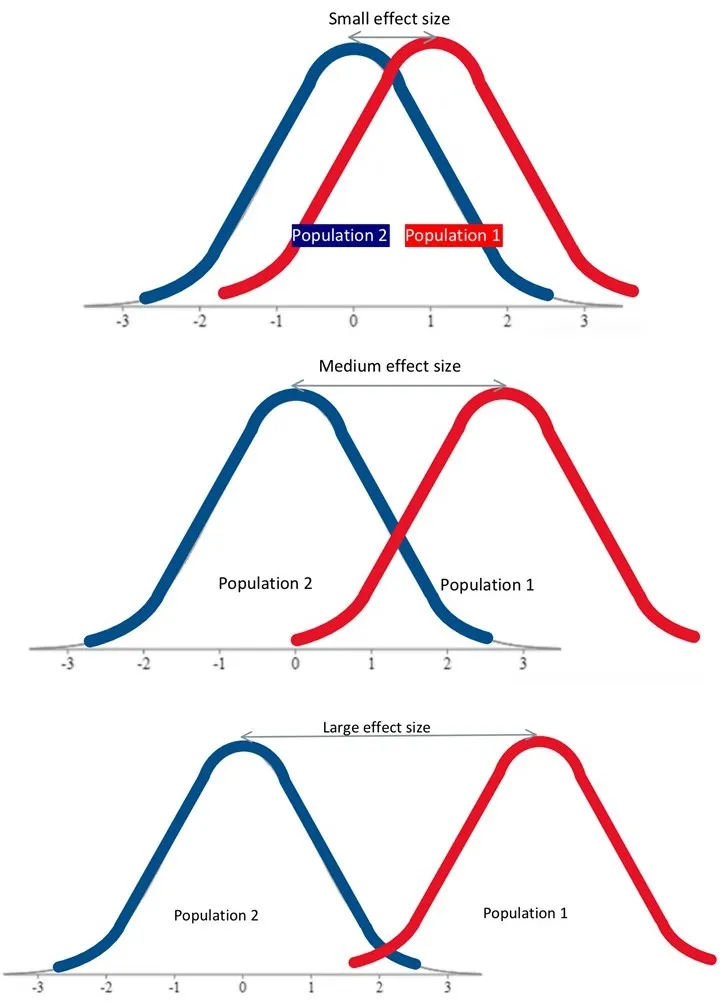

- Quantifies the magnitude of an intervention's effect, moving beyond the simple "significant vs. not significant" of p-values.

- Guards against over-reliance on p-values: a large sample can make a clinically trivial effect statistically significant (p < 0.05), but the effect size remains small.

- Common measures:

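  - Cohen's d: Standardized difference between two means (the mean difference divided by the pooled standard deviation).

  - Odds Ratio (OR) / Relative Risk (RR): For categorical (binary) outcomes.

  - Correlation coefficient (r): For the strength of a linear relationship between two continuous variables.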

- The 95% CI of an effect size indicates the precision of the estimate.
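The measures listed above can be computed by hand. The following is a minimal sketch using only the Python standard library; the function names and all data values are hypothetical and chosen purely for illustration. Cohen's d is computed here with the pooled sample standard deviation, which is one common convention.

```python
import math
from statistics import mean, stdev

def cohens_d(group_a, group_b):
    """Standardized mean difference using the pooled sample standard deviation."""
    n_a, n_b = len(group_a), len(group_b)
    var_a, var_b = stdev(group_a) ** 2, stdev(group_b) ** 2
    pooled_sd = math.sqrt(((n_a - 1) * var_a + (n_b - 1) * var_b) / (n_a + n_b - 2))
    return (mean(group_a) - mean(group_b)) / pooled_sd

def relative_risk(events_exposed, n_exposed, events_control, n_control):
    """Risk in the exposed group divided by risk in the control group."""
    return (events_exposed / n_exposed) / (events_control / n_control)

def odds_ratio(events_exposed, n_exposed, events_control, n_control):
    """Odds of the event in the exposed group divided by odds in the control group."""
    odds_exposed = events_exposed / (n_exposed - events_exposed)
    odds_control = events_control / (n_control - events_control)
    return odds_exposed / odds_control

def pearson_r(x, y):
    """Pearson correlation coefficient for paired observations."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return cov / math.sqrt(sum((a - mx) ** 2 for a in x) * sum((b - my) ** 2 for b in y))

# Hypothetical data, for illustration only.
treated = [5.0, 6.0, 7.0, 8.0, 9.0]
control = [3.0, 4.0, 5.0, 6.0, 7.0]
d = cohens_d(treated, control)           # about 1.26
rr = relative_risk(10, 100, 20, 100)     # 0.5
or_ = odds_ratio(10, 100, 20, 100)       # 4/9, about 0.44
r = pearson_r([1, 2, 3, 4], [2, 4, 6, 8])

print(f"d = {d:.2f}, RR = {rr:.2f}, OR = {or_:.2f}, r = {r:.2f}")
```

Note that RR and OR differ here (0.5 vs. about 0.44) even though they come from the same 2x2 counts; the two converge only when the outcome is rare.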

⭐ A statistically significant result (p < 0.05) with a small effect size may be clinically meaningless. Always assess both the p-value and the effect size to determine clinical importance.
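To see why sample size alone can drive the p-value, the sketch below holds a small effect size fixed (d = 0.05, a hypothetical value) and increases the number of participants per group. It assumes two equal-sized groups and a normal (z) approximation to the two-sample test, so the numbers are illustrative rather than exact.

```python
import math
from statistics import NormalDist

def two_sided_p_for_d(d, n_per_group):
    """Approximate two-sided p-value for a standardized difference d
    between two equal-sized groups (z approximation)."""
    z = d * math.sqrt(n_per_group / 2)
    return 2 * (1 - NormalDist().cdf(abs(z)))

small_d = 0.05  # hypothetical, clinically trivial effect size
p_values = {n: two_sided_p_for_d(small_d, n) for n in (100, 1_000, 10_000, 100_000)}

for n, p in p_values.items():
    print(f"n per group = {n:>7}: d = {small_d}, p = {p:.4g}")
```

The effect size never changes, yet the result crosses p < 0.05 once the groups are large enough.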

Confidence Intervals - Range of Reality

- A Confidence Interval (CI) provides a range of plausible values for an unknown population parameter (e.g., true mean difference or odds ratio), based on sample data.

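- A 95% CI means that if a study were repeated many times, about 95% of the CIs calculated this way would contain the true population value. It does not mean there is a 95% probability that the true value lies within any single computed interval. A CI conveys the precision of an estimate, not just statistical significance.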

- Interpretation:

  - Narrow CI → High precision (more certain about the true value).

  - Wide CI → Low precision (less certain).
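The "repeated study" interpretation of a 95% CI can be checked by simulation. The sketch below draws many samples from a population with a known mean and counts how often the interval captures it. The population values, sample size, and number of trials are all hypothetical, and the interval assumes a known population standard deviation (a z-interval) to keep the example short.

```python
import random
from statistics import NormalDist, mean

TRUE_MEAN = 120.0   # hypothetical population mean (e.g., systolic BP in mmHg)
KNOWN_SD = 15.0     # hypothetical population SD, assumed known
N = 50              # participants per simulated study
TRIALS = 2000       # number of simulated studies

rng = random.Random(0)                   # fixed seed for reproducibility
z_crit = NormalDist().inv_cdf(0.975)     # about 1.96 for a 95% CI
half_width = z_crit * KNOWN_SD / N ** 0.5

hits = 0
for _ in range(TRIALS):
    sample = [rng.gauss(TRUE_MEAN, KNOWN_SD) for _ in range(N)]
    sample_mean = mean(sample)
    # Does this study's CI contain the true population mean?
    if sample_mean - half_width <= TRUE_MEAN <= sample_mean + half_width:
        hits += 1

coverage = hits / TRIALS
print(f"Proportion of CIs containing the true mean: {coverage:.3f}")
```

The proportion should land close to 0.95, with some run-to-run variation because the number of simulated studies is finite.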

⭐ The width of the confidence interval shrinks as the sample size grows, in proportion to 1/√n. A larger sample size leads to a narrower, more precise CI; for example, quadrupling the sample size roughly halves the width.
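The relationship between sample size and CI width can be sketched for a CI around a mean. The helper `ci_width` is hypothetical, the standard deviation of 15 is an assumed value, and the formula uses the normal approximation (width = 2 · z · SD / √n).

```python
from statistics import NormalDist

def ci_width(sd, n, confidence=0.95):
    """Full width of a normal-approximation CI for a mean."""
    z_crit = NormalDist().inv_cdf(0.5 + confidence / 2)
    return 2 * z_crit * sd / n ** 0.5

# Hypothetical SD of 15; each step quadruples the sample size.
widths = {n: ci_width(15.0, n) for n in (25, 100, 400)}

for n, w in widths.items():
    print(f"n = {n:>3}: 95% CI width = {w:.2f}")
```

Each fourfold increase in n cuts the width in half, so gains in precision become progressively more expensive.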

Clinical Significance - Stats vs. Reality

- Statistical significance (p-value) ≠ Clinical significance (real-world importance).

- A p-value < 0.05 means that, if there were truly no effect, a result at least as extreme as the one observed would be expected less than 5% of the time. It does not quantify the size or practical importance of the effect.

- Effect Size: The primary measure of the magnitude of an intervention's impact, and the starting point for judging clinical relevance.

- Confidence Intervals (CIs) are vital for assessing clinical significance.

  - A narrow CI implies a precise estimate of the effect.

  - Key question: Does the CI range include effects that are clinically trivial? If so, the finding may be unimportant, even if statistically significant.

⭐ A large study might find a new drug lowers blood pressure by 0.1 mmHg (p < 0.001). While statistically significant, this effect size is clinically meaningless. Always scrutinize the confidence interval.
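The blood pressure example can be worked through numerically. Only the 0.1 mmHg reduction comes from the example; the standard deviation, group size, and the minimal clinically important difference (`mcid`) are assumptions chosen for illustration, and the test uses a z approximation for two equal-sized groups.

```python
import math
from statistics import NormalDist

mean_reduction = 0.1     # mmHg, from the example above
sd = 10.0                # mmHg, assumed SD of blood pressure change
n_per_group = 500_000    # assumed size of each arm in a very large trial
mcid = 5.0               # mmHg, assumed minimal clinically important difference

# Standard error of the difference between two group means.
se = sd * math.sqrt(2 / n_per_group)
z = mean_reduction / se
p_value = 2 * (1 - NormalDist().cdf(abs(z)))

z_crit = NormalDist().inv_cdf(0.975)
ci_low = mean_reduction - z_crit * se
ci_high = mean_reduction + z_crit * se

statistically_significant = p_value < 0.001
ci_reaches_mcid = ci_high >= mcid   # could the true effect be clinically important?

print(f"p = {p_value:.2g}, 95% CI = ({ci_low:.3f}, {ci_high:.3f}) mmHg")
print(f"Statistically significant: {statistically_significant}")
print(f"CI reaches the clinically important threshold: {ci_reaches_mcid}")
```

Under these assumptions the CI is narrow and excludes zero, yet its upper bound sits far below the assumed threshold, so even the most optimistic plausible effect is clinically trivial.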

High‑Yield Points - ⚡ Biggest Takeaways

- A 95% Confidence Interval (CI) that excludes the null value (e.g., 0 for mean difference, 1 for OR/RR) implies statistical significance (p < 0.05).

- Narrow CIs indicate high precision and are often the result of larger sample sizes.

- Wider CIs suggest lower precision and may be due to smaller sample sizes.

- CIs provide the range of plausible effect sizes, which a p-value alone does not.

- Always assess if the effect size is clinically meaningful, not just statistically significant.

- Increasing the confidence level (e.g., from 95% to 99%) widens the CI.
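Two of the points above (a CI that excludes the null value implies significance, and a higher confidence level widens the CI) can be illustrated with an odds ratio. The 2x2 counts below are hypothetical, and the interval is the standard Wald interval on the log-odds scale, which is one common method among several.

```python
import math
from statistics import NormalDist

# Hypothetical 2x2 table: events / non-events in exposed and control groups.
a, b = 30, 70   # exposed: events, non-events
c, d = 15, 85   # control: events, non-events

log_or = math.log((a * d) / (b * c))
se_log_or = math.sqrt(1 / a + 1 / b + 1 / c + 1 / d)

def or_ci(confidence):
    """Wald CI for the odds ratio, computed on the log scale."""
    z_crit = NormalDist().inv_cdf(0.5 + confidence / 2)
    return (math.exp(log_or - z_crit * se_log_or),
            math.exp(log_or + z_crit * se_log_or))

lo95, hi95 = or_ci(0.95)
lo99, hi99 = or_ci(0.99)
p_value = 2 * (1 - NormalDist().cdf(abs(log_or / se_log_or)))

print(f"OR = {math.exp(log_or):.2f}, p = {p_value:.3f}")
print(f"95% CI: ({lo95:.2f}, {hi95:.2f})  excludes 1: {not lo95 <= 1 <= hi95}")
print(f"99% CI: ({lo99:.2f}, {hi99:.2f})  excludes 1: {not lo99 <= 1 <= hi99}")
```

With these counts the 95% CI excludes 1 (matching p < 0.05), while the wider 99% CI includes 1 (matching p > 0.01).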
