Common misinterpretations of p-values - The P-Value Fallacy Files

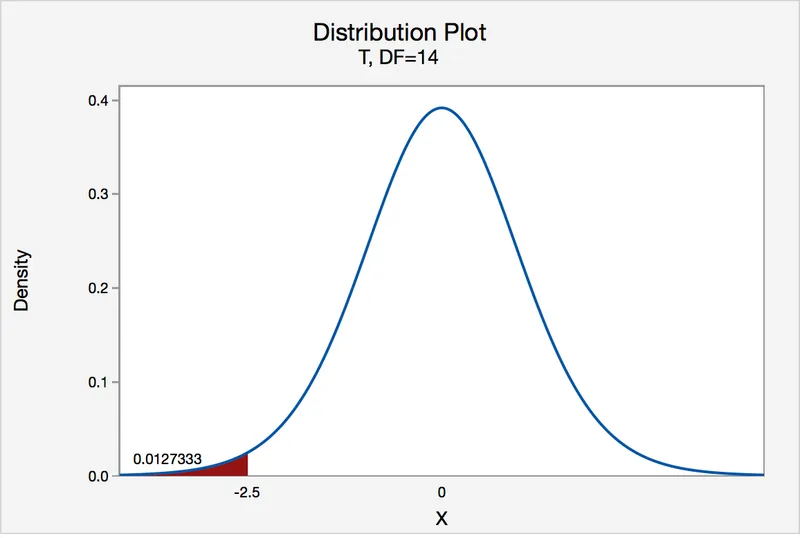

A p-value is the probability of obtaining the observed study results (or more extreme results) assuming the null hypothesis (H₀) is true.

Fallacy 1: The p-value is the probability that the null hypothesis is true.

- ⚠️ This is the most common error. The p-value is calculated on the assumption that H₀ is true, so it cannot also be the probability of H₀. It is $P(\text{data} \mid H_0)$, not $P(H_0 \mid \text{data})$.

⭐ A p-value of 0.05 does not mean there is a 5% chance the null hypothesis is true. It means there is a 5% chance of observing the data (or more extreme data) if the null hypothesis were true.
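The gap between $P(\text{data} \mid H_0)$ and $P(H_0 \mid \text{data})$ can be made concrete with Bayes' rule. The sketch below is illustrative only: the prior proportion of true nulls (`prior_null`) and the study power are assumed values, not figures from this lesson, and it conditions on "p < α" rather than on one exact p-value.

```python
def prob_null_given_significant(prior_null, alpha=0.05, power=0.80):
    """P(H0 is true | result is significant), by Bayes' rule.

    prior_null and power are hypothetical inputs chosen for illustration.
    """
    false_pos = alpha * prior_null          # true nulls that still reach p < alpha
    true_pos = power * (1.0 - prior_null)   # real effects that reach p < alpha
    return false_pos / (false_pos + true_pos)


for prior in (0.5, 0.9):
    print(prior, round(prob_null_given_significant(prior), 3))
# 0.5 -> 0.059
# 0.9 -> 0.36
```

Under these assumptions the chance that H₀ is true after a significant result ranges from about 6% to 36%, depending entirely on the prior, which the p-value itself knows nothing about.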

Fallacy 2: A non-significant p-value (e.g., p > 0.05) means the null hypothesis is true.

- This wrongly equates "no evidence of an effect" with "evidence of no effect."

- The study may be underpowered, leading to a Type II error (false negative).
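To see how easily an underpowered study misses a real effect, the following sketch approximates the power of a two-sided, two-sample z-test. The standardized effect size (`d = 0.3`) and the group sizes are hypothetical, and the normal approximation is a simplification of what a real power analysis would use.

```python
from math import sqrt
from statistics import NormalDist


def power_two_sample_z(d, n_per_group, alpha=0.05):
    """Approximate power of a two-sided two-sample z-test.

    d is the standardized mean difference (assumed, for illustration).
    """
    z = NormalDist()
    z_crit = z.inv_cdf(1 - alpha / 2)
    shift = d * sqrt(n_per_group / 2)   # expected z-statistic under the alternative
    # Probability the test statistic lands in either rejection region.
    return z.cdf(shift - z_crit) + z.cdf(-shift - z_crit)


small = power_two_sample_z(d=0.3, n_per_group=20)
large = power_two_sample_z(d=0.3, n_per_group=200)
print(f"n=20 per group:  power = {small:.2f}, Type II error = {1 - small:.2f}")
print(f"n=200 per group: power = {large:.2f}, Type II error = {1 - large:.2f}")
```

With 20 participants per group, the test would miss this real effect most of the time, so p > 0.05 from such a study says little about whether H₀ is true.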

Fallacy 3: A small p-value indicates a large effect size.

- A p-value depends on sample size as well as effect size. A huge sample can yield a tiny p-value for a trivial, clinically irrelevant effect.

- Always evaluate effect size (e.g., relative risk, odds ratio) and confidence intervals to determine the magnitude of the effect.

Fallacy 4: Statistical significance equals clinical significance.

- A result can be statistically significant (p < 0.05) but not clinically meaningful.

- Example: A new drug lowers blood pressure by a statistically significant 1 mmHg, which is not a clinically relevant improvement.
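The 1 mmHg example can be worked through numerically. This sketch uses a two-sample z-test and assumes a standard deviation of 15 mmHg and two hypothetical trial sizes. Neither number comes from the lesson. They only show how the same tiny effect moves from "non-significant" to "highly significant" as the sample grows.

```python
from math import sqrt
from statistics import NormalDist


def two_sided_p(mean_diff, sd, n_per_group):
    """Two-sided p-value for a difference in means (z-test, equal group sizes)."""
    se = sd * sqrt(2 / n_per_group)     # standard error of the difference
    z = mean_diff / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Same 1 mmHg effect, assumed SD of 15 mmHg, two hypothetical sample sizes.
p_small_trial = two_sided_p(mean_diff=1.0, sd=15.0, n_per_group=50)
p_large_trial = two_sided_p(mean_diff=1.0, sd=15.0, n_per_group=10_000)
print(f"n=50 per group:     p = {p_small_trial:.3f}")
print(f"n=10000 per group:  p = {p_large_trial:.2e}")
```

The effect size is identical in both runs. Only the p-value changes, which is why the p-value cannot stand in for the magnitude or clinical relevance of an effect.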

Confidence Intervals - The P-Value's Big Brother

- Definition: A Confidence Interval (CI) is a range of values calculated from sample data that is likely to contain the true population parameter (e.g., mean difference, relative risk).

- The 95% CI is standard: if the study were repeated many times and a CI calculated each time, about 95% of those CIs would contain the true value.
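The repeated-sampling interpretation can be checked with a small simulation. This is a minimal sketch assuming normally distributed data with a known standard deviation (so a z-interval applies). The true mean, sigma, sample size, and seed are arbitrary illustrative choices.

```python
import random
from math import sqrt
from statistics import NormalDist, fmean


def ci_coverage(true_mean=10.0, sigma=2.0, n=30, reps=2000, seed=0):
    """Fraction of simulated 95% z-intervals that contain the true mean."""
    rng = random.Random(seed)
    z_crit = NormalDist().inv_cdf(0.975)
    half_width = z_crit * sigma / sqrt(n)
    hits = 0
    for _ in range(reps):
        sample_mean = fmean(rng.gauss(true_mean, sigma) for _ in range(n))
        if sample_mean - half_width <= true_mean <= sample_mean + half_width:
            hits += 1
    return hits / reps


coverage = ci_coverage()
print(f"Share of intervals containing the true mean: {coverage:.3f}")
```

The printed share should land close to 0.95, with small run-to-run variation if the seed is changed.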

CI & P-Value Relationship (for α = 0.05):

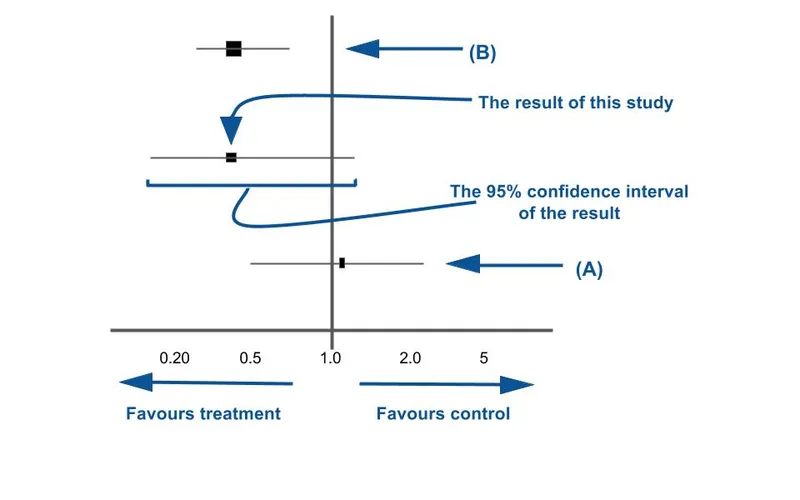

- A CI provides more information than a p-value; it presents a range of plausible values for the true effect.

- Statistical Significance:

  - If the 95% CI does not contain the null value, the result is statistically significant (p < 0.05).

  - If the 95% CI does contain the null value, the result is not statistically significant (p ≥ 0.05).

- Key Null Values:

  - For differences (e.g., mean difference): Null value is 0.

  - For ratios (e.g., Odds Ratio [OR], Relative Risk [RR]): Null value is 1.

Why CIs are Superior:

- Precision: The width of the CI indicates the precision of the point estimate.

  - Narrow CI → High precision (less random error).

  - Wide CI → Low precision (more random error).

- Effect Size: The CI provides the magnitude and direction of the effect, which a p-value alone cannot do.
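The link between CI width and precision follows from the standard error shrinking with the square root of the sample size. The sketch below computes the half-width of a z-based 95% CI for a mean. The standard deviation of 10 and the sample sizes are assumed values for illustration.

```python
from math import sqrt
from statistics import NormalDist


def ci_half_width(sd, n, level=0.95):
    """Half-width of a z-based confidence interval for a mean."""
    z_crit = NormalDist().inv_cdf(0.5 + level / 2)
    return z_crit * sd / sqrt(n)


for n in (25, 100, 400):
    print(n, round(ci_half_width(sd=10, n=n), 2))
# 25  -> 3.92
# 100 -> 1.96
# 400 -> 0.98
```

Quadrupling the sample size halves the interval width, so narrow intervals generally reflect larger samples or less variable data.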

⭐ When evaluating a study's OR or RR, check if the 95% CI includes 1. If it does (e.g., CI: 0.8 to 2.1), the association is not statistically significant. If it does not (e.g., CI: 1.5 to 3.0), the association is significant.
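The "does the CI include 1?" check can be sketched for a relative risk from a 2×2 table. The example below uses the common log-scale (Wald) approximation for the CI. The cell counts are hypothetical, and other interval methods exist that may give slightly different bounds.

```python
from math import exp, log, sqrt
from statistics import NormalDist


def relative_risk_ci(a, b, c, d, level=0.95):
    """RR and its log-scale Wald CI.

    a, b: exposed with / without the event
    c, d: unexposed with / without the event
    """
    rr = (a / (a + b)) / (c / (c + d))
    se_log = sqrt(1 / a - 1 / (a + b) + 1 / c - 1 / (c + d))
    z_crit = NormalDist().inv_cdf(0.5 + level / 2)
    lower = exp(log(rr) - z_crit * se_log)
    upper = exp(log(rr) + z_crit * se_log)
    return rr, lower, upper


# Hypothetical counts, chosen only to illustrate both outcomes.
for counts in ((30, 70, 15, 85), (12, 88, 10, 90)):
    rr, lower, upper = relative_risk_ci(*counts)
    significant = not (lower <= 1.0 <= upper)   # null value for a ratio is 1
    print(f"RR = {rr:.2f}, 95% CI {lower:.2f} to {upper:.2f}, "
          f"significant at 0.05: {significant}")
```

The first table gives an interval entirely above 1, and the second gives an interval that spans 1, matching the two cases described above.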

- A p-value is NOT the probability that the null hypothesis is true. It is the probability of the observed data (or more extreme) assuming the null hypothesis is true.

- A non-significant p-value does not prove the null hypothesis. It simply reflects insufficient evidence to reject it (absence of evidence ≠ evidence of absence).

- Statistical significance does not imply clinical significance. A tiny p-value can be associated with a clinically trivial effect, especially in large studies.
