P-Values - Numbers Have Significance

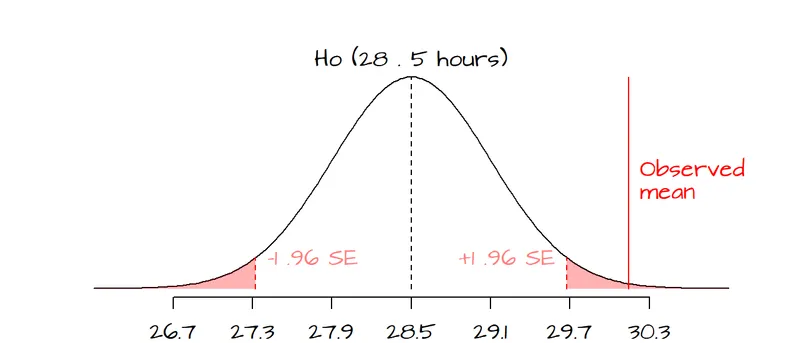

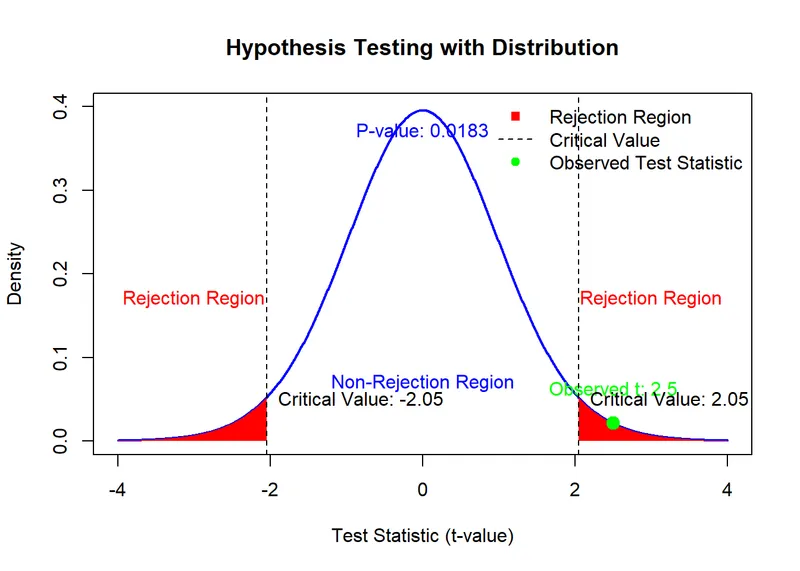

P-value: The probability of observing data at least as extreme as the current results, assuming the null hypothesis (H₀) is true.

- Null Hypothesis (H₀): Assumes no difference or effect (e.g., a new drug is no better than a placebo).

- Alternative Hypothesis (H₁): Assumes a difference or effect exists.

Significance Threshold (α): Typically set at 0.05.

- If $p < 0.05$, the results are statistically significant.

- This allows for the rejection of the null hypothesis (H₀).
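As a rough illustration of how a p-value is compared against α, the sketch below runs a two-proportion z-test on a made-up trial. The event counts (30/200 on drug vs. 50/200 on placebo), the function names, and the choice of a pooled normal approximation are assumptions for illustration only; they do not come from the lesson.

```python
from statistics import NormalDist

ALPHA = 0.05  # significance threshold from the text


def two_proportion_z(events_a, n_a, events_b, n_b):
    """z statistic for H0: both groups share one event proportion (pooled SE)."""
    p_a, p_b = events_a / n_a, events_b / n_b
    pooled = (events_a + events_b) / (n_a + n_b)
    se = (pooled * (1 - pooled) * (1 / n_a + 1 / n_b)) ** 0.5
    return (p_a - p_b) / se


def two_sided_p_value(z):
    """Probability of a statistic at least as extreme as |z| if H0 is true."""
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Hypothetical trial: 30/200 events on the drug, 50/200 on placebo.
z = two_proportion_z(30, 200, 50, 200)
p = two_sided_p_value(z)
reject_h0 = p < ALPHA

print(f"z = {z:.2f}, p = {p:.4f}, reject H0: {reject_h0}")  # z = -2.50, p is about 0.012
```

With these invented counts the p-value is below 0.05, so H₀ would be rejected at the conventional threshold.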

⭐ Statistical significance is not the same as clinical significance. A very large study might find a statistically significant result that is too small to be clinically meaningful (e.g., a drug that lowers blood pressure by only 1 mmHg).
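The 1 mmHg example can be made concrete with a small calculation. The sketch below holds the effect fixed at 1 mmHg and only changes the number of patients per group; the standard deviation of 10 mmHg, the group sizes, and the use of a z-test with known SD are assumptions chosen for illustration.

```python
from math import sqrt
from statistics import NormalDist

DIFF_MMHG = 1.0   # fixed, clinically trivial difference in mean blood pressure
SD_MMHG = 10.0    # assumed common standard deviation (hypothetical)


def p_value_for_mean_difference(diff, sd, n_per_group):
    """Two-sided p-value for a difference in means, two equal groups, known SD."""
    se = sd * sqrt(2 / n_per_group)   # standard error of the difference
    z = diff / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Same 1 mmHg effect, increasing sample size.
p_by_n = {
    n: p_value_for_mean_difference(DIFF_MMHG, SD_MMHG, n)
    for n in (100, 1_000, 10_000)
}

for n, p in p_by_n.items():
    verdict = "significant" if p < 0.05 else "not significant"
    print(f"n per group = {n:>6}: p = {p:.3g} ({verdict})")
```

Under these assumptions the p-value is about 0.48 with 100 patients per group and far below 0.05 with 10,000 per group, even though the effect itself never changes.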

Confidence Intervals - Guessing with Precision

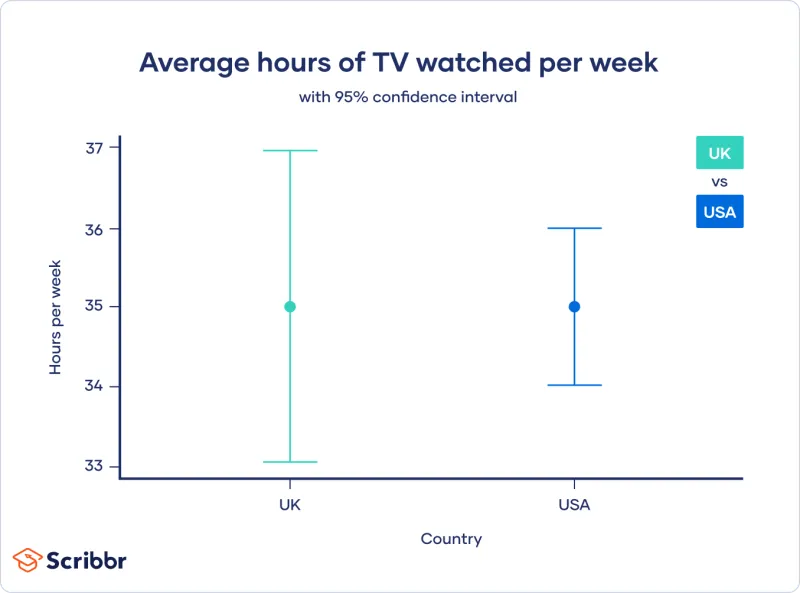

- Confidence Interval (CI): An estimated range of values that is likely to contain the true population parameter. It quantifies the uncertainty around an estimate.

- A 95% CI implies that if a study were repeated many times, 95% of the calculated CIs would contain the true population value.

- Relationship to p-value:

- If the 95% CI for an effect size does NOT include the null value, the result is statistically significant (p < 0.05).

- Null values: 1 for ratios (Odds Ratio, Relative Risk), 0 for differences (e.g., difference in means).

- Precision & CI Width:

- Narrow CI: ↑ precision (less random error).

- Wide CI: ↓ precision (more random error).
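The repeated-sampling interpretation and the link between sample size and CI width can both be checked by simulation. This is a minimal sketch: the population values (mean 120, SD 15), the sample sizes, the seed, and the use of the normal multiplier 1.96 instead of a t multiplier are all assumptions made for illustration.

```python
import random
from math import sqrt
from statistics import NormalDist, mean, stdev

Z_95 = NormalDist().inv_cdf(0.975)  # about 1.96


def mean_ci(sample):
    """Approximate 95% CI for a mean using the normal multiplier."""
    m = mean(sample)
    half_width = Z_95 * stdev(sample) / sqrt(len(sample))
    return m - half_width, m + half_width


def coverage(true_mean, sd, n, repeats, rng):
    """Fraction of repeated studies whose CI contains the true mean."""
    hits = 0
    for _ in range(repeats):
        sample = [rng.gauss(true_mean, sd) for _ in range(n)]
        low, high = mean_ci(sample)
        hits += low <= true_mean <= high
    return hits / repeats


def ci_width(sample):
    low, high = mean_ci(sample)
    return high - low


rng = random.Random(0)  # fixed seed so the run is repeatable
cov = coverage(true_mean=120.0, sd=15.0, n=100, repeats=2000, rng=rng)

# Precision: a larger sample gives a narrower interval.
width_small_n = ci_width([rng.gauss(120.0, 15.0) for _ in range(25)])
width_large_n = ci_width([rng.gauss(120.0, 15.0) for _ in range(400)])

print(f"coverage over 2000 simulated studies: {cov:.3f}")
print(f"CI width, n=25: {width_small_n:.2f}; n=400: {width_large_n:.2f}")
```

The coverage should land close to 0.95, and the interval from 400 observations should be several times narrower than the one from 25.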

⭐ If the 95% CIs for two independent groups do not overlap, their difference is statistically significant. If they do overlap, however, the difference may or may not be statistically significant; you must calculate the CI for the difference itself.
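A small worked case shows why overlapping intervals do not settle the question. The group means and standard errors below are invented, and the groups are assumed to be independent so that the standard error of the difference is the square root of the sum of squared standard errors.

```python
from math import sqrt
from statistics import NormalDist

Z_95 = NormalDist().inv_cdf(0.975)  # about 1.96


def ci(estimate, se):
    """Approximate 95% CI from an estimate and its standard error."""
    return estimate - Z_95 * se, estimate + Z_95 * se


# Hypothetical group summaries (mean, standard error).
mean_a, se_a = 10.0, 1.0
mean_b, se_b = 13.0, 1.0

ci_a = ci(mean_a, se_a)   # roughly (8.04, 11.96)
ci_b = ci(mean_b, se_b)   # roughly (11.04, 14.96)
groups_overlap = max(ci_a[0], ci_b[0]) <= min(ci_a[1], ci_b[1])

# CI for the difference itself, assuming independent groups.
se_diff = sqrt(se_a**2 + se_b**2)
ci_diff = ci(mean_b - mean_a, se_diff)   # roughly (0.23, 5.77)
difference_significant = not (ci_diff[0] <= 0 <= ci_diff[1])

print(f"group CIs overlap: {groups_overlap}")
print(f"CI for difference: ({ci_diff[0]:.2f}, {ci_diff[1]:.2f})")
print(f"difference significant at 0.05: {difference_significant}")
```

Here the two group intervals overlap, yet the interval for the difference excludes 0, so the difference is statistically significant at the 0.05 level.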

Clinical vs. Statistical - Real World vs. Research

- Statistical Significance: Is the observed effect likely due to chance? Governed by the $p$-value.

- Clinical Significance: Is the effect large enough to be meaningful for patients and change clinical practice? Governed by effect size.

| Feature | Statistical Significance | Clinical Significance |
|---|---|---|
| Question Answered | Is the effect real (not by chance)? | Does the effect matter in practice? |
| Key Metric | $p$-value | Effect size (e.g., NNT, odds ratio) |
| Key Influencer | Sample size | Magnitude of benefit, patient values |
| Interpretation | If $p < 0.05$, the result is unlikely to be due to chance alone | A small effect may be statistically significant but clinically irrelevant |

- Statistical significance (p < 0.05) just means a finding is unlikely to be due to chance; it does not automatically imply clinical importance.

- Clinical significance is the practical relevance of a treatment effect: whether it makes a real difference to patients.

- Very large sample sizes can make tiny, clinically irrelevant effects statistically significant.

- Conversely, a small study may be underpowered and fail to find statistical significance for a clinically important effect.

- Evaluate the effect size (e.g., relative risk) and the confidence interval to gauge clinical relevance.
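To show what evaluating effect size alongside the confidence interval might look like, the sketch below computes a relative risk with an approximate 95% CI and the number needed to treat (NNT) for a made-up trial. The counts, the function names, and the choice of the log-scale (Katz) interval are assumptions for illustration; NNT is taken as the reciprocal of the absolute risk reduction.

```python
from math import exp, log, sqrt
from statistics import NormalDist

Z_95 = NormalDist().inv_cdf(0.975)  # about 1.96


def relative_risk_ci(events_trt, n_trt, events_ctl, n_ctl):
    """Relative risk with an approximate 95% CI computed on the log scale."""
    rr = (events_trt / n_trt) / (events_ctl / n_ctl)
    se_log_rr = sqrt(1 / events_trt - 1 / n_trt + 1 / events_ctl - 1 / n_ctl)
    low = exp(log(rr) - Z_95 * se_log_rr)
    high = exp(log(rr) + Z_95 * se_log_rr)
    return rr, low, high


def number_needed_to_treat(events_trt, n_trt, events_ctl, n_ctl):
    """NNT = 1 / absolute risk reduction (control risk minus treated risk)."""
    arr = events_ctl / n_ctl - events_trt / n_trt
    return 1 / arr


# Hypothetical trial: 30/200 events on treatment, 50/200 on control.
rr, rr_low, rr_high = relative_risk_ci(30, 200, 50, 200)
nnt = number_needed_to_treat(30, 200, 50, 200)
excludes_null = not (rr_low <= 1 <= rr_high)  # null value for a ratio is 1

print(f"RR = {rr:.2f} (95% CI {rr_low:.2f} to {rr_high:.2f})")
print(f"CI excludes 1: {excludes_null}; NNT = {nnt:.0f}")
```

With these invented counts the relative risk is 0.60 with a CI of about 0.40 to 0.90, and about 10 patients would need to be treated to prevent one event. Whether that is clinically worthwhile depends on the outcome, cost, and harms, which the statistics alone do not answer.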
