Validation and Performance Assessment - Validating AI's Vision

- Why Validate?: Validation ensures that an AI model is safe for patients and clinically effective (i.e., improves outcomes). It is also vital for regulatory approval.

- Key Stages:

  - Internal Validation: Assesses model robustness on subsets of the original development data.

  - External Validation: Tests generalizability on new, independent datasets.

    - Temporal (different times)

    - Geographic (different locations)

    - Domain Shift (different populations/equipment)

- Assessment Levels (Van Calster):

  - Model Performance (technical accuracy)

  - Clinical Utility (impact on patient care & outcomes)

  - Societal Impact (e.g., cost-effectiveness, equity)

⭐ External validation on diverse, unseen datasets is crucial to assess true generalizability and to detect overfitting to the development data.

Validation and Performance Assessment - Metrics That Matter

Key metrics for assessing AI classification model performance and reliability:

Classification Metrics

| Metric | Formula | Description |
|---|---|---|
| Sensitivity (Recall, Se) | $Se = TP / (TP + FN)$ | True Positive Rate: proportion of diseased cases the model detects. |
| Specificity (Sp) | $Sp = TN / (TN + FP)$ | True Negative Rate: proportion of disease-free cases correctly ruled out. |
| PPV (Precision) | $PPV = TP / (TP + FP)$ | Positive Predictive Value: proportion of positive predictions that are truly diseased; depends on prevalence. |
| NPV | $NPV = TN / (TN + FN)$ | Negative Predictive Value: proportion of negative predictions that are truly disease-free; depends on prevalence. |
| Accuracy (Acc) | $Acc = (TP + TN) / (TP + TN + FP + FN)$ | Overall proportion of correct predictions; can be misleading when classes are imbalanced. |
| F1-score | $F1 = 2 \cdot (Precision \cdot Recall) / (Precision + Recall)$ | Harmonic mean that balances Precision (PPV) and Recall (Se). |

Other Key Measures

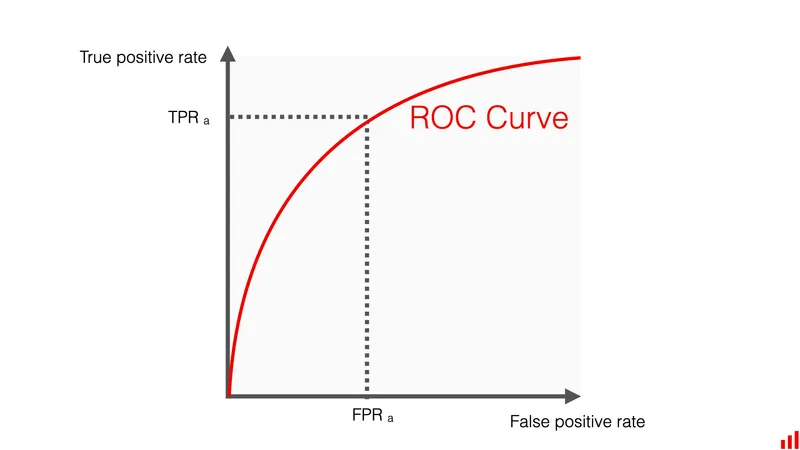

- AUC-ROC (Area Under the Receiver Operating Characteristic curve): Evaluates discrimination across all possible decision thresholds.

- AUC-PRC (Area Under the Precision-Recall curve): Particularly useful for imbalanced datasets because it focuses on performance on the positive (typically rarer) class.

- Calibration: Assesses the agreement between predicted probabilities and actual observed event frequencies.
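The three measures above can be sketched in plain Python. This assumes binary labels (1 = disease) and predicted probabilities in [0, 1]. AUC-ROC is computed through its equivalence with the probability that a randomly chosen positive case scores higher than a randomly chosen negative case, with ties counting as half. AUC-PR is approximated by average precision, which is one common step-wise estimate; other interpolations exist. Calibration is summarized by grouping predictions into equal-width probability bins and comparing the mean predicted probability with the observed event rate in each bin. The function names and the default bin count are illustrative assumptions, not a specific library's API.

```python
from typing import List, Tuple


def auc_roc(labels: List[int], scores: List[float]) -> float:
    """Probability that a random positive case scores higher than a random negative one.

    This pairwise (Mann-Whitney) form equals the area under the ROC curve;
    ties count as 0.5. It is O(n_pos * n_neg), fine for illustration only.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative case")
    wins = 0.0
    for p in pos:
        for n in neg:
            wins += 1.0 if p > n else 0.5 if p == n else 0.0
    return wins / (len(pos) * len(neg))


def average_precision(labels: List[int], scores: List[float]) -> float:
    """Average precision: mean of the precision at each rank where a positive is retrieved.

    Cases are ranked by descending score; tied scores are not grouped (a simplification).
    """
    n_pos = sum(labels)
    if n_pos == 0:
        raise ValueError("need at least one positive case")
    ranked = sorted(zip(scores, labels), key=lambda t: t[0], reverse=True)
    tp = 0
    precision_sum = 0.0
    for rank, (_, y) in enumerate(ranked, start=1):
        if y == 1:
            tp += 1
            precision_sum += tp / rank
    return precision_sum / n_pos


def calibration_bins(labels: List[int], scores: List[float],
                     n_bins: int = 5) -> List[Tuple[float, float, int]]:
    """(mean predicted probability, observed event rate, count) for each non-empty bin."""
    bins: List[List[Tuple[int, float]]] = [[] for _ in range(n_bins)]
    for y, s in zip(labels, scores):
        idx = min(int(s * n_bins), n_bins - 1)  # a score of exactly 1.0 goes in the last bin
        bins[idx].append((y, s))
    table = []
    for b in bins:
        if b:
            mean_pred = sum(s for _, s in b) / len(b)
            observed = sum(y for y, _ in b) / len(b)
            table.append((mean_pred, observed, len(b)))
    return table


labels = [1, 1, 0, 1, 0, 0]
scores = [0.95, 0.85, 0.75, 0.65, 0.35, 0.15]
print(auc_roc(labels, scores), average_precision(labels, scores))
print(calibration_bins(labels, scores))
```

In this toy example, one negative case (0.75) outranks one positive case (0.65), so AUC-ROC is 8/9 rather than 1. A well-calibrated model would show mean predicted probabilities close to the observed rates in every bin.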

⭐ AUC-ROC is a widely used metric to evaluate the discriminative ability of a classification model across various thresholds, independent of prevalence.
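The formulas in the classification-metrics table can be checked with a short sketch that computes them from confusion-matrix counts. The counts are made-up illustrative numbers, not data from any study. Both populations below have Se = Sp = 0.9, but the second has ten times as many disease-free cases (prevalence 1% instead of 10%). PPV falls sharply while Se and Sp are unchanged. This is why PPV and NPV must be interpreted for the target population.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class ConfusionMatrix:
    tp: int  # diseased, predicted positive
    fp: int  # disease-free, predicted positive
    tn: int  # disease-free, predicted negative
    fn: int  # diseased, predicted negative


def _ratio(num: int, den: int) -> Optional[float]:
    """num / den, or None when the denominator is zero (metric undefined)."""
    return num / den if den else None


def classification_metrics(cm: ConfusionMatrix) -> Dict[str, Optional[float]]:
    return {
        "Se": _ratio(cm.tp, cm.tp + cm.fn),
        "Sp": _ratio(cm.tn, cm.tn + cm.fp),
        "PPV": _ratio(cm.tp, cm.tp + cm.fp),
        "NPV": _ratio(cm.tn, cm.tn + cm.fn),
        "Acc": _ratio(cm.tp + cm.tn, cm.tp + cm.tn + cm.fp + cm.fn),
        # Equals 2*PPV*Se/(PPV+Se) whenever that is defined; the count form also handles TP = 0
        "F1": _ratio(2 * cm.tp, 2 * cm.tp + cm.fp + cm.fn),
    }


# Same Se = Sp = 0.9 in both; only the number of disease-free cases changes.
high_prev = classification_metrics(ConfusionMatrix(tp=90, fp=90, tn=810, fn=10))   # 100 of 1,000 diseased
low_prev = classification_metrics(ConfusionMatrix(tp=90, fp=990, tn=8910, fn=10))  # 100 of 10,000 diseased
print(high_prev["PPV"], low_prev["PPV"])  # 0.5 0.08333333333333333
```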

Validation and Performance Assessment - Sets & Strategies

- Dataset Types:

  - Training Set: Model building.

  - Validation (Tuning) Set: Hyperparameter tuning, overfitting prevention.

  - Test Set: Final, unbiased evaluation on unseen data.

  - Independent Test Sets: Crucial for generalizability; ideally from different populations/sources.

- Data Splitting Strategies:

  - Random: Simple random split.

  - Stratified: Preserves subgroup ratios (e.g., disease prevalence) in each split.

  - Temporal: Train on older data, test on newer data; checks for performance drift over time.

  - Site-based: Hold out data from different sites/scanners; tests generalizability.

- Validation Approaches:

  - Internal Validation: Uses subsets of the original dataset (e.g., cross-validation).

  - External Validation: Gold standard; uses new, independent datasets to assess real-world generalizability.

- Study Designs:

  - Retrospective: Uses historical data.

  - Prospective: Collects new data after the model has been developed.
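The four splitting strategies can be sketched with the standard library alone. This assumes each record is a dict with hypothetical keys `label`, `date` (an ISO `YYYY-MM-DD` string, so string comparison matches date order) and `site`. The helper names, the 20% test fraction and the record layout are illustrative assumptions.

```python
import random
from collections import defaultdict
from typing import Dict, List, Set, Tuple

Record = Dict[str, object]
Split = Tuple[List[Record], List[Record]]  # (train, test)


def random_split(records: List[Record], test_frac: float = 0.2, seed: int = 0) -> Split:
    """Simple random split with a fixed seed for reproducibility."""
    shuffled = records[:]
    random.Random(seed).shuffle(shuffled)
    n_test = round(len(shuffled) * test_frac)
    return shuffled[n_test:], shuffled[:n_test]


def stratified_split(records: List[Record], test_frac: float = 0.2, seed: int = 0) -> Split:
    """Random split within each label, so train and test keep the same prevalence."""
    by_label: Dict[object, List[Record]] = defaultdict(list)
    for r in records:
        by_label[r["label"]].append(r)
    train: List[Record] = []
    test: List[Record] = []
    for group in by_label.values():
        g_train, g_test = random_split(group, test_frac, seed)
        train += g_train
        test += g_test
    return train, test


def temporal_split(records: List[Record], cutoff: str) -> Split:
    """Train on records dated before the cutoff; test on records from the cutoff onward."""
    train = [r for r in records if r["date"] < cutoff]
    test = [r for r in records if r["date"] >= cutoff]
    return train, test


def site_split(records: List[Record], test_sites: Set[str]) -> Split:
    """Hold out entire sites (e.g., hospitals or scanners) as the test set."""
    train = [r for r in records if r["site"] not in test_sites]
    test = [r for r in records if r["site"] in test_sites]
    return train, test


# Toy records: 10% prevalence, three acquisition years, two sites.
records = [
    {"label": int(i % 10 == 0), "date": f"{2019 + i % 3}-01-01", "site": "AB"[i % 2]}
    for i in range(100)
]
train, test = stratified_split(records)
print(len(train), len(test), sum(r["label"] for r in test))
```

In the usage example, the stratified test set keeps the 10% prevalence: 2 of 20 cases are positive. A temporal or site-based hold-out is closer to the external-validation settings listed earlier.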

⭐ Prospective validation studies, though challenging, provide the highest level of evidence for an AI model's real-world clinical performance.
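Internal validation by k-fold cross-validation, listed under Validation Approaches above, can be sketched as follows. The development data are partitioned into k folds. Each fold serves once as the held-out set while the model is fit on the remaining folds, and the k held-out scores are averaged. The folds here are contiguous, so this assumes the data were shuffled (or interleaved by class) beforehand. The `fit`/`score` callables and the toy threshold model are hypothetical placeholders; any model could be substituted.

```python
from statistics import mean
from typing import Callable, List, Sequence, Tuple


def k_fold_indices(n: int, k: int) -> List[Tuple[List[int], List[int]]]:
    """Split indices 0..n-1 into k contiguous folds; return (train_idx, test_idx) pairs."""
    if not 2 <= k <= n:
        raise ValueError("k must be between 2 and n")
    sizes = [n // k + (1 if i < n % k else 0) for i in range(k)]
    folds, start = [], 0
    for size in sizes:
        test_idx = list(range(start, start + size))
        train_idx = list(range(0, start)) + list(range(start + size, n))
        folds.append((train_idx, test_idx))
        start += size
    return folds


def cross_validate(xs: Sequence, ys: Sequence, k: int,
                   fit: Callable, score: Callable) -> float:
    """Average held-out score over k folds."""
    scores = []
    for train_idx, test_idx in k_fold_indices(len(xs), k):
        model = fit([xs[i] for i in train_idx], [ys[i] for i in train_idx])
        scores.append(score(model, [xs[i] for i in test_idx], [ys[i] for i in test_idx]))
    return mean(scores)


# Hypothetical toy model: a threshold halfway between the two class means.
def fit_threshold(xs, ys):
    pos = [x for x, y in zip(xs, ys) if y == 1]
    neg = [x for x, y in zip(xs, ys) if y == 0]
    return (mean(pos) + mean(neg)) / 2


def accuracy(threshold, xs, ys):
    return mean([int((x >= threshold) == (y == 1)) for x, y in zip(xs, ys)])


xs = [0.1, 0.9, 0.2, 0.8, 0.3, 0.7, 0.15, 0.85, 0.25, 0.75]
ys = [0, 1, 0, 1, 0, 1, 0, 1, 0, 1]
print(cross_validate(xs, ys, k=5, fit=fit_threshold, score=accuracy))
```

Cross-validation only reuses the development data, so it estimates internal performance. It cannot replace external validation on independent data.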

Validation and Performance Assessment - Bias Busters & Fair AI

- Key Challenges & Solutions:

  - Sources of Bias: Must be identified to build reliable AI.

    - Selection Bias: Non-representative training data (e.g., data drawn mostly from specific demographic groups).

    - Spectrum Bias: Imbalance in disease severity or disease types in the data.

    - Annotation Bias: Inaccurate or inconsistent labels assigned by expert annotators.

    - Measurement Bias: Systematic errors during data collection or processing.

  - Generalizability: The model's ability to perform accurately on new, diverse datasets beyond the initial training set; essential for real-world clinical utility.

  - Overfitting/Underfitting: Balancing model complexity.

    - Overfitting: The model learns the training data too well (including noise) and performs poorly on unseen test data.

    - Underfitting: The model is too simple and fails to capture underlying patterns, performing poorly on both training and test data.

  - Ethical Considerations: Prioritizing fairness (avoiding bias against subgroups), accountability, and transparency in AI development and deployment.


- Reporting Guidelines: Promote transparency, reproducibility, and critical appraisal of AI studies.

  - CONSORT-AI (CONsolidated Standards of Reporting Trials - Artificial Intelligence)

  - STROBE-AI (STrengthening the Reporting of OBservational studies in Epidemiology - Artificial Intelligence)

  - TRIPOD-AI (Transparent Reporting of a multivariable prediction model for Individual Prognosis Or Diagnosis - Artificial Intelligence)
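The overfitting/underfitting trade-off described above can be illustrated with a toy regression, assuming NumPy is available. Polynomials of increasing degree are fit to 10 noisy samples of a sine curve and then scored on 200 fresh samples from the same curve. The data are synthetic, and the degrees (0, 3, 9), noise level and seed are arbitrary choices for illustration. Degree 0 underfits, with high error on both sets. Degree 9 can pass through all 10 training points, giving near-zero training error, but typically does worse on the new points than degree 3.

```python
import numpy as np


def mse(y_true: np.ndarray, y_pred: np.ndarray) -> float:
    return float(np.mean((y_true - y_pred) ** 2))


def fit_and_score(degree, x_train, y_train, x_test, y_test):
    """Fit a polynomial of the given degree on the training data; return (train MSE, test MSE)."""
    coeffs = np.polyfit(x_train, y_train, degree)
    return (mse(y_train, np.polyval(coeffs, x_train)),
            mse(y_test, np.polyval(coeffs, x_test)))


rng = np.random.default_rng(0)  # fixed seed so the toy data are reproducible
noise_sd = 0.2


def sample(x: np.ndarray) -> np.ndarray:
    """Noisy observations of the true signal sin(2*pi*x)."""
    return np.sin(2 * np.pi * x) + rng.normal(0.0, noise_sd, size=x.shape)


x_train = np.linspace(0.0, 1.0, 10)
y_train = sample(x_train)
x_test = rng.uniform(0.0, 1.0, 200)
y_test = sample(x_test)

results = {d: fit_and_score(d, x_train, y_train, x_test, y_test) for d in (0, 3, 9)}
for d, (train_err, test_err) in results.items():
    print(f"degree {d}: train MSE {train_err:.3f}, test MSE {test_err:.3f}")
```

The gap between training and test error, rather than training error alone, is the signal of overfitting.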

⭐ Adherence to reporting guidelines like CONSORT-AI and STROBE-AI is essential for transparency, reproducibility, and critical appraisal of AI validation studies.
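Checking fairness across subgroups, as urged under Sources of Bias and Ethical Considerations above, often starts with reporting performance separately for each subgroup instead of only in aggregate. The sketch below is minimal and uses sensitivity as the example metric; the same pattern applies to specificity, PPV or AUC. It assumes each prediction is tagged with a hypothetical subgroup label (e.g., site or demographic group). The records are made-up toy values.

```python
from collections import defaultdict
from typing import Dict, Iterable, Optional, Tuple

# (subgroup, true label, predicted label); 1 = disease present / predicted positive
Prediction = Tuple[str, int, int]


def sensitivity_by_group(preds: Iterable[Prediction]) -> Dict[str, Optional[float]]:
    """Sensitivity TP / (TP + FN) within each subgroup; None if a subgroup has no positives."""
    tp: Dict[str, int] = defaultdict(int)
    fn: Dict[str, int] = defaultdict(int)
    groups = set()
    for group, y_true, y_pred in preds:
        groups.add(group)
        if y_true == 1:
            if y_pred == 1:
                tp[group] += 1
            else:
                fn[group] += 1
    return {g: (tp[g] / (tp[g] + fn[g]) if tp[g] + fn[g] else None) for g in sorted(groups)}


def max_gap(per_group: Dict[str, Optional[float]]) -> float:
    """Largest difference in the metric between any two subgroups with defined values."""
    values = [v for v in per_group.values() if v is not None]
    return max(values) - min(values) if values else 0.0


preds = [
    ("A", 1, 1), ("A", 1, 1), ("A", 1, 1), ("A", 1, 0), ("A", 0, 0),
    ("B", 1, 1), ("B", 1, 0), ("B", 1, 0), ("B", 1, 0), ("B", 0, 0),
]
per_group = sensitivity_by_group(preds)
print(per_group, max_gap(per_group))  # {'A': 0.75, 'B': 0.25} 0.5
```

Here, the pooled sensitivity of 4/8 = 0.5 hides the fact that subgroup B's diseased cases are missed three times as often as subgroup A's.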

High‑Yield Points - ⚡ Biggest Takeaways

- External validation on new, unseen data, not just internal validation, is critical to truly assess generalizability.

- Key performance metrics include Sensitivity, Specificity, PPV, NPV, and especially the AUC-ROC (Area Under the ROC Curve).

- AUC-ROC provides a single, threshold-independent summary of an AI model's discriminative ability; it does not reflect calibration or clinical utility.

- Beware of overfitting: models performing well on training data but poorly on new, independent test data.

- AI models can inherit and amplify biases from training datasets; diverse data is crucial.

- The quality of the ground truth (reference standard) is paramount for reliable AI performance assessment and validation.
