Intro to Multivariate - Unmasking Complexity

- Definition: Statistical techniques that simultaneously analyze ≥3 variables (typically one outcome and multiple predictors).

- Core Aim: To understand complex interrelationships in health data where multiple factors interact.

- Identifies independent effects of variables.

- Controls for confounding, reducing bias.

- Contrasts With:

- Univariate analysis: Describes a single variable (e.g., mean age).

- Bivariate analysis: Examines relationship between two variables (e.g., smoking and lung cancer).

- Variables Involved:

- Dependent Variable (DV): The main outcome or event being studied (e.g., disease status).

- Independent Variables (IVs): Factors hypothesized to influence the DV (e.g., age, diet, exposure).

⭐ Multivariate analysis is crucial for controlling confounding variables, offering a clearer understanding of true associations in medical research.

[Figure: independent variables shown as arrows pointing to a central dependent variable, with some arrows interacting.]

- Essential for robust conclusions in clinical studies and public health interventions.
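The idea of controlling for confounding can be illustrated with a small simulation. This is a minimal sketch on made-up data, not a clinical analysis: the variable names (`age`, `exposure`, `outcome`), the effect sizes, and the helper `ols` are all assumptions chosen for illustration. Age influences both the exposure and the outcome, so the crude (bivariate) estimate is biased, while the age-adjusted estimate should land near the true value of 2.0.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 5000

# Hypothetical simulated data: age confounds the exposure-outcome relationship.
age = rng.normal(50, 10, n)
exposure = 0.05 * age + rng.normal(0, 1, n)                   # older people are more exposed
outcome = 2.0 * exposure + 0.5 * age + rng.normal(0, 1, n)    # true exposure effect = 2.0

def ols(y, *predictors):
    """Return least-squares coefficients [intercept, b1, b2, ...]."""
    X = np.column_stack([np.ones(len(y)), *predictors])
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    return coef

crude = ols(outcome, exposure)[1]           # bivariate: exposure only
adjusted = ols(outcome, exposure, age)[1]   # multivariable: exposure + age

print(f"crude estimate:    {crude:.2f}")
print(f"adjusted estimate: {adjusted:.2f}")
```

In this setup the crude coefficient overstates the exposure effect because it absorbs part of the age effect; adding age to the model separates the two.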

Regression Models - Predicting & Explaining

- Regression analysis: Models relationship between a dependent variable (outcome) and ≥1 independent variables (predictors).

- Goals:

- Prediction: Estimate outcome value.

- Explanation: Quantify association strength & direction, adjusting for confounders.

- "Multiple": Indicates >1 independent variable.

| Feature | Multiple Linear Regression | Multiple Logistic Regression |
|---|---|---|
| Outcome (Y) | Continuous (e.g., BP, blood sugar) | Binary/Dichotomous (e.g., disease yes/no) |
| Equation | $Y = \beta_0 + \beta_1X_1 + \dots + \beta_kX_k$ | $\text{logit}(P) = \beta_0 + \beta_1X_1 + \dots + \beta_kX_k$ where $P = \text{Prob(event)}$; $\text{logit}(P) = \ln\left(\frac{P}{1-P}\right)$ |
| Coefficients ($\beta$) | Change in Y per one-unit increase in X, other predictors held constant | Change in log-odds of the event per one-unit increase in X, other predictors held constant |
| Interpretation | Adjusted change in Y's value, in Y's units | $e^\beta$ = Adjusted Odds Ratio (AOR) |
| Use Case | Predicting continuous value | Predicting event probability, AORs |
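The step from a logistic coefficient to an adjusted odds ratio can be sketched in code. The example below is an illustration under stated assumptions: the cohort is simulated, the predictors (`smoker`, `age_dec`) and the true coefficients are hypothetical, and the model is fitted with a hand-written Newton-Raphson loop rather than any particular statistics package. The smoker coefficient is set to $\ln 2$, so its AOR should come out close to 2.

```python
import numpy as np

rng = np.random.default_rng(1)
n = 20000

# Hypothetical simulated cohort: binary smoker flag and standardized age.
smoker = rng.integers(0, 2, n).astype(float)
age_dec = rng.normal(0, 1, n)
true_beta = np.array([-1.0, np.log(2.0), 0.3])   # intercept, smoker (OR = 2), age

X = np.column_stack([np.ones(n), smoker, age_dec])
p = 1 / (1 + np.exp(-X @ true_beta))             # inverse logit gives P(event)
y = rng.binomial(1, p).astype(float)

# Fit logit(P) = b0 + b1*smoker + b2*age by Newton-Raphson.
beta = np.zeros(3)
for _ in range(25):
    mu = 1 / (1 + np.exp(-X @ beta))             # current fitted probabilities
    W = mu * (1 - mu)                            # variance weights
    grad = X.T @ (y - mu)                        # score vector
    hess = X.T @ (X * W[:, None])                # observed information matrix
    beta = beta + np.linalg.solve(hess, grad)

aor = np.exp(beta)                               # e^beta = adjusted odds ratio
print(f"smoker coefficient (log-odds): {beta[1]:.3f}")
print(f"smoker AOR: {aor[1]:.2f}")
```

The coefficient itself is on the log-odds scale; exponentiating it gives the odds ratio for smoking adjusted for age.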

Structuring Data - Patterns & Groups

- Reveals data structure, reduces dimensions, groups similar items.

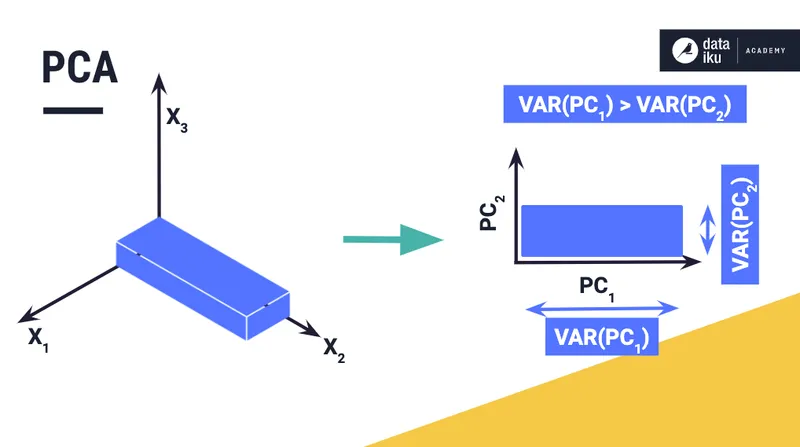

- Principal Component Analysis (PCA):

- Reduces dimensions, retains maximal variance.

- Creates new, uncorrelated principal components.

- Does not assume an underlying latent-variable model.

⭐ Principal Component Analysis (PCA) is primarily used for dimensionality reduction by creating new, uncorrelated variables (principal components) that capture the maximum variance in the data.
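The two properties named above, maximal variance and uncorrelated components, can be checked numerically. This is a minimal sketch assuming a small simulated dataset of three measurements (the data and variable names are hypothetical); it computes PCA through the singular value decomposition of the centered data rather than through a dedicated library.

```python
import numpy as np

rng = np.random.default_rng(2)

# Hypothetical data: 200 patients, 3 measurements, two of them strongly correlated.
shared = rng.normal(size=(200, 1))
X = np.hstack([
    shared + 0.1 * rng.normal(size=(200, 1)),
    2 * shared + 0.1 * rng.normal(size=(200, 1)),
    rng.normal(size=(200, 1)),
])

Xc = X - X.mean(axis=0)                           # center each variable
U, S, Vt = np.linalg.svd(Xc, full_matrices=False) # rows of Vt are the component loadings
scores = Xc @ Vt.T                                # data expressed in principal components
explained = S**2 / np.sum(S**2)                   # share of total variance per component
cov_scores = np.cov(scores, rowvar=False)         # should be diagonal

print("explained variance ratio:", np.round(explained, 3))
print("covariance of scores:\n", np.round(cov_scores, 6))
```

Because two of the three measurements move together, the first component should carry most of the total variance, and the off-diagonal entries of the score covariance matrix should be zero up to rounding.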

- Factor Analysis (FA):

- Identifies latent factors from observed variables.

- Explains correlations; assumes factors cause observed variables.

- Cluster Analysis:

- Groups similar items into distinct clusters.

- Unsupervised; no prior group knowledge.

- E.g., K-means, Hierarchical clustering.

- PCA vs. Factor Analysis - Key Distinctions:

- PCA: Dimensionality reduction; explains total variance. Components are linear combinations of the observed variables.

- FA: Identifies latent structure; explains common variance. Factors are hypothetical constructs.

High‑Yield Points - ⚡ Biggest Takeaways

- Multivariate analysis examines >2 variables simultaneously.

- Logistic regression predicts binary outcomes (e.g., disease Y/N) and yields Odds Ratios.

- Multiple linear regression predicts continuous outcomes from multiple predictors.

- ANOVA compares means of >2 groups; MANOVA for multiple dependent variables.

- Cox Proportional Hazards model analyzes time-to-event data (survival), yielding Hazard Ratios.

- Factor analysis & PCA are key for data reduction and identifying underlying structures.
