Biostatistics

📊 The Statistical Foundation: Your Data Mastery Blueprint

Every clinical decision you make, from interpreting lab values to evaluating new treatments, rests on statistical reasoning, yet most physicians never master the foundational tools that separate correlation from causation or noise from signal. This lesson turns biostatistics from abstract formulas into a practical framework for describing data, testing hypotheses, building predictive models, and analyzing survival outcomes. You'll move systematically from descriptive statistics through probability distributions to regression and time-to-event analysis, gaining the confidence to critically appraise research and make evidence-based decisions at the bedside.

Biostatistics serves as medicine's analytical engine, converting clinical observations into quantifiable evidence. Every treatment protocol, diagnostic threshold, and therapeutic guideline emerges from rigorous statistical analysis of patient data.

📌 Remember: DIME - Data collection, Inference testing, Model building, Evidence synthesis. These four pillars support every medical research conclusion, with 95% confidence intervals defining our certainty boundaries.

The statistical foundation encompasses five core domains that medical professionals must master:

- Descriptive Statistics - Summarizing patient populations

- Central tendency measures: mean (μ), median, mode

- Dispersion metrics: standard deviation (σ), variance (σ²)

- Distribution characteristics: skewness, kurtosis

- Inferential Statistics - Drawing population conclusions

- Hypothesis testing with α = 0.05 significance threshold

- Confidence intervals, conventionally reported at the 95% level

- Power analysis targeting at least 80% probability of detecting a true effect

- Probability Distributions - Modeling clinical phenomena

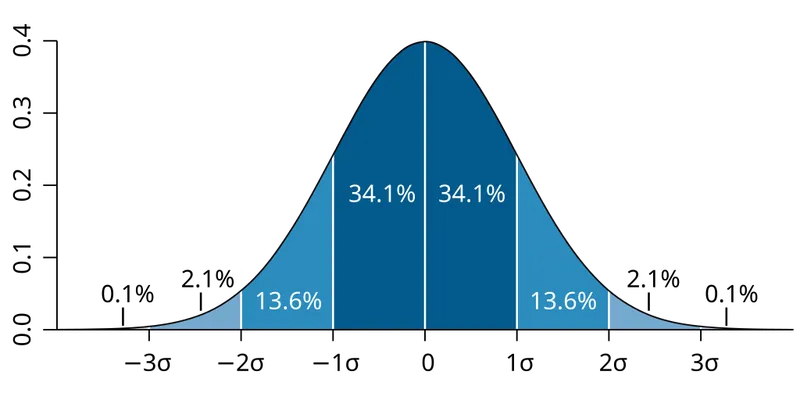

- Normal distribution covering 68% within 1σ, 95% within 2σ

- Binomial for binary outcomes (success/failure)

- Poisson for rare event modeling

- Regression Analysis - Predicting clinical outcomes

- Linear regression for continuous variables

- Logistic regression for binary outcomes

- Multiple regression controlling confounding factors

- Survival Analysis - Time-to-event modeling

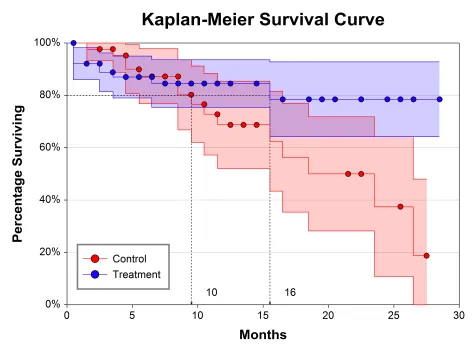

- Kaplan-Meier curves tracking patient survival

- Hazard ratios quantifying risk relationships

- Cox proportional hazards modeling

| Statistical Domain | Primary Application | Key Measures | Clinical Threshold | Sample Size Impact |

|---|---|---|---|---|

| Descriptive | Population characterization | Mean ± SD | Normal: μ ± 2σ | n ≥ 30 for normality |

| Inferential | Hypothesis testing | p-value, CI | p < 0.05 significance | Power ≥ 80% |

| Probability | Risk modeling | Probability ratios | 95% confidence bounds | Large n for precision |

| Regression | Outcome prediction | R², coefficients | R² ≥ 0.7 strong fit | 10-15 per variable |

| Survival | Time analysis | Hazard ratios | HR > 2.0 high risk | Events ≥ 10 per group |

💡 Master This: Every laboratory reference range derives from normal distribution principles. Understanding that 95% of healthy individuals fall within μ ± 1.96σ explains why values outside this range trigger clinical investigation.

Statistical software packages enable complex analyses that manual calculations cannot achieve. SPSS, R, and SAS dominate medical research, with Python gaining prominence for machine learning applications. These tools process datasets containing thousands of variables across millions of patients.

⭐ Clinical Pearl: Modern clinical trials generate terabytes of data requiring sophisticated statistical modeling. The CONSORT guidelines mandate specific statistical reporting standards, with effect sizes and confidence intervals now required alongside traditional p-values.

Connect these foundational concepts through descriptive statistics mastery to understand how clinical data transforms into actionable medical knowledge.

🎯 Descriptive Statistics Mastery: Your Clinical Data Command Center

Every clinical study begins with descriptive analysis, transforming raw patient data into interpretable summaries. These statistics reveal population characteristics, identify outliers, and establish baseline parameters for further analysis.

📌 Remember: SOCS - Shape, Outliers, Center, Spread. These four characteristics completely describe any clinical dataset, with shape determining which statistical tests apply to your analysis.

Central Tendency Measures quantify the "typical" patient in your population:

- Arithmetic Mean (μ) - Mathematical average

- Sensitive to extreme values (outliers)

- Best for normally distributed data

- Formula: μ = Σx/n

- Clinical use: Average blood pressure, cholesterol levels

- Median - Middle value when data ordered

- Resistant to outliers and skewed distributions

- Preferred for non-normal data

- 50th percentile of the distribution

- Clinical use: Hospital length of stay, survival times

- Mode - Most frequently occurring value

- Useful for categorical data

- Can have multiple modes (bimodal, multimodal)

- Clinical use: Most common diagnosis, preferred treatment

⭐ Clinical Pearl: When mean > median, the distribution is right-skewed (positive skew). When mean < median, the distribution is left-skewed (negative skew). This relationship helps identify data distribution patterns without formal testing.
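The three measures of central tendency, and the mean-versus-median heuristic for skew, can be illustrated with a short sketch. The length-of-stay values below are hypothetical and chosen only to show how a single long admission pulls the mean above the median.

```python
import statistics

# Hypothetical hospital length-of-stay values in days (illustrative only).
length_of_stay = [2, 3, 3, 4, 4, 4, 5, 6, 7, 21]

mean_los = statistics.mean(length_of_stay)      # sensitive to the 21-day stay
median_los = statistics.median(length_of_stay)  # resistant to the outlier
mode_los = statistics.mode(length_of_stay)      # most frequent value

# Heuristic from the text: mean above median suggests right skew.
if mean_los > median_los:
    skew_hint = "right-skewed"
elif mean_los < median_los:
    skew_hint = "left-skewed"
else:
    skew_hint = "roughly symmetric"

print(f"mean={mean_los:.1f}, median={median_los}, mode={mode_los}, hint={skew_hint}")
```

For data like these, the median is usually the more representative summary of a typical stay.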

Dispersion Measures quantify variability within your patient population:

- Range - Difference between maximum and minimum values

- Simple but influenced by outliers

- Formula: Range = Max - Min

- Interquartile Range (IQR) - Spread of middle 50% of data

- Resistant to outliers

- Formula: IQR = Q3 - Q1

- Clinical significance: Defines "normal" variation

- Variance (σ²) - Average squared deviation from mean

- Foundation for advanced statistical tests

- Units are squared (problematic for interpretation)

- Standard Deviation (σ) - Square root of variance

- Same units as original data

- 68% of data within μ ± 1σ for normal distributions

- 95% of data within μ ± 1.96σ

| Measure | Formula | Outlier Sensitivity | Best Use Case | Clinical Example |

|---|---|---|---|---|

| Mean | Σx/n | High | Normal distributions | Average systolic BP |

| Median | Middle value | Low | Skewed distributions | Median survival time |

| Mode | Most frequent | None | Categorical data | Most common side effect |

| Range | Max - Min | Very high | Quick spread check | Lab reference ranges |

| IQR | Q3 - Q1 | Low | Robust spread measure | Growth chart percentiles |

| Standard Deviation | √(Σ(x-μ)²/n) | High | Normal distributions | Blood glucose variability |

💡 Master This: The Coefficient of Variation (CV) equals (σ/μ) × 100%, providing standardized comparison of variability across different measurements. CV <10% indicates low variability, while CV >30% suggests high variability requiring investigation.
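As a minimal sketch of the dispersion measures above, the following computes range, standard deviation, and the coefficient of variation for a hypothetical set of glucose readings. Note that `statistics.pstdev` divides by n (matching the formula in the table), while `statistics.stdev` divides by n - 1, which is the usual choice when the data are a sample rather than the whole population.

```python
import statistics

# Hypothetical fasting glucose readings in mg/dL (illustrative only).
glucose = [88, 92, 95, 97, 100, 103, 105, 110]

mean_glucose = statistics.mean(glucose)
value_range = max(glucose) - min(glucose)

sd_population = statistics.pstdev(glucose)  # divides by n
sd_sample = statistics.stdev(glucose)       # divides by n - 1

# Coefficient of variation: SD relative to the mean, as a percentage.
cv_percent = sd_sample / mean_glucose * 100

print(f"range={value_range}, SD (sample)={sd_sample:.2f}, CV={cv_percent:.1f}%")
```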

Distribution Shape Assessment determines appropriate statistical approaches:

- Skewness - Measures asymmetry

- Skewness = 0: Symmetric distribution (as in the normal distribution)

- Skewness > +1: Strongly right-skewed

- Skewness < -1: Strongly left-skewed

- Kurtosis - Measures tail heaviness

- Kurtosis = 3: Normal distribution (mesokurtic)

- Kurtosis > 3: Heavy tails (leptokurtic)

- Kurtosis < 3: Light tails (platykurtic)

⭐ Clinical Pearl: Laboratory reference ranges typically exclude the extreme 2.5% at each tail of the distribution, creating 95% reference intervals. Values outside these ranges occur in 1 in 20 healthy individuals by chance alone.

Percentiles and Quartiles provide position-based descriptions:

- Quartiles divide data into four equal parts

- Q1 (25th percentile): First quartile

- Q2 (50th percentile): Median

- Q3 (75th percentile): Third quartile

- Percentile ranks show relative position

- 90th percentile: Value exceeds 90% of observations

- Growth charts use percentiles for child development assessment

⭐ Clinical Pearl: The five-number summary (minimum, Q1, median, Q3, maximum) gives a compact description of a distribution's center, spread, and asymmetry. Box plots visualize this summary, with whiskers extending up to 1.5 × IQR beyond the quartiles; points beyond the whiskers are flagged as potential outliers.
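The five-number summary and the 1.5 × IQR outlier rule can be sketched as follows. The data are hypothetical, and the quartile convention is an assumption: software packages interpolate quartiles in slightly different ways, and this example uses the "inclusive" method of Python's `statistics.quantiles`.

```python
import statistics

# Hypothetical length-of-stay values in days (illustrative only).
data = [2, 3, 3, 4, 4, 4, 5, 6, 7, 21]

# Quartiles using the "inclusive" interpolation convention (an assumption;
# other conventions give slightly different cut points).
q1, q2, q3 = statistics.quantiles(data, n=4, method="inclusive")
iqr = q3 - q1

five_number_summary = (min(data), q1, q2, q3, max(data))

# Box-plot fences: values beyond these are flagged as potential outliers.
lower_fence = q1 - 1.5 * iqr
upper_fence = q3 + 1.5 * iqr
outliers = [x for x in data if x < lower_fence or x > upper_fence]

print(five_number_summary, outliers)
```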

Connect these descriptive foundations through probability distributions to understand how clinical data follows predictable mathematical patterns.

🎲 Probability Distributions: The Mathematical Architecture of Medicine

Medical phenomena follow predictable mathematical patterns described by probability distributions. These distributions enable calculation of diagnostic probabilities, treatment success rates, and population health metrics.

📌 Remember: BEND - Binomial for binary outcomes, Exponential for time intervals, Normal for continuous measures, Discrete for count data. Each distribution type matches specific clinical scenarios with distinct mathematical properties.

Normal Distribution dominates medical statistics as the foundation for parametric testing:

- Properties: Bell-shaped, symmetric, continuous

- Parameters: Mean (μ) and standard deviation (σ)

- 68-95-99.7 Rule:

- 68% of values within μ ± 1σ

- 95% of values within μ ± 1.96σ

- 99.7% of values within μ ± 3σ

- Clinical applications: Blood pressure, cholesterol levels, height, weight

- Z-score transformation: Z = (X - μ)/σ standardizes any normal distribution

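⭐ Clinical Pearl: The Central Limit Theorem states that the distribution of sample means approaches normality as n grows, whatever the shape of the underlying population (provided its variance is finite). n ≥ 30 is a common rule of thumb, though strongly skewed data may need larger samples. This principle enables parametric testing even with non-normal raw data.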
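The coverage figures and the Z-score transformation for the normal distribution can be checked numerically. This is a small sketch using the standard library's `NormalDist`; the blood pressure mean and SD are hypothetical.

```python
from statistics import NormalDist

standard_normal = NormalDist(mu=0, sigma=1)

def coverage(k: float) -> float:
    """Probability that a normal value lies within k SDs of the mean."""
    return standard_normal.cdf(k) - standard_normal.cdf(-k)

def z_score(x: float, mu: float, sigma: float) -> float:
    """Standardize x: number of SDs above (+) or below (-) the mean."""
    return (x - mu) / sigma

for k in (1, 1.96, 2, 3):
    print(f"within ±{k}σ: {coverage(k):.4f}")

# Hypothetical population: systolic BP with mean 120 mmHg and SD 10 mmHg.
z = z_score(145, mu=120, sigma=10)
print(f"z for 145 mmHg: {z}")
```

Running it shows that exactly 95% coverage corresponds to ±1.96σ, while ±2σ covers slightly more.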

Binomial Distribution models binary clinical outcomes:

- Properties: Discrete, finite trials, constant probability

- Parameters: Number of trials (n) and success probability (p)

- Mean: μ = np

- Variance: σ² = np(1-p)

- Clinical applications: Treatment success/failure, diagnostic positive/negative, survival/death

- Normal approximation: Valid when np ≥ 5 and n(1-p) ≥ 5
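The binomial properties listed above can be illustrated with a short sketch. The trial size and success probability are hypothetical; the code computes the probability mass function directly, then the mean, the variance, and the rule-of-thumb check for the normal approximation.

```python
from math import comb

def binomial_pmf(k: int, n: int, p: float) -> float:
    """P(exactly k successes in n independent trials with success probability p)."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)

# Hypothetical scenario: 20 patients, each with a 30% chance of responding.
n, p = 20, 0.3

mean_responders = n * p
variance_responders = n * p * (1 - p)

# Probability that at least 8 of the 20 patients respond.
prob_at_least_8 = sum(binomial_pmf(k, n, p) for k in range(8, n + 1))

# Rule of thumb from the text for using the normal approximation.
normal_approx_ok = n * p >= 5 and n * (1 - p) >= 5

print(mean_responders, variance_responders, round(prob_at_least_8, 4), normal_approx_ok)
```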

| Distribution Type | Parameters | Mean | Variance | Clinical Example |

|---|---|---|---|---|

| Normal | μ, σ | μ | σ² | Blood pressure readings |

| Binomial | n, p | np | np(1-p) | Treatment success rates |

| Poisson | λ | λ | λ | Rare disease incidence |

| Exponential | λ | 1/λ | 1/λ² | Time between events |

| Chi-square | df | df | 2df | Goodness-of-fit testing |

Poisson Distribution models counts of rare events:

- Properties: Discrete, unlimited range, single parameter

- Parameter: Rate (λ) - average occurrences per time period

- Mean and Variance: Both equal λ

- Clinical applications: Hospital infections per month, adverse drug reactions per 1000 patients

- Approximations: Normal when λ ≥ 10, Binomial when n large and p small

💡 Master This: A Poisson distribution with λ = np approximates the binomial when n is large and p is small; a common rule of thumb is n ≥ 20 and p ≤ 0.05. This approximation simplifies calculations for rare event modeling in large populations.
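To see how close the Poisson approximation is for a rare event in a large population, the sketch below compares the two probability mass functions directly. The values of n and p are hypothetical.

```python
from math import comb, exp, factorial

def binomial_pmf(k: int, n: int, p: float) -> float:
    return comb(n, k) * p**k * (1 - p) ** (n - k)

def poisson_pmf(k: int, lam: float) -> float:
    return exp(-lam) * lam**k / factorial(k)

# Hypothetical: adverse reaction with probability 0.002 in 1000 patients.
n, p = 1000, 0.002
lam = n * p  # Poisson rate matched to the binomial mean

for k in range(6):
    print(k, round(binomial_pmf(k, n, p), 5), round(poisson_pmf(k, lam), 5))

# Largest pointwise disagreement between the two distributions for k = 0..10.
max_abs_diff = max(
    abs(binomial_pmf(k, n, p) - poisson_pmf(k, lam)) for k in range(11)
)
```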

Exponential Distribution models time-to-event data:

- Properties: Continuous, positive values only, memoryless

- Parameter: Rate (λ) - events per time unit

- Mean: 1/λ (average time between events)

- Clinical applications: Time to treatment failure, interval between seizures

- Memoryless property: Future probability independent of past events

Chi-square Distribution enables categorical data analysis:

- Properties: Continuous, positive values, right-skewed

- Parameter: Degrees of freedom (df)

- Applications: Goodness-of-fit tests, independence testing

- Critical values: χ²₀.₀₅,₁ = 3.84, χ²₀.₀₅,₂ = 5.99

⭐ Clinical Pearl: The likelihood ratio combines sensitivity and specificity into a single diagnostic measure. LR+ = Sensitivity/(1-Specificity) and LR- = (1-Sensitivity)/Specificity. Values >10 or <0.1 provide strong diagnostic evidence.

Distribution Selection Criteria:

- Data type: Continuous vs. discrete vs. categorical

- Range: Bounded vs. unbounded, positive vs. any value

- Shape: Symmetric vs. skewed, unimodal vs. multimodal

- Sample size: Large samples enable normal approximations

- Clinical context: Time-to-event, binary outcomes, count data

⭐ Clinical Pearl: Bayes' Theorem updates diagnostic probabilities based on test results: P(Disease|Test+) = [P(Test+|Disease) × P(Disease)] / P(Test+). This formula transforms pre-test probability into post-test probability using test characteristics.
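Bayes' theorem and the likelihood ratio give the same post-test probability by two routes, which the following sketch demonstrates. The prevalence, sensitivity, and specificity are hypothetical values chosen for easy arithmetic.

```python
def post_test_probability(prevalence: float, sensitivity: float, specificity: float) -> float:
    """P(Disease | Test+) via Bayes' theorem."""
    true_positive = sensitivity * prevalence
    false_positive = (1 - specificity) * (1 - prevalence)
    return true_positive / (true_positive + false_positive)

def positive_likelihood_ratio(sensitivity: float, specificity: float) -> float:
    return sensitivity / (1 - specificity)

# Hypothetical test: 10% pre-test probability, 90% sensitivity, 80% specificity.
prevalence, sens, spec = 0.10, 0.90, 0.80

ppv = post_test_probability(prevalence, sens, spec)

# Same answer through the odds form: post-test odds = pre-test odds × LR+.
lr_pos = positive_likelihood_ratio(sens, spec)
pre_test_odds = prevalence / (1 - prevalence)
post_test_odds = pre_test_odds * lr_pos
ppv_from_odds = post_test_odds / (1 + post_test_odds)

print(round(ppv, 3), round(lr_pos, 2), round(ppv_from_odds, 3))
```

Even with a reasonably accurate test, a low pre-test probability leaves the post-test probability at only about one in three here.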

Sampling Distributions connect sample statistics to population parameters:

- Standard Error: SE = σ/√n - measures sampling variability

- Confidence Intervals: μ ± (critical value × SE)

- Sample size effect: Standard error shrinks in proportion to 1/√n, so quadrupling the sample size halves the standard error
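A minimal sketch of the standard error and a 95% confidence interval for a mean follows. The readings are hypothetical, and using the normal critical value (about 1.96) is a simplifying assumption: for a sample this small, a t critical value would give a somewhat wider interval.

```python
import math
import statistics
from statistics import NormalDist

def standard_error(sd: float, n: int) -> float:
    return sd / math.sqrt(n)

# Hypothetical systolic BP readings in mmHg (illustrative only).
sample = [118, 122, 125, 130, 128, 121, 119, 127, 124, 126]

sample_mean = statistics.mean(sample)
se = standard_error(statistics.stdev(sample), len(sample))

# Normal critical value for a two-sided 95% interval (an approximation here).
z_crit = NormalDist().inv_cdf(0.975)
ci_95 = (sample_mean - z_crit * se, sample_mean + z_crit * se)

print(f"mean={sample_mean}, SE={se:.2f}, 95% CI=({ci_95[0]:.1f}, {ci_95[1]:.1f})")
```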

Connect these probability foundations through hypothesis testing frameworks to understand how statistical inference transforms clinical observations into evidence-based conclusions.

🔬 Hypothesis Testing: The Clinical Evidence Engine

Every clinical research question requires systematic hypothesis testing to generate reliable evidence. This process controls error rates while maximizing the probability of detecting true treatment effects.

📌 Remember: HATS - Hypotheses (null and alternative), Alpha level (Type I error), Test statistic calculation, Significance determination. These four steps ensure rigorous statistical inference in medical research.

Hypothesis Formulation establishes the research framework:

- Null Hypothesis (H₀): No difference or effect exists

- Default assumption requiring evidence to reject

- Example: "New drug equals placebo effectiveness"

- Mathematical form: μ₁ = μ₂ or p₁ = p₂

- Alternative Hypothesis (H₁ or Hₐ): Difference or effect exists

- Research hypothesis seeking evidence support

- Two-tailed: μ₁ ≠ μ₂ (difference in either direction)

- One-tailed: μ₁ > μ₂ or μ₁ < μ₂ (directional difference)

Error Types quantify decision-making risks:

- Type I Error (α) - False positive

- Rejecting true null hypothesis

- "Finding" effect when none exists

- Conventional threshold: α = 0.05 (5% false positive rate)

- Bonferroni correction: α/k for k multiple comparisons

- Type II Error (β) - False negative

- Failing to reject a false null hypothesis

- Missing real effect when it exists

- Power = 1 - β (probability of detecting true effect)

- Target power: ≥80% (β ≤ 0.20)

⭐ Clinical Pearl: Statistical significance (p < 0.05) does not guarantee clinical significance. A treatment reducing blood pressure by 2 mmHg may achieve statistical significance in large samples but lack meaningful clinical impact.

Test Selection Matrix matches statistical tests to data characteristics:

| Data Type | Groups | Distribution | Sample Size | Appropriate Test |

|---|---|---|---|---|

| Continuous | 2 independent | Normal | n ≥ 30 | Independent t-test |

| Continuous | 2 paired | Normal | n ≥ 30 | Paired t-test |

| Continuous | 2 independent | Non-normal | Any | Mann-Whitney U |

| Continuous | 2 paired | Non-normal | Any | Wilcoxon signed-rank |

| Continuous | 3+ groups | Normal | n ≥ 30 per group | One-way ANOVA |

| Categorical | 2+ groups | Any | Expected ≥ 5 | Chi-square test |

| Categorical | 2 groups | Any | Expected < 5 | Fisher's exact test |

P-value Interpretation requires careful understanding:

- P-value definition: Probability of observing data at least as extreme as those observed, assuming H₀ is true

- Common misconceptions:

- NOT the probability that H₀ is true

- NOT the probability of replication

- NOT the effect size magnitude

- Interpretation guidelines:

- p < 0.001: Very strong evidence against H₀

- p < 0.01: Strong evidence against H₀

- p < 0.05: Moderate evidence against H₀

- p ≥ 0.05: Insufficient evidence to reject H₀

💡 Master This: Effect size measures practical significance independent of sample size. Cohen's d for mean differences: d = (μ₁ - μ₂)/σ. Interpretation: d = 0.2 (small), d = 0.5 (medium), d = 0.8 (large effect).
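Cohen's d can be computed as sketched below. The two groups are hypothetical, and the pooled standard deviation is used for σ, which is the common convention when the two groups are assumed to have similar variances.

```python
import math
import statistics

def cohens_d(group_a: list, group_b: list) -> float:
    """Standardized mean difference using the pooled sample SD."""
    n_a, n_b = len(group_a), len(group_b)
    var_a = statistics.variance(group_a)
    var_b = statistics.variance(group_b)
    pooled_sd = math.sqrt(((n_a - 1) * var_a + (n_b - 1) * var_b) / (n_a + n_b - 2))
    return (statistics.mean(group_a) - statistics.mean(group_b)) / pooled_sd

# Hypothetical systolic BP (mmHg) after treatment vs. control.
treated = [128, 132, 125, 130, 135]
control = [138, 142, 135, 140, 145]

d = cohens_d(treated, control)
print(f"Cohen's d = {d:.2f}")
```

A negative d here simply means the treated group has the lower mean; the magnitude is what is compared against the 0.2 / 0.5 / 0.8 benchmarks.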

Confidence Intervals provide effect size estimation with uncertainty quantification:

- 95% CI interpretation: 95% of such intervals contain the true population parameter

- Relationship to hypothesis testing: CI excluding null value indicates significance

- Clinical utility: Shows both statistical significance and effect magnitude

- Formula for means: x̄ ± (critical value × SE)

Multiple Comparisons Problem inflates Type I error rates:

- Family-wise error rate: Probability of ≥1 Type I error across all tests

- Bonferroni correction: α_adjusted = α/k for k comparisons

- False Discovery Rate (FDR): Controls proportion of false positives among rejections

- Clinical impact: Uncorrected multiple testing leads to false positive findings
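The inflation of the family-wise error rate, and the Bonferroni correction for it, can be sketched in a few lines. The p-values are hypothetical, and the error-rate formula 1 - (1 - α)^k assumes the k tests are independent.

```python
def family_wise_error_rate(alpha: float, k: int) -> float:
    """P(at least one Type I error) across k independent tests at level alpha."""
    return 1 - (1 - alpha) ** k

def bonferroni_alpha(alpha: float, k: int) -> float:
    return alpha / k

# Hypothetical p-values from five secondary endpoints.
p_values = [0.001, 0.012, 0.03, 0.04, 0.20]
k = len(p_values)

fwer_uncorrected = family_wise_error_rate(0.05, k)
threshold = bonferroni_alpha(0.05, k)

significant_uncorrected = [p for p in p_values if p < 0.05]
significant_bonferroni = [p for p in p_values if p < threshold]

print(round(fwer_uncorrected, 3), threshold, significant_bonferroni)
```

With five tests, the chance of at least one false positive rises to roughly 23%, and only one of the four nominally significant results survives correction.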

⭐ Clinical Pearl: Number Needed to Treat (NNT) translates statistical significance into clinical utility: NNT = 1/(Risk_control - Risk_treatment). Lower NNT values indicate more clinically effective treatments.
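As a worked example of the NNT formula, the sketch below uses hypothetical event risks. By convention the result is rounded up to a whole number of patients.

```python
import math

def number_needed_to_treat(risk_control: float, risk_treatment: float) -> int:
    """NNT = 1 / absolute risk reduction, rounded up to whole patients."""
    absolute_risk_reduction = risk_control - risk_treatment
    if absolute_risk_reduction <= 0:
        raise ValueError("Treatment does not reduce risk; NNT is not defined.")
    # Round first to avoid floating-point noise pushing the ceiling up by one.
    return math.ceil(round(1 / absolute_risk_reduction, 6))

# Hypothetical trial: 20% event risk on control, 15% on treatment.
nnt = number_needed_to_treat(0.20, 0.15)
print(f"NNT = {nnt}")
```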

Power Analysis optimizes study design:

- Factors affecting power:

- Effect size: Larger effects easier to detect

- Sample size: More subjects increase power

- Alpha level: Lower α reduces power

- Variability: Less noise increases power

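- Sample size calculation (per group, comparing two means): n = 2σ²(Z_{α/2} + Z_β)²/Δ², where Δ is the difference to detect and σ is the common standard deviation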

- Post-hoc power: Calculated after study completion (controversial utility)
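The sample size formula for comparing two means can be evaluated as follows. This is a sketch under the formula's normal-approximation assumptions; the values of σ and Δ are hypothetical, and dedicated software that uses the t distribution typically returns a slightly larger n.

```python
import math
from statistics import NormalDist

def sample_size_per_group(sigma: float, delta: float,
                          alpha: float = 0.05, power: float = 0.80) -> int:
    """n per group = 2σ²(Z_{α/2} + Z_β)² / Δ², rounded up."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided critical value
    z_beta = NormalDist().inv_cdf(power)           # quantile for power = 1 - β
    n = 2 * sigma**2 * (z_alpha + z_beta) ** 2 / delta**2
    return math.ceil(n)

# Hypothetical design: detect a 5 mmHg difference when the SD is 10 mmHg.
n_per_group = sample_size_per_group(sigma=10, delta=5)
print(n_per_group)
```

Halving the detectable difference Δ quadruples the required sample size, which is why small effects demand large trials.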

⭐ Clinical Pearl: Intention-to-treat analysis preserves randomization benefits by analyzing patients in originally assigned groups regardless of treatment compliance. Per-protocol analysis examines only compliant patients but may introduce bias.

Connect these hypothesis testing principles through regression analysis to understand how multiple variables simultaneously influence clinical outcomes.

📈 Regression Analysis: The Predictive Medicine Framework

Regression analysis quantifies relationships between variables while controlling for confounders, enabling prediction of clinical outcomes and identification of independent risk factors.

📌 Remember: LIME - Linear for continuous outcomes, Independent variables selection, Model assumptions checking, Evaluation of fit quality. These components ensure robust regression modeling in clinical research.

Linear Regression models continuous outcome variables:

- Simple linear regression: Y = β₀ + β₁X + ε

- β₀: Y-intercept (outcome when X = 0)

- β₁: Slope (change in Y per unit change in X)

- ε: Random error term

- Multiple linear regression: Y = β₀ + β₁X₁ + β₂X₂ + ... + βₖXₖ + ε

- Controls multiple confounding variables simultaneously

- Each βᵢ represents independent effect of Xᵢ

- Clinical applications: Predicting blood pressure, cholesterol levels, survival time
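A simple linear regression can be fitted by ordinary least squares with nothing more than sums, as the sketch below shows. The age and blood pressure values are hypothetical; the function names are illustrative, not from any particular library.

```python
def fit_simple_linear_regression(x: list, y: list) -> tuple:
    """Least-squares estimates (β₀, β₁) for Y = β₀ + β₁X + ε."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    s_xy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    s_xx = sum((xi - mean_x) ** 2 for xi in x)
    slope = s_xy / s_xx
    intercept = mean_y - slope * mean_x
    return intercept, slope

def r_squared(x: list, y: list, intercept: float, slope: float) -> float:
    """Proportion of outcome variance explained by the fitted line."""
    mean_y = sum(y) / len(y)
    ss_res = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    return 1 - ss_res / ss_tot

# Hypothetical data: age in years vs. systolic BP in mmHg.
age = [30, 40, 50, 60, 70]
sbp = [118, 123, 131, 136, 142]

b0, b1 = fit_simple_linear_regression(age, sbp)
r2 = r_squared(age, sbp, b0, b1)
print(f"SBP ≈ {b0:.1f} + {b1:.2f} × age, R² = {r2:.3f}")
```

Here β₁ reads as the expected change in systolic pressure per additional year of age, within the observed age range.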

Model Assumptions must be verified for valid inference:

- Linearity: Relationship between X and Y is linear

- Check with scatterplots and residual plots

- Transform variables if non-linear patterns exist

- Independence: Observations are independent

- Violated in repeated measures or clustered data

- Use mixed-effects models for correlated data

- Homoscedasticity: Constant variance of residuals

- Check with residual vs. fitted value plots

- Transform Y variable if variance increases with fitted values

- Normality: Residuals follow normal distribution

- Check with Q-Q plots and Shapiro-Wilk test

- Important for small samples (n < 30)

⭐ Clinical Pearl: R-squared measures proportion of outcome variance explained by the model. R² = 0.70 indicates the model explains 70% of outcome variability. Adjusted R² penalizes for additional variables: preferred for model comparison.

Logistic Regression models binary clinical outcomes:

- Logistic function: P(Y=1) = e^(β₀+β₁X₁+...+βₖXₖ) / (1 + e^(β₀+β₁X₁+...+βₖXₖ))

- Odds ratio interpretation: OR = e^βᵢ

- OR > 1: Increased odds of outcome

- OR < 1: Decreased odds of outcome

- OR = 1: No association

- Clinical applications: Disease diagnosis, treatment response, mortality prediction
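The logistic function and the odds ratio interpretation can be connected with a short sketch. The coefficients below are hypothetical, not estimates from real data; the point is that exponentiating a coefficient reproduces the ratio of odds between two patients who differ only in that predictor.

```python
import math

def predicted_probability(intercept: float, coefficients: list, values: list) -> float:
    """P(Y=1) from the logistic function; 1/(1+e^-z) equals e^z/(1+e^z)."""
    z = intercept + sum(b * x for b, x in zip(coefficients, values))
    return 1 / (1 + math.exp(-z))

def odds(probability: float) -> float:
    return probability / (1 - probability)

# Hypothetical model: intercept, β for age (per year), β for smoking (0/1).
intercept = -5.0
betas = [0.05, 0.7]

p_smoker = predicted_probability(intercept, betas, [60, 1])
p_nonsmoker = predicted_probability(intercept, betas, [60, 0])

or_from_beta = math.exp(betas[1])                 # OR = e^β
or_from_probs = odds(p_smoker) / odds(p_nonsmoker)

print(round(p_smoker, 3), round(p_nonsmoker, 3), round(or_from_beta, 2))
```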

| Regression Type | Outcome Variable | Key Statistic | Interpretation | Clinical Example |

|---|---|---|---|---|

| Linear | Continuous | β coefficient | Change in Y per unit X | Blood pressure prediction |

| Logistic | Binary | Odds ratio (OR) | Odds change per unit X | Disease risk factors |

| Cox | Time-to-event | Hazard ratio (HR) | Risk change per unit X | Survival analysis |

| Poisson | Count | Rate ratio (RR) | Rate change per unit X | Infection frequency |

| Multinomial | Categorical | Relative risk | Category probability | Treatment choice |

Variable Selection Strategies determine which predictors enter the model:

- Forward selection: Start empty, add significant variables

- Backward elimination: Start full, remove non-significant variables

- Stepwise: Combination of forward and backward

- Clinical considerations: Include known confounders regardless of significance

- Multicollinearity: VIF > 10 indicates problematic correlation between predictors

💡 Master This: Akaike Information Criterion (AIC) balances model fit with complexity: AIC = -2ln(L) + 2k, where L is likelihood and k is parameter count. Lower AIC indicates better model, with differences >2 considered meaningful.

Model Validation ensures generalizability:

- Internal validation:

- Split-sample: Divide data into training (70%) and testing (30%)

- Cross-validation: k-fold (typically k = 10) repeated sampling

- Bootstrap: Resample with replacement (1000+ iterations)

- External validation: Test model on independent dataset

- Performance metrics:

- Sensitivity: True positive rate (TP/(TP+FN))

- Specificity: True negative rate (TN/(TN+FP))

- AUC: Area under ROC curve (0.5 = random, 1.0 = perfect)
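The performance metrics above can be computed directly from counts and predicted scores. In this sketch the AUC is calculated from its probabilistic interpretation: the chance that a randomly chosen patient with the outcome receives a higher score than a randomly chosen patient without it, with ties counted as half. All numbers are hypothetical.

```python
def sensitivity(tp: int, fn: int) -> float:
    return tp / (tp + fn)

def specificity(tn: int, fp: int) -> float:
    return tn / (tn + fp)

def auc(scores_positive: list, scores_negative: list) -> float:
    """Fraction of (positive, negative) pairs ranked correctly; ties count 0.5."""
    correctly_ranked = 0.0
    for sp in scores_positive:
        for sn in scores_negative:
            if sp > sn:
                correctly_ranked += 1
            elif sp == sn:
                correctly_ranked += 0.5
    return correctly_ranked / (len(scores_positive) * len(scores_negative))

# Hypothetical predicted risks for patients with and without the outcome.
diseased = [0.9, 0.8, 0.6, 0.4]
healthy = [0.7, 0.5, 0.3, 0.2]

model_auc = auc(diseased, healthy)
print(sensitivity(tp=90, fn=10), specificity(tn=80, fp=20), model_auc)
```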

Advanced Regression Techniques:

- Polynomial regression: Models non-linear relationships

- Y = β₀ + β₁X + β₂X² + ... + βₖXᵏ

- Risk of overfitting with high-order polynomials

- Ridge regression: Penalizes large coefficients

- Useful when p > n (more variables than observations)

- λ parameter controls penalty strength

- Lasso regression: Performs variable selection

- Sets some coefficients exactly to zero

- Automatic feature selection capability

⭐ Clinical Pearl: Calibration measures agreement between predicted and observed probabilities. The Hosmer-Lemeshow test assesses calibration quality: p > 0.05 indicates no significant evidence of miscalibration. In a well-calibrated model, a predicted 30% risk corresponds to an observed event rate of about 30%.

Interaction Terms capture synergistic effects:

- Statistical interaction: Y = β₀ + β₁X₁ + β₂X₂ + β₃(X₁×X₂)

- Clinical interpretation: Effect of X₁ depends on level of X₂

- Example: Drug effectiveness varies by patient age

- Testing: Compare models with and without interaction term

Confounding Control ensures valid causal inference:

- Confounding criteria:

- Associated with exposure

- Associated with outcome

- Not on causal pathway

- Control methods:

- Stratification: Analyze within confounder levels

- Regression adjustment: Include confounders as covariates

- Propensity scores: Balance treatment groups on confounders

⭐ Clinical Pearl: Simpson's Paradox occurs when association direction reverses after controlling for confounders. Always examine crude and adjusted associations to identify potential confounding effects.

Connect these regression principles through survival analysis to understand how time-to-event modeling addresses censored data and competing risks in clinical research.

⏱️ Survival Analysis: The Time-to-Event Mastery System

Survival analysis addresses the unique challenges of time-to-event data, including censoring, competing risks, and time-varying covariates that standard statistical methods cannot handle appropriately.

📌 Remember: CHEW - Censoring handling, Hazard function modeling, Event time analysis, Weibull and other distributions. These components enable robust analysis of time-to-event clinical data with incomplete observations.

Censoring Types define incomplete observation patterns:

- Right censoring - Most common in clinical studies

- Event has not occurred by end of follow-up

- Patient lost to follow-up before event

- Assumption: Censoring independent of event probability

- Left censoring - Event occurred before observation began

- Rare in prospective studies

- Example: Disease onset before study enrollment

- Interval censoring - Event occurred within time interval

- Example: Disease recurrence between clinic visits

Survival Function S(t) describes probability of surviving beyond time t:

- Definition: S(t) = P(T > t) where T is survival time

- Properties:

- S(0) = 1 (everyone alive at start)

- S(∞) = 0 (eventually everyone dies)

- Non-increasing function

- Median survival: Time when S(t) = 0.5

Kaplan-Meier Estimator provides non-parametric survival estimation:

- Formula: Ŝ(t) = ∏(tᵢ≤t) [(nᵢ - dᵢ)/nᵢ]

- nᵢ: Number at risk at time tᵢ

- dᵢ: Number of events at time tᵢ

- Step function: Decreases only when events occur

- Confidence intervals: Ŝ(t) ± 1.96 × SE[Ŝ(t)]

- Assumptions: Independent censoring, homogeneous population
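The product-limit formula can be implemented in a few lines. This is a minimal sketch on hypothetical follow-up data (1 = event, 0 = censored), not a replacement for a survival analysis library; subjects censored at an event time are treated as still at risk at that time, which is the usual convention.

```python
def kaplan_meier(times: list, events: list) -> list:
    """Return [(event_time, survival_estimate)] using Ŝ(t) = ∏ (nᵢ - dᵢ)/nᵢ."""
    at_risk = len(times)
    survival = 1.0
    curve = []
    for t in sorted(set(times)):
        deaths = sum(1 for ti, ei in zip(times, events) if ti == t and ei == 1)
        leaving = sum(1 for ti in times if ti == t)  # events plus censored at t
        if deaths > 0:
            survival *= (at_risk - deaths) / at_risk
            curve.append((t, survival))
        at_risk -= leaving
    return curve

# Hypothetical follow-up in months; 0 marks a censored observation.
times = [2, 3, 3, 5, 6, 8, 9, 12]
events = [1, 1, 0, 1, 0, 1, 0, 1]

km_curve = kaplan_meier(times, events)

# Median survival: first time the estimate falls to 0.5 or below (None if never).
median_survival = next((t for t, s in km_curve if s <= 0.5), None)

for t, s in km_curve:
    print(f"t={t}: S(t)={s:.3f}")
```

The estimate drops only at event times; censored patients leave the risk set without lowering the curve.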

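⭐ Clinical Pearl: Median survival is preferred over mean survival because it is less sensitive to outliers and censoring. It can only be estimated when the Kaplan-Meier curve falls to 0.5 or below; with heavy censoring the curve may never reach that level, and the median is then reported as not reached.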

Hazard Function h(t) describes instantaneous risk:

- Definition: h(t) = lim(Δt→0) P(t ≤ T < t+Δt | T ≥ t)/Δt

- Interpretation: Risk of event in next instant given survival to time t

- Relationship: S(t) = exp(-∫₀ᵗ h(u)du)

- Cumulative hazard: H(t) = ∫₀ᵗ h(u)du

| Survival Model | Hazard Function | Clinical Application | Key Parameter |

|---|---|---|---|

| Exponential | h(t) = λ | Constant risk over time | Rate λ |

| Weibull | h(t) = λγt^(γ-1) | Increasing/decreasing risk | Shape γ, Scale λ |

| Log-normal | Complex form | Early peak then decline | μ, σ parameters |

| Cox | h(t) = h₀(t)exp(βX) | Covariate effects | Baseline h₀(t) |

Cox Proportional Hazards Model relates covariates to the hazard:

- Model: h(t|X) = h₀(t) × exp(β₁X₁ + β₂X₂ + ... + βₖXₖ)

- Hazard ratio: HR = exp(βᵢ) for covariate Xᵢ

- Interpretation:

- HR = 2.0: Doubling of hazard (risk)

- HR = 0.5: Halving of hazard (protective)

- Assumptions: Proportional hazards over time

- Advantage: No need to specify baseline hazard distribution

💡 Master This: Proportional hazards assumption requires hazard ratios to remain constant over time. Test using Schoenfeld residuals or log-log plots. Violation requires time-varying coefficients or stratified Cox models.

Log-rank Test compares survival between groups:

- Null hypothesis: S₁(t) = S₂(t) for all t

- Test statistic: Follows χ² distribution with df = groups - 1

- Assumptions: Proportional hazards, independent censoring

- Power: Optimal when hazards are proportional

- Alternative: Wilcoxon (Gehan-Breslow) test, which gives more weight to early differences

Competing Risks occur when multiple events can terminate follow-up:

- Definition: Occurrence of one event prevents observation of others

- Example: Death from cardiovascular disease vs. cancer

- Cumulative incidence function: CIF(t) = P(T ≤ t, cause = k)

- Gray's test: Compares cumulative incidence between groups

- Fine-Gray model: Regression for competing risks

⭐ Clinical Pearl: Number needed to treat (NNT) from survival data: NNT = 1/[CIF₁(t) - CIF₂(t)] where CIF is cumulative incidence function. This translates survival differences into clinically meaningful treatment benefits.

Sample Size Calculation for survival studies:

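- Required events: E = (Z_{α/2} + Z_β)²/(p₁p₂[ln(HR)]²), where p₁ and p₂ are the allocation proportions; with 1:1 allocation this becomes E = 4(Z_{α/2} + Z_β)²/[ln(HR)]²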

- Total sample size: N = E/[p₁ × P(event in group 1) + p₂ × P(event in group 2)]

- Factors affecting power:

- Hazard ratio magnitude: HRs further from 1 are easier to detect

- Event rate: Higher rates provide more power

- Follow-up duration: Longer follow-up increases events

- Loss to follow-up: Reduces effective sample size
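The event and sample size calculation can be sketched using Schoenfeld's approximation, with the allocation proportions p₁ and p₂ written out explicitly. The hazard ratio and event probabilities below are hypothetical planning values, and the function names are illustrative.

```python
import math
from statistics import NormalDist

def required_events(hazard_ratio: float, alpha: float = 0.05,
                    power: float = 0.80, p1: float = 0.5) -> int:
    """Schoenfeld approximation: E = (Z_{α/2} + Z_β)² / (p₁ p₂ [ln HR]²)."""
    p2 = 1 - p1
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    events = (z_alpha + z_beta) ** 2 / (p1 * p2 * math.log(hazard_ratio) ** 2)
    return math.ceil(events)

def total_sample_size(events: int, p_event_1: float, p_event_2: float,
                      p1: float = 0.5) -> int:
    """N = E / overall probability of observing an event during follow-up."""
    overall_event_probability = p1 * p_event_1 + (1 - p1) * p_event_2
    return math.ceil(events / overall_event_probability)

# Hypothetical plan: detect HR = 0.7 with 1:1 allocation, 80% power, α = 0.05.
events_needed = required_events(hazard_ratio=0.7)
n_total = total_sample_size(events_needed, p_event_1=0.4, p_event_2=0.3)
print(events_needed, n_total)
```

Power in survival studies is driven by the number of events rather than the number of patients, which is why low event rates or short follow-up inflate the required enrollment.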

Advanced Survival Techniques:

- Frailty models: Account for unobserved heterogeneity

- Joint models: Combine longitudinal and survival data

- Cure models: Handle populations with immune fraction

- Accelerated failure time: Alternative to proportional hazards

⭐ Clinical Pearl: Restricted mean survival time (RMST) provides interpretable alternative to hazard ratios when proportional hazards assumption fails. RMST represents average survival time up to specified time point, with differences having clear clinical meaning.

Connect these survival analysis principles through clinical mastery frameworks to synthesize comprehensive biostatistical expertise for evidence-based medical practice.

🎯 The Biostatistics Mastery Arsenal: Your Clinical Evidence Toolkit

📌 Remember: MASTER - Methodology selection, Assumption verification, Sample size optimization, Test execution, Effect size interpretation, Results communication. These six pillars ensure rigorous statistical practice in clinical research.

Essential Statistical Arsenal for clinical practice:

- Descriptive Statistics Mastery

- Central tendency: Mean (μ), median, mode selection based on distribution

- Dispersion measures: Standard deviation (σ), IQR for robust analysis

- Distribution assessment: Skewness, kurtosis, normality testing

- Clinical thresholds: 95% reference intervals, percentile rankings

- Hypothesis Testing Framework

- Error control: α = 0.05, Power ≥ 80%, multiple comparison correction

- Test selection: Parametric vs. non-parametric based on assumptions

- Effect size: Cohen's d, odds ratios, hazard ratios with confidence intervals

- Clinical significance: NNT, NNH, absolute risk reduction

- Regression Modeling Expertise

- Variable selection: Clinical relevance, statistical significance, multicollinearity

- Model validation: Cross-validation, bootstrap, external validation

- Assumption checking: Linearity, independence, homoscedasticity, normality

- Prediction accuracy: AUC, calibration, discrimination metrics

| Analysis Type | Sample Size Rule | Power Requirement | Effect Size | Clinical Application |

|---|---|---|---|---|

| t-test | n ≥ 30 per group | 80% | d = 0.5 | Treatment comparison |

| Chi-square | Expected ≥ 5 | 80% | OR = 2.0 | Risk factor analysis |

| ANOVA | n ≥ 15 per group | 80% | η² = 0.14 | Multiple group comparison |

| Regression | 10-15 per variable | 80% | R² = 0.13 | Outcome prediction |

| Survival | 10 events per variable | 80% | HR = 2.0 | Time-to-event analysis |

Critical Numbers for Clinical Practice:

- Significance thresholds: p < 0.05 (moderate), p < 0.01 (strong), p < 0.001 (very strong)

- Confidence intervals: 95% standard, 99% for critical decisions

- Effect sizes: Small (d = 0.2, OR = 1.5), Medium (d = 0.5, OR = 2.5), Large (d = 0.8, OR = 4.0)

- Sample size factors: Power ≥ 80%, α ≤ 0.05, β ≤ 0.20

- Survival metrics: Median survival, 5-year survival rate, hazard ratios

💡 Master This: Bayesian thinking updates clinical beliefs with new evidence. Prior odds × Likelihood ratio = Posterior odds, where odds = probability/(1 - probability). This framework integrates clinical experience with statistical evidence for optimal decision-making.

Quality Assessment Framework:

- Study validity: Internal validity (bias control), external validity (generalizability)

- Statistical rigor: Appropriate methods, assumption verification, multiple comparison control

- Clinical relevance: MCID achievement, NNT calculation, cost-effectiveness

- Evidence strength: Effect size magnitude, confidence interval width, replication

⭐ Clinical Pearl: Publication bias favors statistically significant results, creating false positive literature. Funnel plots and fail-safe N calculations assess publication bias impact on meta-analyses and systematic reviews.

Advanced Integration Techniques:

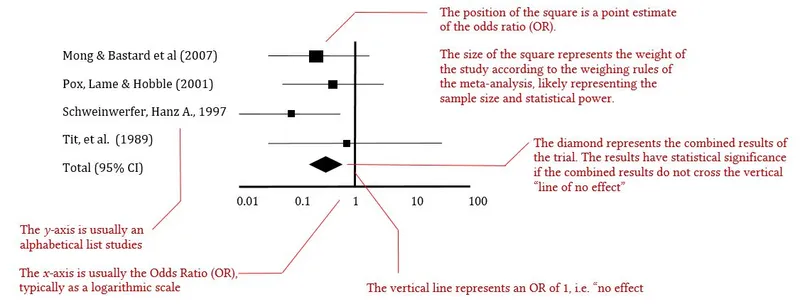

- Meta-analysis: Combines multiple studies for increased power

- Network meta-analysis: Compares multiple treatments simultaneously

- Individual patient data: Enables subgroup analysis and time-to-event modeling

- Machine learning: Handles high-dimensional data and complex interactions

- Causal inference: Estimates causal effects from observational data under explicit, often untestable, assumptions

⭐ Clinical Pearl: Heterogeneity assessment in meta-analysis uses I² statistic: I² < 25% (low), 25-75% (moderate), >75% (high heterogeneity). High heterogeneity suggests random-effects models and subgroup analysis are needed.
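The I² statistic is derived from Cochran's Q as I² = max(0, (Q - df)/Q) × 100%, where df is the number of studies minus one. That formula is the standard definition rather than something stated above, and the study effects and variances in this sketch are hypothetical.

```python
def cochran_q(effects: list, variances: list) -> tuple:
    """Return (Q, pooled effect) using inverse-variance (fixed-effect) weights."""
    weights = [1 / v for v in variances]
    pooled = sum(w * e for w, e in zip(weights, effects)) / sum(weights)
    q = sum(w * (e - pooled) ** 2 for w, e in zip(weights, effects))
    return q, pooled

def i_squared(q: float, n_studies: int) -> float:
    """Percentage of total variation attributable to between-study heterogeneity."""
    df = n_studies - 1
    if q <= 0:
        return 0.0
    return max(0.0, (q - df) / q) * 100

# Hypothetical log odds ratios and their variances from four studies.
effects = [0.10, 0.30, 0.50, 0.70]
variances = [0.01, 0.01, 0.01, 0.01]

q_stat, pooled_effect = cochran_q(effects, variances)
i2 = i_squared(q_stat, len(effects))
print(f"Q={q_stat:.1f}, pooled={pooled_effect:.2f}, I²={i2:.0f}%")
```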
