Medical Exam Active Recall: How Oncourse AI Makes Recall Practice Automatic

Discover how Oncourse AI automates active recall for medical exams. Learn why scheduled, targeted retrieval beats willpower-based study methods for NEET-PG, USMLE, and UKMLA success.

You probably spent 4 hours yesterday reviewing nephrology notes and still couldn't recall the diagnostic criteria for nephrotic syndrome during today's quiz. Sound familiar? You're not experiencing brain failure — you're experiencing the predictable result of passive review masquerading as serious study.

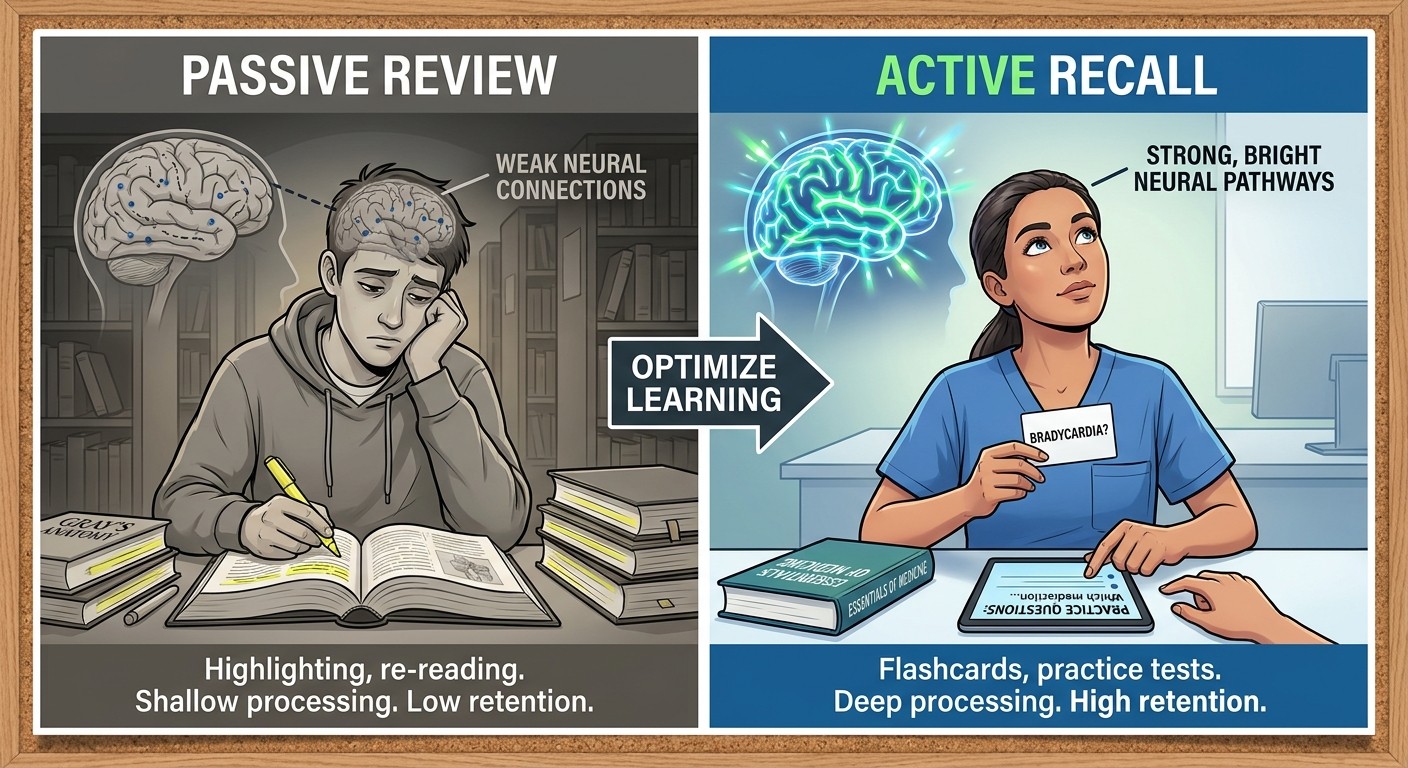

Medical exam active recall works. The evidence is strong: retrieving information from memory strengthens neural pathways more effectively than reading the same material 10 times. But here's where most students fail: they treat active recall like a willpower exercise. They try to remember to quiz themselves, struggle to identify what needs drilling, and abandon the method when life gets chaotic.

Active recall only delivers on its promise when it's scheduled, targeted, and repeated automatically. When the system handles the logistics, your brain handles the learning.

What Makes Medical Exam Active Recall Actually Work

Active recall isn't just testing yourself after study sessions. It's a systematic approach to memory strengthening that requires three components most students never implement correctly: precise scheduling, targeted retrieval, and automatic repetition.

Research published in the Yale Journal of Biology and Medicine reports that medical students using structured retrieval practice retain 40% more information after 6 months than those using traditional review methods, while spending less total study time.

The mechanism is straightforward. When you force your brain to retrieve information without looking at source material first, you create stronger neural pathways than recognition-based learning ever could. But the implementation is where students consistently fail.

Why Willpower-Based Active Recall Fails

Most students approach active recall like this: "I'll quiz myself on cardiology after I finish reading this chapter." Then life happens. The chapter takes longer than expected. They're tired. The quiz gets skipped. Three days later, they've forgotten half of what they read and never strengthened any retrieval pathways.

The problem isn't motivation. It's relying on human memory and willpower to manage a memory-strengthening system. Your brain can't be both the student and the scheduler.

The Science Behind Automated Recall Scheduling

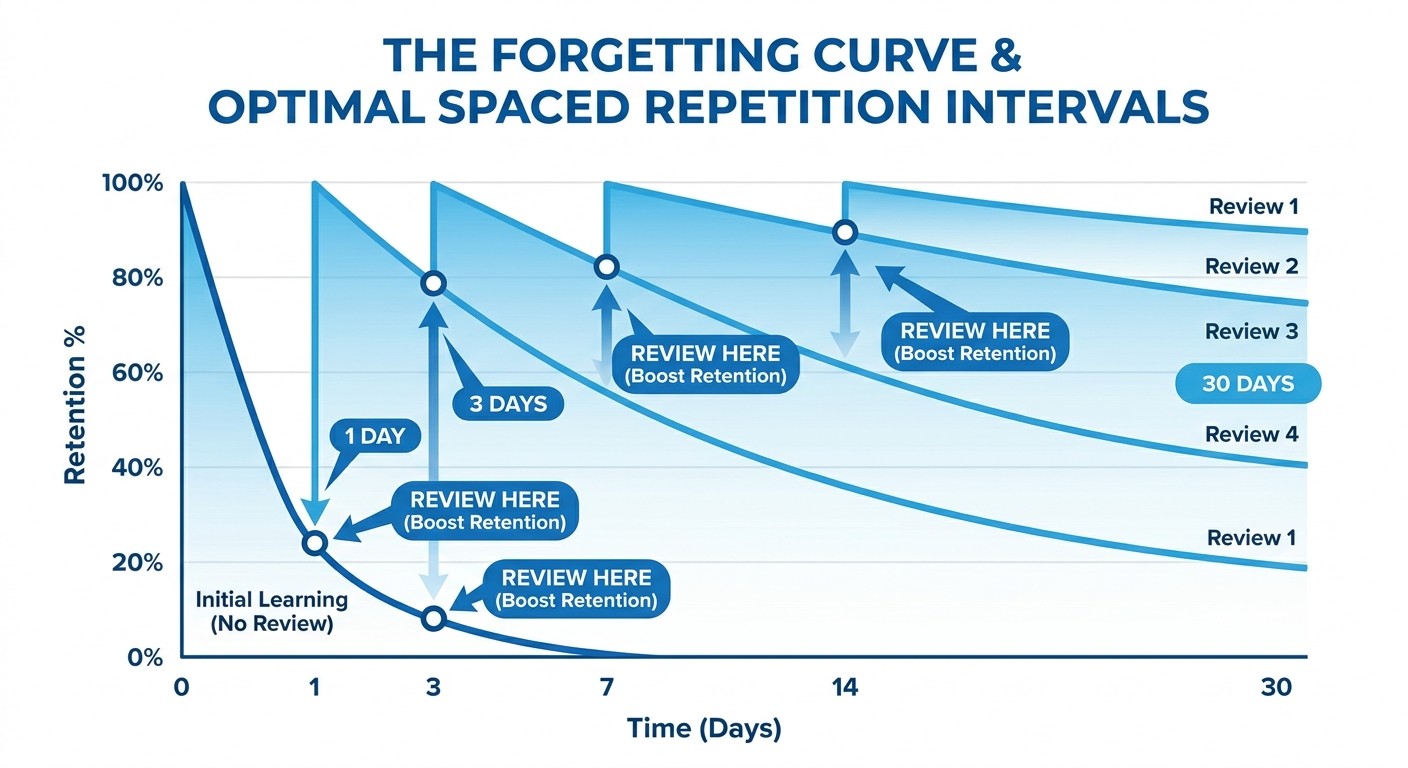

Ebbinghaus's forgetting curve shows that you lose approximately 50% of new information within 24 hours without reinforcement. But the curve isn't uniform across topics or individuals. You might retain USMLE pharmacology for 5 days but forget NEET-PG anatomy concepts after 36 hours.

Recent spaced repetition research in medical education reveals that optimal review timing varies based on three factors:

1. Initial encoding strength - How well you understood the concept initially

2. Personal retention patterns - Your individual forgetting curve for different subjects

3. Retrieval success rate - How consistently you've recalled this information previously

A 7-day interval works well for anatomy facts, but INICET clinical reasoning patterns might need 3-day cycles initially, then extend to 14-day intervals once mastered.

The math is complex, but the principle is simple: review each piece of information just before you would naturally forget it.
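One way to make "just before you would naturally forget it" concrete is a simple exponential forgetting model. The sketch below is illustrative only: the exponential form, the `stability_days` parameter, and the 80% review threshold are assumptions chosen for the example, not a description of how Oncourse computes intervals.

```python
import math

def retention(days_elapsed: float, stability_days: float) -> float:
    """Predicted recall probability under an assumed exponential forgetting model."""
    return math.exp(-days_elapsed / stability_days)

def days_until_threshold(stability_days: float, threshold: float = 0.8) -> float:
    """Days until predicted retention falls to `threshold` (a hypothetical review trigger)."""
    return -stability_days * math.log(threshold)

# Stability chosen so that retention is 50% after one day, matching the figure quoted above.
stability_half_in_one_day = 1 / math.log(2)

print(round(retention(1.0, stability_half_in_one_day), 2))         # 0.5
print(round(days_until_threshold(stability_half_in_one_day), 2))   # 0.32
```

Under these assumptions, a concept that is half-forgotten within a day would need a first review after roughly a third of a day. A larger `stability_days` (a better-learned concept) pushes the review further out, which is the intuition behind lengthening intervals.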

How Oncourse AI's Adaptive Daily Plan Automates Recall Practice

Traditional study schedules ignore your actual performance data. They assume you forget everything at the same rate and need identical review frequencies for all subjects.

Oncourse's Adaptive Daily Plan works differently. It converts your weak-area data into daily recall tasks automatically, so you know exactly what to retrieve each day without guessing or planning.

The system tracks three data streams that manual scheduling can't handle:

Accuracy patterns by specialty: If you're missing 7 out of 10 FMGE obstetrics questions but scoring 9/10 on pediatrics, it prioritizes obstetrics recall sessions over pediatrics review.

Individual forgetting curves: The algorithm measures how quickly your accuracy drops for each UPSC-CMS subject when you don't review it, then schedules the next recall session at the optimal moment.

Predicted vs actual retention: It doesn't just react to yesterday's mistakes. It predicts what you're likely to forget tomorrow and proactively schedules recall to prevent that forgetting.
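The accuracy-based prioritization described above can be sketched as a simple ranking. This is a minimal illustration, not Oncourse's actual algorithm; the function name `rank_by_weakness` and the `(correct, attempted)` data shape are hypothetical.

```python
def rank_by_weakness(results: dict[str, tuple[int, int]]) -> list[str]:
    """Order subjects from lowest to highest accuracy.

    `results` maps subject -> (correct, attempted); every subject is
    assumed to have at least one attempted question.
    """
    return sorted(results, key=lambda subject: results[subject][0] / results[subject][1])

# Missing 7 of 10 obstetrics questions means 3 correct; pediatrics is 9 of 10.
results = {"pediatrics": (9, 10), "obstetrics": (3, 10)}
print(rank_by_weakness(results))  # ['obstetrics', 'pediatrics']
```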

Every morning, you get a personalized list of exactly which concepts need active retrieval today. No planning, no guesswork, no willpower required.

Converting Passive Review Into Exam-Style Retrieval Practice

The biggest barrier to effective medical exam active recall isn't understanding the theory. It's converting traditional study materials into retrieval formats. Most students know they should quiz themselves but don't know how to create effective retrieval practice from textbook content.

Oncourse's adaptive question bank solves this conversion problem automatically. Instead of hoping you'll create good quiz questions from your NEET-PG pathology notes, the system presents tagged questions that target your weak areas through exam-style retrieval.

Here's why this matters: UKMLA questions don't ask you to recite drug mechanisms — they embed that knowledge in clinical vignettes requiring active retrieval under pressure. When you practice with "A 45-year-old presents with chest pain and diaphoresis. ECG shows ST elevation. Which medication provides the greatest mortality benefit?", you're strengthening the same retrieval pathways the actual exam will test.

The routing system ensures weak areas get more retrieval opportunities. If your USMLE cardiology accuracy is 73% but your nephrology sits at 91%, you'll see more cardiology questions requiring active recall until the performance gap closes.
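As a rough illustration of this kind of routing, the sketch below splits a session's questions in proportion to each subject's error rate. The proportional rule, the function name `allocate_questions`, and the 20-question session size are assumptions made for the example; the real routing logic is not documented here.

```python
def allocate_questions(accuracy: dict[str, float], total_questions: int) -> dict[str, int]:
    """Split a session's questions across subjects in proportion to error rate."""
    error_rates = {subject: 1.0 - acc for subject, acc in accuracy.items()}
    total_error = sum(error_rates.values())
    if total_error == 0:
        # No recorded errors anywhere: fall back to an even split.
        return {subject: total_questions // len(accuracy) for subject in accuracy}
    # Rounding means the counts may not always sum exactly to total_questions.
    return {
        subject: round(total_questions * rate / total_error)
        for subject, rate in error_rates.items()
    }

plan = allocate_questions({"cardiology": 0.73, "nephrology": 0.91}, total_questions=20)
print(plan)  # {'cardiology': 15, 'nephrology': 5}
```

With a 27% error rate against 9%, cardiology receives three times as many questions as nephrology under this rule, and the split evens out as the accuracy gap closes.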

Automated Spaced Repetition for High-Risk Concepts

Manual spaced repetition sounds appealing until you try tracking review schedules for 4,000 medical facts across 12 specialties. Which antimicrobials need review today? When did you last drill cranial nerve functions? What about that tricky enzyme pathway you keep confusing?

Oncourse's Synapses feature handles this complexity automatically. When you miss a question about beta-blocker contraindications, that concept gets scheduled for review in 2 days. Get it right? The interval extends to 5 days. Miss it again? Back to 1 day.
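The interval rule in that example can be written as a small state update. This is a sketch under stated assumptions: the `ConceptState` class and `record_answer` function are hypothetical names, and "miss it again" is read here as any miss after the first.

```python
from dataclasses import dataclass

@dataclass
class ConceptState:
    interval_days: int = 0  # days until the next scheduled review
    misses: int = 0         # how many times this concept has been missed so far

def record_answer(state: ConceptState, correct: bool) -> ConceptState:
    """Return the updated schedule for one concept after an answer."""
    if correct:
        # A correct answer extends the interval to 5 days.
        return ConceptState(interval_days=5, misses=state.misses)
    misses = state.misses + 1
    # First miss: review in 2 days. Any repeat miss: review in 1 day.
    interval = 2 if misses == 1 else 1
    return ConceptState(interval_days=interval, misses=misses)

state = ConceptState()
for correct in (False, True, False):
    state = record_answer(state, correct)
    print(state.interval_days)  # prints 2, then 5, then 1
```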

The system particularly focuses on high-risk concepts — information you've gotten wrong multiple times or topics that consistently trip up students preparing for your target exam. A pneumonia mnemonic that works for FMGE might need different retrieval patterns than UKMLA clinical reasoning scenarios.

Instead of hoping you'll remember to review weak areas, the spaced repetition algorithm reschedules missed concepts automatically so recall practice happens before forgetting wins.

Active Recall Implementation Across Different Medical Exams

Different medical exams require different active recall approaches, but the underlying principle remains consistent: force retrieval, measure retention, adjust scheduling.

NEET-PG Active Recall Strategy

NEET-PG emphasizes factual recall across broad specialties with high-yield pattern recognition. Effective active recall focuses on:

Drug mechanisms through clinical scenarios rather than isolated facts

Diagnostic criteria retrieval under time pressure (roughly a minute per question)

Cross-specialty connections that mirror exam question patterns

USMLE Step Preparation

USMLE questions embed factual knowledge in multi-step clinical reasoning. Active recall should target:

Pathophysiology chains that connect basic science to clinical presentation

Treatment algorithms through case-based retrieval rather than isolated drug lists

Diagnostic reasoning patterns that appear across multiple Step levels

UKMLA AKT and CPSA Approach

UKMLA emphasizes applied clinical knowledge and patient management. Effective retrieval practice includes:

Guideline-based management through realistic clinical scenarios

Communication skills concepts through situational recall prompts

Risk factor identification in context rather than as isolated lists

INICET and FMGE Focus Areas

These exams test broad medical knowledge with emphasis on Indian clinical patterns. Active recall should emphasize:

Tropical disease presentations that don't appear in international question banks

Indian-specific treatment protocols and drug availability patterns

Public health concepts through case-based retrieval scenarios

UPSC-CMS Comprehensive Coverage

This exam requires deep knowledge across all medical specialties with analytical thinking. Retrieval practice should target:

Complex pathophysiology through multi-organ system scenarios

Evidence-based medicine principles in clinical decision-making contexts

Specialty-specific diagnostic approaches through systematic recall drills

Measuring Active Recall Effectiveness in Medical Exam Prep

How do you know if your medical exam active recall system is working? Most students rely on intuitive feelings of confidence or short-term quiz performance, but these metrics don't predict exam success.

Effective measurement requires tracking three key indicators:

Retention decay rates: How quickly your accuracy drops when you don't review a subject. If your pharmacology scores fall from 85% to 65% after one week without review, that topic needs more frequent retrieval sessions.

Cross-topic interference: Whether learning new concepts weakens retrieval of previously mastered material. Strong active recall systems show minimal interference: your cardiology knowledge shouldn't degrade when you add respiratory pathology.

Retrieval speed under pressure: Time-to-correct-answer during practice sessions. NEET-PG allows roughly a minute per question; if you need 45 seconds to recall basic drug mechanisms, you won't have enough time left for clinical reasoning.
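The retention decay figure above reduces to simple arithmetic. The helper below is a hypothetical illustration that assumes a linear drop between two measurements, which is a simplification of real forgetting.

```python
def decay_points_per_day(start_pct: float, end_pct: float, days_without_review: float) -> float:
    """Average accuracy lost per day between two measurements (linear approximation)."""
    return (start_pct - end_pct) / days_without_review

# Pharmacology falling from 85% to 65% over one week without review.
rate = decay_points_per_day(85, 65, 7)
print(round(rate, 2))  # 2.86
```

Comparing this number across subjects gives a quick way to see which topics are fading fastest and therefore need shorter review intervals.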

The goal isn't perfect recall of every fact. It's building retrieval pathways strong enough to access information quickly during high-stakes exams.

Why Most Active Recall Systems Break Down

Students abandon active recall not because the science is wrong, but because manual implementation is unsustainable. Common breakdown points include:

Scheduling complexity: Tracking optimal review intervals for thousands of medical concepts overwhelms even organized students.

Content conversion barriers: Most study materials aren't designed for retrieval practice, requiring time-intensive conversion into quiz formats.

Performance blind spots: Students can't objectively identify which concepts need more retrieval practice versus which are already well-consolidated.

Motivation dependence: Relying on willpower to maintain consistent recall practice fails when exam stress peaks.

The solution isn't better willpower. It's automation that removes human decision-making from the logistics while preserving human effort for the actual learning.

Frequently Asked Questions

How long should each active recall session last for medical exams?

Research suggests 25-45 minute focused retrieval sessions work best for medical content. Longer sessions lead to fatigue that reduces retrieval quality; shorter sessions don't allow enough time for complex clinical scenarios. The key is consistent daily practice rather than marathon weekend sessions.

Can active recall replace reading medical textbooks entirely?

No, but it should become your primary learning method after initial exposure. Read to understand, then immediately convert that understanding into retrieval practice. The ratio should shift toward 70% active recall and 30% new content consumption as you progress through your prep timeline.

Which subjects benefit most from automated active recall scheduling?

High-volume factual subjects like pharmacology, anatomy, and microbiology show the biggest gains from automated scheduling. Clinical subjects like internal medicine and surgery benefit more from case-based active recall that mimics exam question formats.

How quickly should I expect to see improvement in exam scores?

Most students see measurable improvement in practice test scores within 2-3 weeks of consistent active recall practice. Long-term retention improvements become apparent after 6-8 weeks. The key is trusting the process during the initial difficulty phase when retrieval feels challenging.

Does active recall work for clinical reasoning or just fact memorization?

Active recall is actually more effective for clinical reasoning than pure memorization. When you practice retrieving diagnostic approaches and treatment algorithms through case scenarios, you strengthen the same cognitive pathways that exam questions will test.

What's the difference between active recall and just doing practice questions?

Practice questions can be passive if you're just recognizing correct answers without truly retrieving knowledge. Effective active recall requires attempting retrieval before seeing answer choices, explaining your reasoning process, and reviewing incorrect answers through additional retrieval attempts rather than simple re-reading.

Prepare smarter with Oncourse AI — adaptive MCQs, spaced repetition, and AI explanations built for medical exam success. Download free on Android and iOS.