Effect Size - Beyond P-Value Power

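- Effect size measures the magnitude of an intervention's effect or the strength of a relationship. The p-value, by contrast, only indicates whether a result at least this extreme would be unlikely if there were no true effect (statistical significance); it says nothing about how large the effect is.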

- It answers, "How much does it matter?" not just "Is there an effect?". It is a key indicator of clinical significance.

- Common Measures:

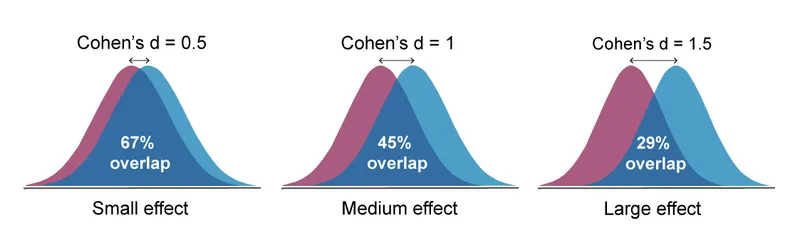

- Cohen's d: For differences between two means. Benchmarks: Small (0.2), Medium (0.5), Large (0.8).

- Odds Ratio (OR) / Relative Risk (RR): For comparing how often an event occurs between groups (categorical outcomes).

- Correlation Coefficient (r): For strength of association between two variables.

⭐ A very large sample size can make a clinically insignificant effect statistically significant (e.g., p < 0.05), but the effect size will remain small, revealing its limited practical importance.
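To make the Cohen's d benchmarks concrete, here is a minimal sketch that computes d from two small samples, assuming the common pooled-standard-deviation definition. The function names `cohens_d` and `benchmark_label` and the blood pressure readings are hypothetical and chosen only for illustration.

```python
import math
import statistics


def cohens_d(group_a, group_b):
    """Standardized mean difference using the pooled sample standard deviation."""
    n_a, n_b = len(group_a), len(group_b)
    var_a = statistics.variance(group_a)  # sample variance (n - 1 denominator)
    var_b = statistics.variance(group_b)
    pooled_sd = math.sqrt(
        ((n_a - 1) * var_a + (n_b - 1) * var_b) / (n_a + n_b - 2)
    )
    return (statistics.mean(group_a) - statistics.mean(group_b)) / pooled_sd


def benchmark_label(d):
    """Map |d| to the conventional benchmarks: 0.2 small, 0.5 medium, 0.8 large."""
    size = abs(d)
    if size >= 0.8:
        return "large"
    if size >= 0.5:
        return "medium"
    if size >= 0.2:
        return "small"
    return "negligible"


# Hypothetical systolic blood pressure readings (mmHg), for illustration only.
treated = [128, 131, 125, 135, 130, 127, 133, 129]
control = [134, 138, 131, 140, 136, 133, 139, 135]

d = cohens_d(treated, control)
print(f"Cohen's d = {d:.2f} ({benchmark_label(d)})")
```

The sign of d only reflects which group is subtracted from which; the benchmarks apply to its absolute value.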

Quantifying Impact - The Effect Size Zoo

- Effect Size: Measures the magnitude of an intervention's effect or a relationship's strength. Unlike the p-value, it does not shrink or grow systematically with sample size (a larger sample only estimates it more precisely), which makes it crucial for assessing clinical significance.

- Common Metrics for Group Differences:

- Cohen's d: Standardized difference between two means.

- Interpretation: Small (0.2), Medium (0.5), Large (0.8).

- Common Metrics for Association (Ratios):

- Odds Ratio (OR): Used in case-control studies. Odds of an event in one group vs. another.

- Relative Risk (RR): Used in cohort studies. Probability of an event in an exposed group vs. an unexposed group.

- Hazard Ratio (HR): Used in survival analysis. Ratio of the instantaneous event rates (hazards) in two groups over time.

- Interpreting Ratios (OR, RR, HR):

- > 1: ↑ risk/odds.

- < 1: ↓ risk/odds (protective).

- = 1: No difference.

⭐ For rare diseases (low prevalence), the Odds Ratio (OR) from a case-control study closely approximates the Relative Risk (RR).
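The rare-disease approximation can be checked numerically. The sketch below computes RR and OR from a 2x2 table for a rare and a common outcome. The counts are made up, and the table is treated as a hypothetical cohort so that both measures can be computed side by side (a real case-control study would yield only the OR).

```python
def risk_ratio(a, b, c, d):
    """RR for a 2x2 table.

    a: exposed with event,   b: exposed without event
    c: unexposed with event, d: unexposed without event
    """
    return (a / (a + b)) / (c / (c + d))


def odds_ratio(a, b, c, d):
    """OR for the same 2x2 table: (a/b) / (c/d) = (a*d) / (b*c)."""
    return (a * d) / (b * c)


# Hypothetical counts, for illustration only.
rare_outcome = (20, 9980, 10, 9990)    # risk 0.2% vs 0.1%
common_outcome = (400, 600, 200, 800)  # risk 40% vs 20%

for name, table in (("rare", rare_outcome), ("common", common_outcome)):
    rr = risk_ratio(*table)
    or_ = odds_ratio(*table)
    print(f"{name:>6}: RR = {rr:.2f}, OR = {or_:.2f}")
```

In both tables the risk doubles with exposure, but only for the rare outcome does the OR stay close to 2; for the common outcome the OR overstates the RR.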

Clinical Significance - The 'So What?' Test

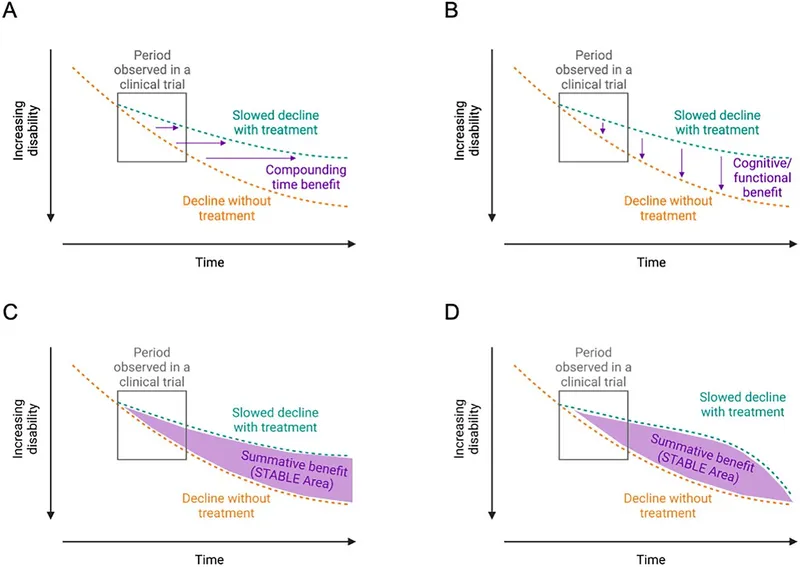

- Statistical significance (p < 0.05) indicates that a result at least this extreme would be unlikely if there were no true effect; it says nothing about the effect's magnitude or importance.

- Clinical significance asks if the effect is meaningful for patients and changes clinical practice. It's the "so what?" test.

- Assessed using the Minimal Clinically Important Difference (MCID): the smallest change in a treatment outcome that a patient would identify as important.

⭐ A study with a massive sample size can show a statistically significant result (e.g., a tiny drop in blood pressure) that is too small to be clinically meaningful.

- Statistical significance (p-value < 0.05) does not equal clinical significance.

- Effect size (e.g., Cohen's d, odds ratio) measures the magnitude of a difference, informing clinical relevance.

- Large sample sizes can yield statistically significant results for clinically meaningless effects.

- Confidence intervals help assess both statistical significance and the precision of the effect estimate.

- Clinical significance is a judgment call, considering the effect's magnitude, risks, benefits, and costs.
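The interplay of sample size, p-value, confidence interval, and MCID can be illustrated with a small simulation-free sketch. It assumes a fixed true difference in means, a known and equal standard deviation in both groups, and equal group sizes, so a simple two-sample z-test applies. The 1 mmHg difference, the 15 mmHg SD, and the 5 mmHg MCID are hypothetical values, not clinical recommendations.

```python
import math


def two_sample_z(mean_diff, sd, n_per_group):
    """Two-sided z-test p-value and 95% CI for a difference in means.

    Assumes a known, equal SD in both groups and equal group sizes.
    """
    se = sd * math.sqrt(2 / n_per_group)
    z = mean_diff / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail area
    ci = (mean_diff - 1.96 * se, mean_diff + 1.96 * se)
    return p_value, ci


# Hypothetical values, for illustration only.
MEAN_DIFF = -1.0  # observed drop in blood pressure (mmHg)
SD = 15.0         # assumed common standard deviation (mmHg)
MCID = 5.0        # assumed minimal clinically important difference (mmHg)

results = {}
for n in (100, 1_000, 100_000):
    p_value, (low, high) = two_sample_z(MEAN_DIFF, SD, n)
    # Even the most extreme value in the CI is compared against the MCID.
    reaches_mcid = max(abs(low), abs(high)) >= MCID
    results[n] = (p_value, low, high, reaches_mcid)
    print(
        f"n = {n:>7} per group: d = {MEAN_DIFF / SD:.3f}, p = {p_value:.3g}, "
        f"95% CI = ({low:.2f}, {high:.2f}), could reach MCID: {reaches_mcid}"
    )
```

The standardized effect stays the same at every sample size. Only the p-value and the width of the confidence interval change, and at the largest n the whole interval sits well below the assumed MCID despite a very small p-value.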
